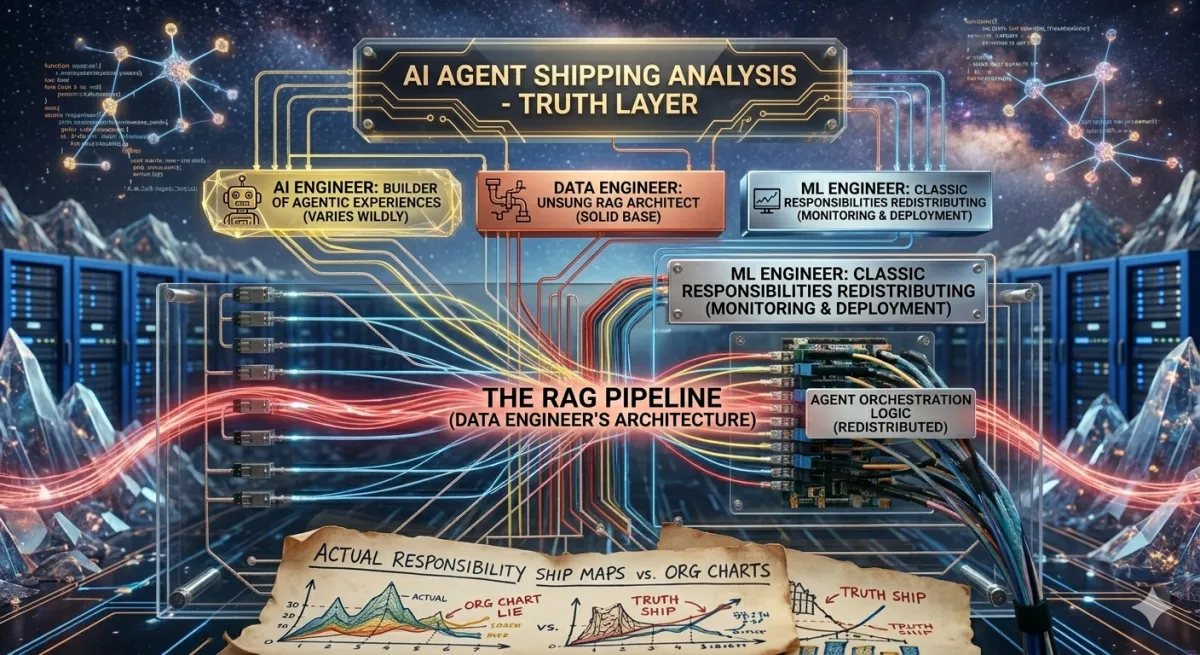

AI Engineer vs Data Engineer vs MLE: Who Actually Ships Agentic Systems?

Org charts are lying to you. The job title says “AI Engineer” but the work varies wildly. Data engineers are becoming the unsung architects of RAG pipelines. And ML engineers are watching their classic responsibilities redistribute. Here’s the honest picture.

Career | Roles | Agentic AI | April 2026 ~16 min read

The title that means nothing

A confession: “AI Engineer” doesn’t mean the same thing at any two companies. At one company it means fine-tuning open-source LLMs. At another it means writing YAML for MLflow. At a third it means building agentic orchestration pipelines from scratch. Job boards are useless here — the title “AI Engineer” appears on listings that span the entire stack from research to product.

The same murkiness affects data engineers and ML engineers. The arrival of RAG pipelines, agentic systems, and managed agent infrastructure has redistributed responsibilities across all three roles in ways that haven’t settled yet. Some data engineers now own more of the AI product stack than the people with “AI” in their title. Some ML engineers are watching their core work get abstracted away by managed services. Some AI engineers are doing what used to require an entire data platform team.

This article tries to give you the honest org chart, not the aspirational one.

The three roles, honestly

| Role | Core Identity | Classic Responsibilities |
|---|---|---|
| Data Engineer | The infrastructure owner | ETL/ELT pipelines, data warehouses + lakes, data quality + reliability, streaming infrastructure, access control + governance |
| ML Engineer | The model production owner | Training pipelines, model evaluation + evals, model serving infrastructure, feature stores, MLOps + drift monitoring |
| AI Engineer | The LLM integration owner | LLM API integration, prompt/agent engineering, RAG pipeline design, tool + harness design, guardrails + output validation |

In a pre-agentic organization, these roles had relatively clean handoffs. Data engineers built the pipelines. ML engineers trained models on the output. AI engineers integrated those models into products. The interfaces between them were well-defined and stable.

That clarity is gone. Agentic systems don’t respect those handoff points. An agent that reads a database needs data engineering skills to do it well. An agent that calls a fine-tuned model needs ML engineering to evaluate whether the model is behaving. An AI engineer building the agent harness needs to understand both.

The realistic org chart in 2026

What do these roles actually look like side-by-side in a team shipping an agentic system? Here’s the breakdown across three organizational contexts.

Startup / small team (3-8 engineers)

| Data Engineer | ML Engineer | AI Engineer |
|---|---|---|
| Builds document ingestion pipeline | Often doesn’t exist separately | Builds agent harness |
| Owns chunking strategy | Eval framework owned by AI eng | Prompt + tool design |
| Manages vector DB (Pinecone / Weaviate) | Fine-tuning if needed | Integrates with retrieval layer |
| Writes embedding pipelines | Performance benchmarking | Owns output validation |
| Sets up retrieval monitoring | | Ships user-facing product |

Mid-size company (50-500 engineers)

| Data Engineer | ML Engineer | AI Engineer |
|---|---|---|
| Owns data platform (Snowflake / Databricks) | Eval harness + LLM benchmarks | Agent harness architecture |
| RAG ingestion pipelines | Fine-tuning pipelines (if any) | MCP / tool integration |
| Embedding pipeline infrastructure | Model serving (vLLM / TGI) | HITL design |
| Data governance for AI inputs | Latency + cost monitoring | Safety + guardrail systems |
| Semantic search infrastructure | Drift detection for agent behavior | Works with both DE + MLE teams |

Enterprise (500+ engineers)

| Data Engineer | ML Engineer | AI Engineer |
|---|---|---|
| Enterprise data platform | Internal fine-tuning platform | Platform-level agent infrastructure |
| AI data governance (lineage, access) | Eval frameworks at scale | Agent standards + tooling |
| Knowledge graph infrastructure | Inference optimization | Cross-product orchestration |
| Cross-team data contracts | Red-teaming + adversarial testing | Vendor evaluation (Managed Agents, etc.) |
| Compliance + audit trails | Custom model deployment | Safety policy implementation |

How agentic systems change everything

Before agentic systems, each role had a clean primary output. Data engineers shipped pipelines. ML engineers shipped models. AI engineers shipped integrations. Agentic systems break this because the agent’s output quality depends on all three — simultaneously and continuously.

Here’s what actually changes for each role:

| Role | Classic Responsibility | What Agentic Systems Add | Net Effect |
|---|---|---|---|
| Data Engineer | Batch ETL, data warehouse, dashboards | Document ingestion, chunking strategy, vector DB, embedding pipelines, retrieval quality monitoring | More strategic: data quality now directly affects agent output quality |
| ML Engineer | Train/serve custom models, MLOps, feature stores | LLM eval frameworks, behavior drift detection, agent performance benchmarking, RLHF-based fine-tuning | Shifting: less training, more evaluation and LLM-specific ops |
| AI Engineer | LLM API wrappers, chatbots, simple integrations | Agent harness design, tool orchestration, memory architecture, safety systems, multi-agent coordination | Expanding scope: from API caller to system architect |

The data engineer shift is the least-discussed and most impactful. When an agent retrieves information from a knowledge base, the quality of that retrieval is a data engineering problem — chunking strategy, embedding model choice, index architecture, reranking. The AI engineer builds the harness that calls the retrieval system. The data engineer determines whether the retrieval system gives the agent anything useful to work with.

A brilliant agent harness on top of a poorly designed RAG pipeline produces confident, fluent, wrong answers. Many “hallucination” complaints in enterprise RAG deployments are really retrieval and data quality problems in disguise.

“Most agentic AI failures are engineering and architecture failures, not model failures. The data layer is where most of them originate.” — Practitioners in agentic systems, 2026
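To make the chunking-strategy point concrete, here is a minimal Python sketch of fixed-size word chunking with overlap. The function name `chunk_words`, the sample sentence, and the window sizes are hypothetical. Real pipelines often chunk by tokens, sentences, or document structure instead. The sketch shows how a fact that straddles a chunk boundary gets split in half when there is no overlap, and no amount of harness work downstream can repair that.

```python
def chunk_words(text: str, size: int, overlap: int = 0) -> list[str]:
    """Split text into windows of `size` words; neighboring windows share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reached the end of the text
    return chunks


doc = "Customers may request a refund within 30 days of delivery for online orders"
fact = "within 30 days"

no_overlap = chunk_words(doc, size=6)
with_overlap = chunk_words(doc, size=6, overlap=3)

print(any(fact in chunk for chunk in no_overlap))    # False: the fact straddles a boundary
print(any(fact in chunk for chunk in with_overlap))  # True: one window covers it whole
```

Overlap is not free. It inflates the index and can return near-duplicate chunks. That trade-off is exactly the kind of decision that sits with whoever owns the data layer.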

Who actually ships the agent — a realistic story

In the companies actually shipping production agentic systems right now, the answer is usually all three roles, with none of them feeling like they clearly own it. That creates interesting friction.

The AI engineer thinks the data engineer is too slow with the ingestion pipeline. The data engineer thinks the AI engineer is asking for the impossible — “make every document retrievable with 90% accuracy” without specifying what “accurate” means. The ML engineer is trying to run evals on a system that changes every time the data engineer updates the index and the AI engineer updates the prompt, making evaluation results non-comparable.

The organizations that ship fastest have solved for one thing: clear contracts between the layers. The data engineering layer owns a defined API (retrieval quality metric, latency SLA, data freshness guarantee). The ML engineering layer owns a defined eval harness (what “good” looks like for this agent, tested automatically). The AI engineering layer builds against those contracts without owning the implementation details underneath.
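One way to make those contracts explicit is to write them down as data and check them automatically. The Python sketch below is hypothetical: the class names, field names, and threshold values are illustrative assumptions, not a standard. It encodes the three data-layer guarantees named above (a retrieval quality metric, a latency SLA, and a freshness guarantee). It then reports which of them a measured snapshot violates. It also answers the "90% accuracy" problem by naming the metric being promised.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class RetrievalContract:
    """Hypothetical contract the data layer publishes to the AI engineering layer."""
    min_recall_at_5: float      # retrieval quality metric, measured on a labeled eval set
    max_p95_latency_ms: float   # latency SLA for one retrieval call
    max_staleness: timedelta    # freshness guarantee for the newest indexed data


@dataclass(frozen=True)
class RetrievalSnapshot:
    """Measured values, e.g. produced by the eval harness and monitoring."""
    recall_at_5: float
    p95_latency_ms: float
    last_indexed_at: datetime


def violations(contract: RetrievalContract, snap: RetrievalSnapshot, now: datetime) -> list[str]:
    """Return the broken guarantees as readable strings (empty list means the contract holds)."""
    problems = []
    if snap.recall_at_5 < contract.min_recall_at_5:
        problems.append(f"recall@5 {snap.recall_at_5:.2f} < {contract.min_recall_at_5:.2f}")
    if snap.p95_latency_ms > contract.max_p95_latency_ms:
        problems.append(f"p95 latency {snap.p95_latency_ms:.0f} ms > {contract.max_p95_latency_ms:.0f} ms")
    if now - snap.last_indexed_at > contract.max_staleness:
        problems.append(f"index is older than {contract.max_staleness}")
    return problems


contract = RetrievalContract(min_recall_at_5=0.85, max_p95_latency_ms=300, max_staleness=timedelta(hours=24))
now = datetime(2026, 4, 1, 12, 0, tzinfo=timezone.utc)
snap = RetrievalSnapshot(recall_at_5=0.78, p95_latency_ms=210, last_indexed_at=now - timedelta(hours=30))
for problem in violations(contract, snap, now):
    print(problem)
```

Running a check like this in CI, or on a schedule, turns "the retrieval feels worse" into a concrete, assignable failure.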

Managed agent services like Claude Managed Agents are attempting to collapse the boundaries further — handling much of the harness layer so AI engineers focus on task definition and guardrails. That shift is real. It doesn’t eliminate the roles; it raises the floor of what each person can assume is handled.

The data engineer pivot: a concrete roadmap

Data engineers are better positioned than almost anyone to move into AI engineering roles. The hard skills transfer directly — pipeline thinking, data quality obsession, infrastructure ownership. The gap is at the application layer: prompts, agents, harness design, evals.

Here’s a concrete 5-month progression. Not a course list — an actual skill acquisition path with specific deliverables at each stage.

Month 1: Build a RAG pipeline from scratch, without LangChain. Write the embedding pipeline yourself by calling an embeddings API directly: OpenAI’s, or Voyage AI’s (Anthropic doesn’t offer its own embedding model, and its docs point to providers such as Voyage AI). Build chunking logic. Set up Pinecone or Qdrant. Implement basic similarity search. Query it with an LLM. Understand what breaks and why. Starting with a framework means you don’t understand the pieces that will fail on you in production. Deliverable: a working RAG system you built piece by piece.
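The retrieval half of Month 1 fits in a few dozen lines. The Python sketch below shows its shape: chunk, embed, store, and rank by cosine similarity. To stay self-contained and runnable, it makes two substitutions, both illustrative only. A toy hashed bag-of-words `embed` function stands in for a real embeddings API call, and an in-memory `InMemoryIndex` class (hypothetical) stands in for Pinecone or Qdrant. The top chunks would then be pasted into the LLM prompt.

```python
import hashlib
import math
import re

DIM = 256  # toy embedding size; real embedding models produce much richer vectors


def embed(text: str) -> list[float]:
    """Stand-in for an embeddings API: hashed bag-of-words vector, L2-normalized."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        # hashlib keeps buckets stable across processes, unlike the salted built-in hash().
        bucket = int(hashlib.sha256(token.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def chunk_paragraphs(doc: str) -> list[str]:
    """Simplest possible chunking: one chunk per non-empty paragraph."""
    return [p.strip() for p in doc.split("\n\n") if p.strip()]


class InMemoryIndex:
    """Stand-in for a vector DB: stores (chunk, vector) pairs and searches by brute force."""

    def __init__(self) -> None:
        self.items: list[tuple[str, list[float]]] = []

    def add(self, chunk: str) -> None:
        self.items.append((chunk, embed(chunk)))

    def search(self, query: str, k: int = 2) -> list[tuple[float, str]]:
        q = embed(query)
        # Vectors are unit length, so the dot product equals cosine similarity.
        scored = [(sum(a * b for a, b in zip(q, v)), chunk) for chunk, v in self.items]
        return sorted(scored, reverse=True)[:k]


docs = """Refunds are accepted within 30 days of delivery.

Shipping to Canada takes 5 to 7 business days.

Gift cards cannot be exchanged for cash."""

index = InMemoryIndex()
for chunk in chunk_paragraphs(docs):
    index.add(chunk)

for score, chunk in index.search("Are refunds accepted after delivery?", k=1):
    print(f"{score:.2f}  {chunk}")
```

Swapping `embed` for a real API call and `InMemoryIndex` for a vector DB client changes the plumbing, not the shape. Each piece you replace is also a piece that can fail independently in production.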

Month 2: Build and measure an eval framework. Retrieval quality (precision@k, recall@k). Generation quality (RAGAS or a custom rubric). Hallucination rate (compare generated answers to source documents programmatically). Learn why LLM outputs are non-deterministic and why this makes evals hard. This is the skill most tutorials skip — and the one that separates engineers who can build agents from engineers who can ship agents. Deliverable: automated eval pipeline with a dashboard.
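The retrieval metrics named above are short enough to write yourself, which is a good way to learn exactly what they measure. The Python sketch below computes precision@k and recall@k for one query against a hand-labeled set of relevant chunk IDs. It also adds a deliberately naive groundedness check: the share of answer tokens that appear in the retrieved sources. That check is a hypothetical placeholder for a proper hallucination metric, such as an LLM judge or RAGAS. The chunk IDs and texts are made up for illustration.

```python
import re


def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved IDs that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for doc_id in retrieved[:k] if doc_id in relevant) / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant IDs that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def _tokens(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def token_support(answer: str, sources: list[str]) -> float:
    """Naive groundedness proxy: share of answer tokens that occur somewhere in the sources."""
    vocab = {t for s in sources for t in _tokens(s)}
    answer_tokens = _tokens(answer)
    if not answer_tokens:
        return 1.0
    return sum(t in vocab for t in answer_tokens) / len(answer_tokens)


retrieved = ["doc7", "doc2", "doc9", "doc4", "doc1"]
relevant = {"doc2", "doc4", "doc8"}
print(precision_at_k(retrieved, relevant, k=5))  # 2 of 5 retrieved are relevant -> 0.4
print(recall_at_k(retrieved, relevant, k=5))     # 2 of 3 relevant were found -> 0.666...

sources = ["Refunds are accepted within 30 days of delivery."]
print(token_support("Refunds are accepted within 30 days.", sources))  # 1.0
print(token_support("Refunds are accepted within 90 days.", sources))  # 5 of 6 tokens -> 0.833...
```

The last line shows why the token-overlap proxy is only a starting point. A single wrong number, which is precisely the dangerous kind of hallucination, lowers the score only to about 0.83.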

Month 3: Build a simple agent harness with tool calling. Implement the ReAct loop manually (Thought -> Action -> Observation -> repeat). Add two tools: one that queries your RAG pipeline, one that calls an external API. Add basic error handling. Add a permission layer for the external API call. Understand how context accumulates in multi-turn agent loops. Your data pipeline background makes this easier — you already think in terms of stages and checkpoints. Deliverable: working agent that uses your RAG system as a tool.
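Here is a minimal sketch of that loop in Python. To keep it self-contained, it makes several assumptions. A scripted `fake_llm` takes the place of a real model call. A made-up text protocol (`Action: tool[input]` / `Final Answer: ...`) is used for parsing, whereas real tool-calling APIs return structured tool calls instead. The two tools and the `EXTERNAL_TOOLS` permission check are hypothetical stand-ins for the RAG query and the external API call described above.

```python
import re
from typing import Callable


def search_docs(query: str) -> str:
    """Hypothetical tool wrapping the RAG pipeline."""
    return "Refunds are accepted within 30 days of delivery."


def fetch_order_status(order_id: str) -> str:
    """Hypothetical tool wrapping an external API."""
    return f"Order {order_id} was delivered 12 days ago."


TOOLS: dict[str, Callable[[str], str]] = {
    "search_docs": search_docs,
    "fetch_order_status": fetch_order_status,
}
EXTERNAL_TOOLS = {"fetch_order_status"}  # permission layer: these need explicit approval


def fake_llm(transcript: str) -> str:
    """Scripted stand-in for a model call: picks the next step from what it has seen so far."""
    if "Observation: Refunds" not in transcript:
        return "Thought: I need the refund policy.\nAction: search_docs[refund policy]"
    if "Observation: Order" not in transcript:
        return "Thought: I need the delivery date.\nAction: fetch_order_status[A123]"
    return "Thought: 12 days is within 30.\nFinal Answer: Yes, order A123 is still eligible."


ACTION_RE = re.compile(r"Action: (\w+)\[(.*)\]")


def react(question: str, approve: Callable[[str, str], bool], max_steps: int = 5) -> str:
    transcript = f"Question: {question}"
    for _ in range(max_steps):
        step = fake_llm(transcript)           # Thought + Action (or Final Answer)
        transcript += "\n" + step             # context accumulates on every turn
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(step)
        if match is None:
            observation = "Error: could not parse an action."
        else:
            name, arg = match.groups()
            if name not in TOOLS:
                observation = f"Error: unknown tool {name!r}."
            elif name in EXTERNAL_TOOLS and not approve(name, arg):
                observation = f"Error: permission denied for {name}."
            else:
                try:
                    observation = TOOLS[name](arg)
                except Exception as exc:  # basic error handling: report to the model, don't crash
                    observation = f"Error: {exc}"
        transcript += f"\nObservation: {observation}"
    return "Stopped: step limit reached."


print(react("Can I still return order A123?", approve=lambda tool, arg: True))
# Yes, order A123 is still eligible.
```

If `approve` returns False, the agent keeps asking for the blocked tool until `max_steps` runs out. This is why a step limit belongs in even the simplest harness.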

Month 4: Deploy it and instrument it. Containerize with Docker. Deploy to AWS/GCP/Azure. Add logging for every LLM call (inputs, outputs, latency, token counts). Add retrieval logging. Set up alerts for hallucination rate above threshold. This is where your data engineering background is a genuine advantage — most AI engineers are weaker at the observability layer than you are. Instrument everything. You’ll need it when something breaks silently at 3 a.m. Deliverable: deployed agent with full observability.
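Here is a minimal sketch of per-call instrumentation in Python, using only the standard library. Several pieces are hypothetical: the `call_llm` stub with its fake token counts, the log field names, and the alert threshold. In a real deployment, the token counts come from the provider’s API response, the records go to your log pipeline, and the per-answer "flagged" verdict comes from your eval pipeline. The pattern is a decorator that emits one structured JSON line per LLM call. A rolling check fires when the measured hallucination rate crosses a threshold.

```python
import functools
import json
import logging
import time
from collections import deque

logging.basicConfig(level=logging.INFO, format="%(message)s")
log = logging.getLogger("llm")


def instrumented(fn):
    """Log inputs, outputs, latency, and token counts for every LLM call as one JSON line."""
    @functools.wraps(fn)
    def wrapper(prompt: str) -> dict:
        start = time.perf_counter()
        result = fn(prompt)
        log.info(json.dumps({
            "event": "llm_call",
            "prompt": prompt,
            "output": result["text"],
            "latency_ms": round((time.perf_counter() - start) * 1000, 1),
            "input_tokens": result["input_tokens"],
            "output_tokens": result["output_tokens"],
        }))
        return result
    return wrapper


@instrumented
def call_llm(prompt: str) -> dict:
    """Hypothetical stub; a real client would return the provider's own usage counts."""
    return {"text": "stub answer", "input_tokens": len(prompt.split()), "output_tokens": 2}


class HallucinationAlarm:
    """Alert when the flagged-answer rate over the last `window` calls exceeds `threshold`."""

    def __init__(self, window: int = 100, threshold: float = 0.05, min_samples: int = 20) -> None:
        self.flags: deque = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples  # avoid alerting on the first one or two calls

    def record(self, flagged: bool) -> bool:
        """Record one eval verdict (e.g. a failed groundedness check); return True if alerting."""
        self.flags.append(flagged)
        if len(self.flags) < self.min_samples:
            return False
        rate = sum(self.flags) / len(self.flags)
        if rate > self.threshold:
            log.warning(json.dumps({"event": "hallucination_alert", "rate": round(rate, 3)}))
            return True
        return False


call_llm("What is the refund window?")
alarm = HallucinationAlarm(window=20, threshold=0.05, min_samples=10)
results = [alarm.record(flagged=False) for _ in range(20)]
results += [alarm.record(flagged=True), alarm.record(flagged=True)]
print(results[-2:])  # [False, True]: 1/20 is not above 5%, 2/20 is
```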

Month 5: Learn MCP and the managed agent ecosystem. Set up a local MCP server. Expose one of your data tools via MCP. Connect it to Claude Code. Experiment with Claude Managed Agents beta if you have access. Study the Claude Code harness patterns (tool search, context compaction, CLAUDE.md). Understand what Managed Agents abstracts vs what you still own. This puts you at the current frontier — the people who understand both the plumbing and the abstractions over it are the ones getting hired right now. Deliverable: MCP-connected agent, writeup of what Managed Agents handles vs what you own.
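Before reaching for an SDK, it helps to see what "exposing a data tool via MCP" means on the wire. MCP messages are JSON-RPC 2.0. A client discovers tools with `tools/list` and invokes one with `tools/call`. The Python sketch below is a hand-rolled, hypothetical dispatcher for just those two methods, with a made-up `row_count` tool. It skips the initialization handshake and the transport layer that a real server needs, so for actual use, prefer the official MCP SDKs.

```python
import json

# Hypothetical data tool backing store; in practice this would query your warehouse.
TABLES = {"orders": 1200, "customers": 340}

TOOL_DEFS = [{
    "name": "row_count",
    "description": "Return the number of rows in a warehouse table.",
    "inputSchema": {  # JSON Schema describing the tool's arguments
        "type": "object",
        "properties": {"table": {"type": "string"}},
        "required": ["table"],
    },
}]


def handle(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request for the tools/list and tools/call methods."""
    req = json.loads(raw)
    reply = {"jsonrpc": "2.0", "id": req.get("id")}
    method, params = req.get("method"), req.get("params", {})
    if method == "tools/list":
        reply["result"] = {"tools": TOOL_DEFS}
    elif method == "tools/call":
        if params.get("name") != "row_count":
            reply["error"] = {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}
        else:
            table = params.get("arguments", {}).get("table")
            if table in TABLES:
                text, is_error = str(TABLES[table]), False
            else:
                # Tool-level failures go back as a normal result flagged with isError.
                text, is_error = f"No such table: {table}", True
            reply["result"] = {"content": [{"type": "text", "text": text}], "isError": is_error}
    else:
        reply["error"] = {"code": -32601, "message": "Method not found"}
    return json.dumps(reply)


request = {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
           "params": {"name": "row_count", "arguments": {"table": "orders"}}}
print(handle(json.dumps(request)))
```

Seeing the raw messages makes it easier to judge what an SDK, or a managed service, is doing for you and what you still own.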

What you keep and what you learn

For a data engineer making this pivot:

| Keep from your DE background | New skills to acquire |
|---|---|
| Pipeline architecture thinking | LLM API integration patterns |
| Data quality obsession | Prompt engineering + system prompts |
| Infrastructure ownership | Agent harness design (ReAct loop) |
| SQL + Python proficiency | Vector DB + embedding pipelines |
| Distributed systems intuition | LLM eval frameworks |
| Observability / monitoring | Tool calling + MCP |
| | Guardrails + output validation |
| | Context management patterns |

The salary differential for making this transition is real — LLM-focused engineers are being paid 25-40% more than generalist data practitioners at comparable experience levels in 2026, reflecting both production skills and genuine scarcity. But the primary reason to make the pivot is that the work is more interesting. Agentic systems are the current frontier of what software can do, and data engineers — with their pipeline intuition and infrastructure ownership mindset — are unusually well-suited to build them well.

If you can answer “what percentage of my agent’s failures are retrieval failures vs generation failures vs tool failures?” — you’re thinking like an AI engineer, not just a data engineer. That question requires evals (ML engineering), retrieval instrumentation (data engineering), and harness observability (AI engineering). The role convergence is real. The people who own all three layers are the most effective builders of agentic systems right now.
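Answering that question is mostly a matter of tagging each failed run with the layer that broke and counting. Below is a hypothetical Python sketch. Its trace fields and attribution rules are simplifying assumptions you would tune to your own logs: if no relevant chunk was retrieved, it counts as a retrieval failure; if a tool errored, a tool failure; anything else, a generation failure. Each field maps to one of the three disciplines named above.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Trace:
    """One logged agent run; field names are hypothetical."""
    passed_eval: bool         # from the eval harness (ML engineering)
    relevant_retrieved: bool  # from retrieval instrumentation (data engineering)
    tool_error: bool          # from harness observability (AI engineering)


def attribute(trace: Trace) -> str:
    """Assign a failed run to the first layer that broke, checking retrieval first."""
    if not trace.relevant_retrieved:
        return "retrieval"
    if trace.tool_error:
        return "tool"
    return "generation"


def failure_breakdown(traces: list[Trace]) -> dict[str, float]:
    """Percentage of failed runs attributed to each layer, largest share first."""
    failures = [attribute(t) for t in traces if not t.passed_eval]
    if not failures:
        return {}
    counts = Counter(failures)
    return {layer: round(100 * n / len(failures), 1) for layer, n in counts.most_common()}


traces = (
    [Trace(True, True, False)] * 90     # successful runs
    + [Trace(False, False, False)] * 6  # the right context was never retrieved
    + [Trace(False, True, True)] * 1    # a tool call failed
    + [Trace(False, True, False)] * 3   # good context, wrong answer
)
print(failure_breakdown(traces))  # {'retrieval': 60.0, 'generation': 30.0, 'tool': 10.0}
```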

The bottom line

There’s no clean answer to “who ships agentic systems?” because the question assumes cleaner role boundaries than actually exist. The honest answer: teams with data engineers who understand retrieval quality, ML engineers who can build eval frameworks, and AI engineers who can design a harness — all working against agreed contracts — ship agentic systems. Any one of those roles missing or unclear creates a specific class of failure.

The good news for data engineers considering the pivot: you own the layer that has the most leverage on overall system quality, and you already have the skills that most AI engineers are weakest at. The gap to close is real but finite. Five months of focused, project-based work is a plausible timeline to start getting hired into AI engineering roles with a data engineering background.

The bad news: “AI Engineer” still doesn’t mean the same thing at any two companies. Do the due diligence on what the role actually owns before you accept the title.

Sources

- Neil Dave — Role distinction analysis, April 2026

- NuCamp — AI vs ML engineer comparison, 2026

- Udacity — Agentic AI engineer defined, 2026

- DigitalDefynd — Data engineer vs AI engineer, 2026

- Vettio — Salary data, February 2026

If you’re a data engineer looking to understand the foundational patterns behind pipelines, data quality, and the lifecycle that makes agentic systems work, Fundamentals of Data Engineering by Joe Reis and Matt Housley covers the principles that transfer directly into AI engineering roles.

Disclaimer: This article is based on publicly available role analyses, salary reports, and community observations as of April 2026. The author has no affiliation with any of the companies or platforms mentioned. Salary figures and timelines are representative ranges sourced from multiple public reports — individual org structures vary significantly. The roadmap is designed for data engineers with 2+ years of experience and solid Python proficiency. This article contains affiliate links — purchasing through them supports this blog at no extra cost to you.
