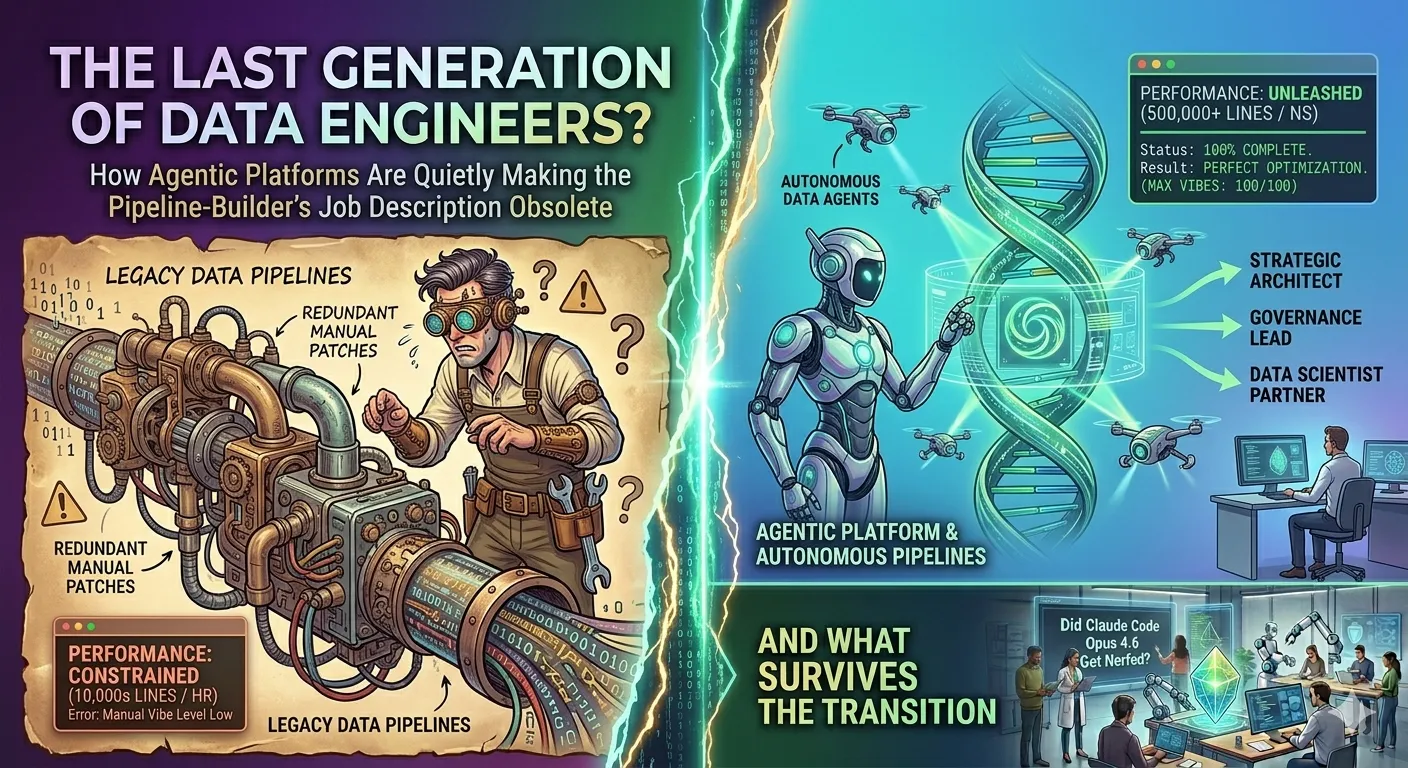

The Last Generation of Data Engineers?

How agentic platforms are quietly making the pipeline-builder’s job description obsolete — and what survives the transition.

Data Engineering | AI Agents | Opinion | April 2026

~12 min read

Disclaimer: This article is an independently written analysis inspired by themes from a LinkedIn piece on agentic AI and data engineering. All statistics cited are sourced from publicly available research as of early 2026. Projections about role displacement reflect industry research and are not guarantees of any specific outcome. Code examples are illustrative, not production-ready. Views expressed are analytical, not advisory.

The joke that stopped being funny

There’s a running joke among senior data engineers that goes something like this: every five years, someone declares that data engineers are about to be replaced. Hadoop engineers were going to be obsolete when Spark arrived. ETL developers were supposed to disappear when dbt showed up. And yet, the job boards kept filling up, the salaries kept climbing, and the Slack channels stayed chaotic.

This time feels different. Not because the technology is louder or the hype is bigger — but because the direction of the shift has changed. Previous waves of tooling made data engineers more productive. Agentic AI, the breed of autonomous, goal-directed AI systems now entering production at serious organizations, is making data engineers less necessary for the work they’ve always done.

That’s not a catastrophe. But it is a genuine inflection point — and one worth understanding clearly, without either the panic or the cheerleading that tends to follow these conversations.

“Previous waves of tooling made data engineers more productive. Agentic AI is making them less necessary for the work they’ve always done.”

What data engineers actually spend their time on

Before talking about what’s being automated away, it helps to be honest about what the job actually involves in most organizations. Not the idealized version from job postings — the real one.

A typical data engineer’s week breaks down roughly like this: building and debugging ingestion pipelines, wrangling schema drift, writing and maintaining transformation logic in SQL or Python, triaging broken DAGs, updating documentation nobody reads, and sitting in meetings explaining why the dashboard numbers don’t match. Sprinkled in, if they’re lucky, is some actual architecture work — designing systems, evaluating tools, thinking about scalability.

The uncomfortable truth is that the first four categories — the pipeline plumbing — make up the majority of time at most companies. And that is exactly the category that agentic systems are now targeting.

| Metric | Value | Source |
|---|---|---|
| US work hours estimated automatable in the next decade | ~30% | McKinsey, 2024 |
| Productivity gains reported by teams using AI-orchestrated automation | 10-100x | Coalesce, 2026 |
| Routine pipeline tasks now automatable by current-generation AI agents | 60%+ | Industry estimates |

What “agentic” actually means (and why it matters here)

The word “agentic” gets thrown around loosely enough that it’s worth pinning down. An AI agent isn’t just a chatbot that answers questions about your schema. It’s a system that can perceive a state, form a goal, take a sequence of actions, observe the results, and iterate — without being walked through each step by a human.

In practical data engineering terms, that looks like a system that notices a pipeline has started failing because an upstream API changed its response format, infers the correct schema update, rewrites the transformation, runs a validation suite, and either deploys the fix or escalates to a human — depending on confidence level. No ticket, no Slack ping at 2am, no postmortem slide deck.

That’s not science fiction in 2026. It’s what platforms like Maia (built on Matillion’s infrastructure) claim to do, and what the broader ecosystem — dbt Cloud’s AI features, Databricks’ AI/BI, Astronomer’s Astro — is converging on.

How an agentic pipeline loop works:

- Perceive: Agent monitors pipeline metadata, data quality metrics, schema registry, and upstream source signals continuously

- Decide: Using LLM-backed reasoning over data lineage and business context, it determines what action to take — fix, alert, or escalate

- Act: Executes the change within pre-defined guardrails (governance boundaries, cost limits, data sensitivity rules)

- Learn: Outcome is logged to experiential memory; future decisions become more accurate for similar patterns
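The "Learn" step is the least concrete of the four. One minimal way to picture it, assuming the agent can tag each incident with a failure-pattern key (the `ExperientialMemory` class and its methods are hypothetical, not any platform's API), is to log the outcome of every autonomous fix and blend the observed success rate into the model's raw confidence. The blend below treats the model's confidence as a prior worth a few observations, a simple pseudo-count scheme and only one of many possible choices.

```python
from collections import defaultdict


class ExperientialMemory:
    """Hypothetical outcome log keyed by failure pattern (illustrative only)."""

    def __init__(self, prior_weight: int = 5):
        # How many observations the model's own confidence is "worth"
        self.prior_weight = prior_weight
        self.attempts = defaultdict(int)
        self.successes = defaultdict(int)

    def record(self, pattern: str, fix_succeeded: bool) -> None:
        """Log the outcome of one autonomous fix for a failure pattern."""
        self.attempts[pattern] += 1
        if fix_succeeded:
            self.successes[pattern] += 1

    def adjusted_confidence(self, pattern: str, raw_confidence: float) -> float:
        """Blend the model's confidence with the observed success rate for this pattern."""
        n = self.attempts[pattern]
        wins = self.successes[pattern]
        return (raw_confidence * self.prior_weight + wins) / (self.prior_weight + n)


memory = ExperientialMemory()
for _ in range(5):
    memory.record("upstream_column_renamed", fix_succeeded=True)
for _ in range(3):
    memory.record("late_arriving_partition", fix_succeeded=False)

print(memory.adjusted_confidence("upstream_column_renamed", 0.80))  # rises toward 1.0
print(memory.adjusted_confidence("late_arriving_partition", 0.80))  # falls toward 0.0
print(memory.adjusted_confidence("never_seen_before", 0.80))        # stays at the model's estimate
```

With the same raw confidence of 0.80, a pattern whose fixes keep working climbs to 0.9, while one whose fixes keep failing drops to 0.5. That is the practical meaning of "future decisions become more accurate": the agent earns autonomy per pattern rather than globally.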

The pipeline that fixes itself

Self-healing pipelines are the clearest illustration of where this is headed. The basic pattern involves agents continuously monitoring data assets for freshness, completeness, logic drift, and anomalies — not just at build time, but perpetually. When something breaks, the agent doesn’t just surface an alert; it diagnoses the cause and attempts resolution.

Here’s a simplified version of what that orchestration code looks like:

```python
# Simplified agentic pipeline monitor
# Not production-ready -- illustrative only.
# `agents` and `tools` are hypothetical modules standing in for whatever
# agent framework and tool wrappers your platform provides.
import asyncio

from agents import PipelineAgent, MemoryStore, GovernanceGuardrails
from tools import SchemaInspector, DataQualityRunner, SlackNotifier

memory = MemoryStore(backend="vector_db")

guardrails = GovernanceGuardrails(
    max_cost_per_action=0.50,             # spend ceiling per autonomous action
    require_human_approval_above="HIGH",  # risk level that always needs sign-off
    data_sensitivity=["PII", "FINANCIAL"],
)

agent = PipelineAgent(
    name="pipeline-watchdog",
    tools=[SchemaInspector, DataQualityRunner, SlackNotifier],
    memory=memory,
    guardrails=guardrails,
    model="claude-sonnet-4",
)


async def monitor_loop(pipeline_id: str):
    while True:
        state = await agent.perceive(pipeline_id)

        if state.anomaly_detected:
            diagnosis = await agent.reason(
                context=state,
                instruction="Diagnose root cause and propose minimal fix.",
            )

            if diagnosis.confidence >= 0.85:
                # Agent acts within guardrails
                result = await agent.act(diagnosis.proposed_fix)
                await memory.log(state, diagnosis, result)
            else:
                # Escalate to a human with full context
                await SlackNotifier.escalate(
                    summary=diagnosis.explanation,
                    options=diagnosis.candidate_fixes,
                )

        await asyncio.sleep(300)  # Check every 5 minutes
```

The key line here isn’t the AI call. It’s the confidence threshold. At 0.85 or above, the agent acts; below that, a human gets a structured escalation with the diagnosis already done. That’s the actual design pattern: not “replace the engineer” but “compress the human’s required attention to the genuinely ambiguous cases that fall below the threshold.”

For teams managing hundreds of pipelines, that compression is enormous.
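Note that a 0.85 threshold does not mean 15% of incidents reach a human: the escalated share depends entirely on how the agent's confidence scores are distributed. A small sketch with made-up scores (the numbers are illustrative, not measurements) shows the same threshold producing very different workloads:

```python
def route_incidents(confidences, threshold=0.85):
    """Split confidence scores into auto-fixed and escalated incidents."""
    auto = [c for c in confidences if c >= threshold]
    escalated = [c for c in confidences if c < threshold]
    return auto, escalated


def report(label, confidences, threshold=0.85):
    """Print and return the fraction of incidents escalated to a human."""
    auto, escalated = route_incidents(confidences, threshold)
    share = len(escalated) / len(confidences)
    print(f"{label}: {len(auto)} auto-fixed, {len(escalated)} escalated ({share:.0%})")
    return share


# Hypothetical confidence scores for ten incidents in two different weeks
calm_week = [0.97, 0.92, 0.88, 0.91, 0.60, 0.95, 0.86, 0.72, 0.99, 0.90]
noisy_week = [0.80, 0.84, 0.90, 0.70, 0.65, 0.88, 0.83, 0.79, 0.95, 0.81]

report("calm week", calm_week)    # 8 auto-fixed, 2 escalated (20%)
report("noisy week", noisy_week)  # 3 auto-fixed, 7 escalated (70%)
```

The threshold sets the policy; the score distribution sets the on-call load. Tuning one without watching the other is how "self-healing" quietly turns back into paging.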

What’s actually getting automated — and what isn’t

It’s tempting to describe this as a binary: either AI takes over or it doesn’t. Reality is messier. Different parts of the data engineering workflow are at very different stages of automation maturity.

| Task | Traditional Approach | Agentic Approach |
|---|---|---|
| Schema drift handling | Manual detection, ticket, engineer fixes | Agent detects, proposes fix, applies if within guardrails |
| Pipeline incident triage | On-call engineer, runbook, Slack chaos | Agent diagnoses, self-heals or escalates with full context |
| Data quality validation | dbt tests, manual threshold tuning | Continuous anomaly detection; adaptive thresholds |
| Transformation authoring | Engineer writes SQL/Python from spec | Agent generates from business intent; engineer reviews |
| Data cataloging | Manually maintained, always stale | Agents auto-tag, enrich, and keep catalog current |
| System architecture design | Engineer + architect, whiteboard sessions | Still human: requires business judgment, trade-offs, org context |
| Governance and compliance | Manual audits, periodic reviews | Agents enforce policies in real time; humans set the policies |

Notice the last two rows. Architecture and governance are not disappearing into automation — they’re becoming the primary job. The work that requires contextual judgment, organizational understanding, and ethical accountability is the work that grows in proportion as routine execution shrinks.
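The first row of that table is a good example of what "applies if within guardrails" can mean in practice. A minimal sketch, assuming schemas are plain `{column: type}` dicts (real schema registries carry much more, such as nullability and defaults), classifies drift so that purely additive changes become candidates for auto-apply while dropped or retyped columns always escalate. The policy and all names here are assumptions for illustration, not any platform's documented behavior.

```python
from dataclasses import dataclass, field


@dataclass
class SchemaDrift:
    added: dict = field(default_factory=dict)
    removed: dict = field(default_factory=dict)
    retyped: dict = field(default_factory=dict)  # column -> (old_type, new_type)

    @property
    def is_breaking(self) -> bool:
        # Dropped or retyped columns can break downstream consumers
        return bool(self.removed or self.retyped)


def diff_schemas(expected: dict, observed: dict) -> SchemaDrift:
    """Compare the contracted schema with what the source is actually sending."""
    drift = SchemaDrift()
    for col, typ in observed.items():
        if col not in expected:
            drift.added[col] = typ
        elif expected[col] != typ:
            drift.retyped[col] = (expected[col], typ)
    for col, typ in expected.items():
        if col not in observed:
            drift.removed[col] = typ
    return drift


def decide(drift: SchemaDrift) -> str:
    if not (drift.added or drift.removed or drift.retyped):
        return "no_change"
    # Additive changes are auto-apply candidates; anything else escalates
    return "escalate" if drift.is_breaking else "auto_apply"


expected = {"order_id": "string", "amount": "decimal", "created_at": "timestamp"}

additive = {**expected, "currency": "string"}
print(decide(diff_schemas(expected, additive)))  # auto_apply

breaking = {"order_id": "string", "amount": "string"}  # retyped + dropped column
print(decide(diff_schemas(expected, breaking)))  # escalate
```

A real agent would layer the other guardrails on top (sensitivity, cost, confidence), but even this crude split captures why most schema-drift incidents are cheap to automate and a minority are not.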

The real-time shift nobody talks about

Agentic AI systems don’t just change how pipelines are maintained — they change what pipelines need to look like. Traditional batch ETL was designed around a world where humans reviewed outputs on a schedule. Agents need something closer to a live nervous system: real-time streaming infrastructure that agents can monitor, react to, and act on continuously.

This is driving a significant architectural shift. Kafka and Flink are no longer niche choices for “real-time use cases.” They’re becoming baseline infrastructure for any platform that wants to support agentic workflows. The data engineer who understands stream processing, event-driven architectures, and low-latency data contracts is going to find their skills dramatically more in demand — not less.

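```python
# Agent subscribes to quality events in real time
import asyncio
import json

from confluent_kafka import Consumer

config = {
    'bootstrap.servers': 'kafka:9092',
    'group.id': 'pipeline-agent-group',
    'auto.offset.reset': 'latest',
}


async def consume_events(agent_router):
    """Poll Kafka and hand each event to agent_router (a hypothetical dispatcher)."""
    consumer = Consumer(config)
    consumer.subscribe(['data.quality.events', 'schema.change.events'])
    try:
        while True:
            # poll() blocks, so run it in a worker thread to keep the event loop free
            msg = await asyncio.to_thread(consumer.poll, 1.0)
            if msg is None:
                continue
            if msg.error():
                print(f"Consumer error: {msg.error()}")
                continue
            event = json.loads(msg.value().decode('utf-8'))
            # Route to the appropriate agent based on event type
            await agent_router.dispatch(
                event_type=event['type'],
                payload=event['payload'],
                priority=event.get('severity', 'LOW'),
            )
    finally:
        consumer.close()

# Usage: asyncio.run(consume_events(agent_router))
```

“The engineer who understands stream processing and event-driven architectures is going to find their skills more in demand — not less.”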
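The consumer hands each event to `agent_router.dispatch(...)`, which the snippet leaves undefined. One minimal way to build it, assuming handlers are plain async functions registered per event type (the `AgentRouter` class, the handler names, and the `schema_change` event type are all hypothetical), is a dispatch table that falls back to human escalation for any event type no agent has claimed:

```python
import asyncio
from typing import Awaitable, Callable, Dict

# A handler receives the event payload and its priority, and returns a status string
Handler = Callable[[dict, str], Awaitable[str]]


class AgentRouter:
    """Hypothetical dispatch table mapping event types to async agent handlers."""

    def __init__(self, fallback: Handler):
        self._handlers: Dict[str, Handler] = {}
        self._fallback = fallback

    def register(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type] = handler

    async def dispatch(self, event_type: str, payload: dict, priority: str = "LOW") -> str:
        # Unknown event types go to the fallback (e.g. a human escalation queue)
        handler = self._handlers.get(event_type, self._fallback)
        return await handler(payload, priority)


async def schema_agent(payload: dict, priority: str) -> str:
    return f"schema-agent handled {payload['table']} at {priority}"


async def escalate_to_human(payload: dict, priority: str) -> str:
    return f"escalated {payload} at {priority}"


router = AgentRouter(fallback=escalate_to_human)
router.register("schema_change", schema_agent)

print(asyncio.run(router.dispatch("schema_change", {"table": "orders"}, "HIGH")))
print(asyncio.run(router.dispatch("unknown_type", {"table": "orders"})))
```

Defaulting unknown events to a human rather than dropping them is the conservative choice: a new event type showing up on the topic is itself a signal that something upstream changed.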

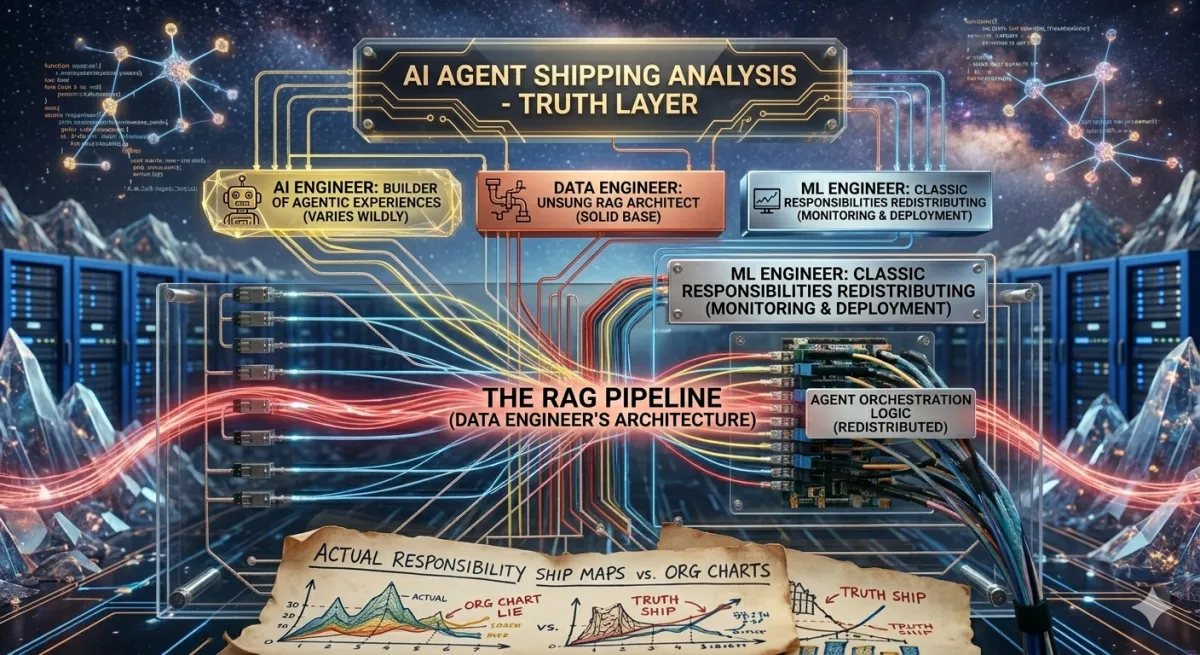

What LinkedIn figured out (and what it tells us)

LinkedIn’s approach to building agentic platforms is instructive because they’re not an AI startup — they’re a mature, large-scale platform that had to retrofit agentic capabilities onto existing distributed systems. Their architecture, described publicly in late 2025, is built around a stateless “agent life-cycle service” that coordinates agents, data sources, and applications — while keeping all state and memory outside the core service.

LinkedIn’s Distinguished Engineer Karthik Ramgopal noted that even when building agents, the underlying reality is still a user-facing application running on a large-scale distributed system. The insight: agents don’t replace distributed systems thinking — they add a reasoning layer on top of it.

That’s an important corrective to the narrative that agentic AI is some completely different paradigm. It isn’t. It’s messaging architectures with LLM brains attached. Engineers who understand the underlying distributed systems are still the ones who determine whether those brains work correctly at scale.
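The stateless life-cycle pattern is easier to picture with a toy version. A minimal sketch under stated assumptions: an in-memory dict stands in for an external store such as Redis or a database, every name is hypothetical, and concurrency control is ignored. It is not LinkedIn's implementation, only the general shape: each request loads the agent's state from the store, applies one step, and writes the state back, so the service process holds nothing between calls.

```python
import json
from typing import Dict, Optional


class InMemoryStore:
    """Stand-in for an external state store; the service itself keeps nothing."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)

    def put(self, key: str, value: str) -> None:
        self._data[key] = value


def handle_step(store, agent_id: str, observation: str) -> dict:
    """One stateless life-cycle step: load state, update it, persist it."""
    raw = store.get(agent_id)
    state = json.loads(raw) if raw else {"steps": 0, "history": []}
    state["steps"] += 1
    state["history"].append(observation)
    store.put(agent_id, json.dumps(state))  # all memory lives outside the service
    return state


store = InMemoryStore()
handle_step(store, "pipeline-watchdog", "schema change on orders")
# A second call, which could land on a different replica, sees the same state
state = handle_step(store, "pipeline-watchdog", "null spike on users")
print(state["steps"], state["history"])
```

Because `handle_step` touches no module-level state, scaling the service is a matter of running more copies against the same store, which is exactly the distributed-systems property the agent layer inherits rather than replaces.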

The governance gap nobody has solved yet

Here’s the part of the agentic AI story that tends to get buried in the excitement: autonomous agents making decisions about data create governance nightmares at a speed humans can’t match.

If an agent automatically applies a schema migration to a table that turns out to contain PII regulated under GDPR, and that migration changes how data is retained — who is accountable? The agent can’t be. The engineer who deployed it? The data steward who approved the governance policy? The platform team?

The EU AI Act, which entered into force in August 2024 and whose obligations phase in from 2025 onward (with most high-risk requirements scheduled to apply from August 2026), is going to force organizations to answer these questions concretely. High-risk AI systems, a category that could include automated data transformation at scale, require transparency, record-keeping (audit trails), and human oversight mechanisms. That is not a technical checkbox. It’s a design philosophy that has to be baked into agentic platforms from the start.

Governance checklist for agentic data platforms:

- Audit trails: Every autonomous action must be logged with the reasoning that produced it — not just what changed, but why the agent decided to act

- Confidence thresholds: Define explicit escalation boundaries. High-sensitivity data should always require human approval regardless of confidence score

- Rollback capability: Agent-applied changes must be reversible. Design for undo from day one

- Bias and drift detection: Agents trained on historical patterns can perpetuate bad data practices. Monitor for systematic errors, not just individual failures

- Regulatory mapping: Know which data assets fall under which regulations (GDPR, CCPA, EU AI Act) before agents are granted access to them
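Several of these checklist items can be enforced in code before an agent touches anything. A minimal sketch, with hypothetical names and a deliberately simple policy assumed for illustration: sensitive data always requires human approval regardless of confidence, an action with no rollback step never runs autonomously, and every decision, including the agent's stated reasoning, is emitted as an audit record.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical sensitivity labels that always require a human in the loop
SENSITIVE_LABELS = {"PII", "FINANCIAL"}


@dataclass
class ProposedAction:
    table: str
    sensitivity: str
    confidence: float
    reasoning: str                      # why the agent wants to act
    change_sql: str
    rollback_sql: Optional[str] = None  # the undo path, if one exists


@dataclass
class AuditRecord:
    timestamp: str
    decision: str
    table: str
    sensitivity: str
    confidence: float
    reasoning: str
    change_sql: str
    rollback_sql: Optional[str]


def govern(action: ProposedAction, threshold: float = 0.85) -> AuditRecord:
    """Apply the policy and return an audit record capturing what and why."""
    if action.sensitivity in SENSITIVE_LABELS:
        decision = "require_human_approval"  # regardless of confidence
    elif action.rollback_sql is None:
        decision = "require_human_approval"  # no undo path, no autonomy
    elif action.confidence >= threshold:
        decision = "auto_apply"
    else:
        decision = "escalate"
    return AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        decision=decision,
        **asdict(action),
    )


rename = ProposedAction(
    table="user_events", sensitivity="PII", confidence=0.97,
    reasoning="Upstream API renamed 'uid' to 'user_id'; downstream joins fail.",
    change_sql="ALTER TABLE user_events RENAME COLUMN uid TO user_id",
    rollback_sql="ALTER TABLE user_events RENAME COLUMN user_id TO uid",
)
reload = ProposedAction(
    table="orders_daily", sensitivity="INTERNAL", confidence=0.91,
    reasoning="Partition arrived late; the load job is idempotent.",
    change_sql="-- re-run load for the late partition",
    rollback_sql="-- drop the re-loaded partition",
)

for action in (rename, reload):
    # In practice, append these to an immutable audit log rather than printing
    print(json.dumps(asdict(govern(action))))
```

Even at 0.97 confidence, the PII rename waits for a human, while the lower-confidence but reversible, non-sensitive reload proceeds. Sensitivity and reversibility gate autonomy before confidence is even consulted.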

The data engineers who will be most valuable in a world of agentic platforms are the ones who can design these governance frameworks and who understand both the technical and the legal dimensions of automated data handling. That combination is genuinely rare today.

The skills that survive — and the ones that don’t

Let’s be direct about this, because vague optimism doesn’t help anyone planning a career.

Skills that are being compressed or automated: Writing boilerplate ingestion pipelines, debugging routine ETL failures, maintaining static data catalogs, basic SQL transformation authoring, and on-call triage for known failure modes. These are still worth knowing — they inform how you work with agentic systems — but they’re not going to be the primary job at organizations that have invested in modern platforms.

Skills that are expanding in value: Streaming architecture and event-driven system design. Agent orchestration — how you structure multi-agent workflows, define handoff protocols, and manage state. Data contract design, which becomes the interface between human intent and autonomous execution. Governance and compliance engineering. Semantic modeling, because agents need well-defined business context to make good decisions. And cost engineering, since agentic systems that call LLMs at every pipeline tick can generate surprisingly large bills.
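The cost point is easy to underestimate because it compounds with fleet size and polling frequency. A back-of-the-envelope sketch, where every number (pipeline count, polling interval, tokens per call, price per million tokens, anomaly rate) is a hypothetical placeholder rather than any vendor's actual pricing:

```python
def daily_llm_cost(pipelines: int, interval_minutes: int, tokens_per_call: int,
                   usd_per_million_tokens: float, llm_call_rate: float = 1.0) -> float:
    """Estimate daily LLM spend for a fleet of continuously monitored pipelines.

    llm_call_rate is the fraction of checks that actually invoke the model.
    """
    checks_per_day = pipelines * (24 * 60 // interval_minutes)
    tokens_per_day = checks_per_day * llm_call_rate * tokens_per_call
    return tokens_per_day * usd_per_million_tokens / 1_000_000


# Hypothetical fleet: 500 pipelines checked every 5 minutes,
# ~4,000 tokens per call, $5 per million tokens (placeholder price)
every_tick = daily_llm_cost(500, 5, 4_000, 5.0)
# Same fleet, but only the ~2% of checks that flag an anomaly call the model
anomalies_only = daily_llm_cost(500, 5, 4_000, 5.0, llm_call_rate=0.02)

print(f"LLM on every tick:     ${every_tick:,.2f}/day")
print(f"LLM on anomalies only: ${anomalies_only:,.2f}/day")
```

Under these assumptions, 144,000 checks a day cost about $2,880 if each one calls the model and about $58 if only flagged checks do, a 50x difference. That is the same choice the monitor loop earlier makes by calling `agent.reason` only when `state.anomaly_detected` is set.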

The meta-skill underneath all of these is something like systems thinking crossed with product thinking. The data engineer of the next few years is closer to a platform product manager than to a plumber — defining what the automated systems should do, where they need guardrails, and how the outputs serve the business.

```yaml
# contracts/user_events.yaml
# This is what agents read to understand data semantics
apiVersion: "v2"
kind: DataContract
metadata:
  name: "user_events"
  owner: "platform-team@company.com"
  sensitivity: "PII"  # Triggers stricter agent guardrails
  regulation: ["GDPR", "CCPA"]
schema:
  fields:
    - name: "user_id"
      type: "string"
      pii: true
      description: "Pseudonymised user identifier"
    - name: "event_type"
      type: "string"
      allowed_values: ["click", "view", "purchase"]
    - name: "timestamp"
      type: "timestamp_tz"
      freshness_sla: "5 minutes"
quality:
  completeness_threshold: 0.99
  max_null_rate: 0.01
  # Agents auto-enforce these; violations trigger escalation
agent_permissions:
  read: true
  schema_migration: "require_human_approval"
  deletion: "blocked"
```

Data contracts like this one become the boundary layer between human governance and autonomous execution. The engineer’s job shifts from writing the transformation to writing the contract that constrains how AI can transform it. Subtle difference. Profound implications.
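To make "agents auto-enforce these" concrete, here is a minimal sketch that checks a batch of records against the contract's `quality` thresholds and `allowed_values`, and maps agent actions onto `agent_permissions`. It assumes the YAML has already been parsed into a dict (in practice with a YAML parser such as PyYAML's `yaml.safe_load`); the enforcement functions are hypothetical and not part of any contract standard.

```python
# The contract above, as it might look after parsing the YAML into a dict
CONTRACT = {
    "schema": {"fields": [
        {"name": "user_id", "type": "string", "pii": True},
        {"name": "event_type", "type": "string",
         "allowed_values": ["click", "view", "purchase"]},
        {"name": "timestamp", "type": "timestamp_tz"},
    ]},
    "quality": {"completeness_threshold": 0.99, "max_null_rate": 0.01},
    "agent_permissions": {"read": True,
                          "schema_migration": "require_human_approval",
                          "deletion": "blocked"},
}


def check_batch(contract: dict, rows: list) -> list:
    """Return human-readable contract violations for a batch of records."""
    fields = contract["schema"]["fields"]
    quality = contract["quality"]
    n = len(rows)
    if n == 0:
        return []
    violations = []

    # Completeness: share of rows where every contracted field is non-null
    complete = sum(all(row.get(col["name"]) is not None for col in fields) for row in rows)
    if complete / n < quality["completeness_threshold"]:
        violations.append(f"completeness {complete / n:.1%} below "
                          f"{quality['completeness_threshold']:.0%}")

    for col in fields:
        name = col["name"]
        null_rate = sum(row.get(name) is None for row in rows) / n
        if null_rate > quality["max_null_rate"]:
            violations.append(f"{name}: null rate {null_rate:.1%} above "
                              f"{quality['max_null_rate']:.0%}")
        allowed = col.get("allowed_values")
        if allowed:
            unexpected = {row[name] for row in rows
                          if row.get(name) is not None and row[name] not in allowed}
            if unexpected:
                violations.append(f"{name}: unexpected values {sorted(unexpected)}")
    return violations


def permission_for(contract: dict, action: str) -> str:
    """Map an agent action to allowed / require_human_approval / blocked (deny by default)."""
    value = contract["agent_permissions"].get(action, "blocked")
    if value is True:
        return "allowed"
    return value if isinstance(value, str) else "blocked"


rows = [
    {"user_id": "u1", "event_type": "click", "timestamp": "2026-04-01T10:00:00Z"},
    {"user_id": None, "event_type": "view", "timestamp": "2026-04-01T10:01:00Z"},
    {"user_id": "u3", "event_type": "refund", "timestamp": "2026-04-01T10:02:00Z"},
]
for violation in check_batch(CONTRACT, rows):
    print("VIOLATION:", violation)
print("deletion:", permission_for(CONTRACT, "deletion"))
```

The deny-by-default lookup in `permission_for` matters as much as the quality checks: any action the contract author did not anticipate is treated as blocked rather than silently allowed.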

The honest assessment

Is this the last generation of data engineers in the sense that the role becomes extinct? No. The demand for people who can reason about data systems, build trustworthy platforms, and translate between business needs and technical execution isn’t going away.

But is this the last generation of data engineers in the sense that the current job description — the one where the primary skill is building and fixing pipelines by hand — describes a shrinking fraction of the actual work? Almost certainly yes.

The teams seeing 10-100x productivity gains aren’t writing more code. They’re designing better automation chains and then getting out of the way. The engineers who adapt to that shift — who move from being the person who runs the pipeline to being the person who designs the system that runs the pipeline — will be more valuable than ever.

Those who wait for the job to feel the way it used to will increasingly find it feels like maintenance work on a system designed for a different era.

“The teams seeing 10-100x productivity gains aren’t writing more code. They’re designing better automation chains and then getting out of the way.”

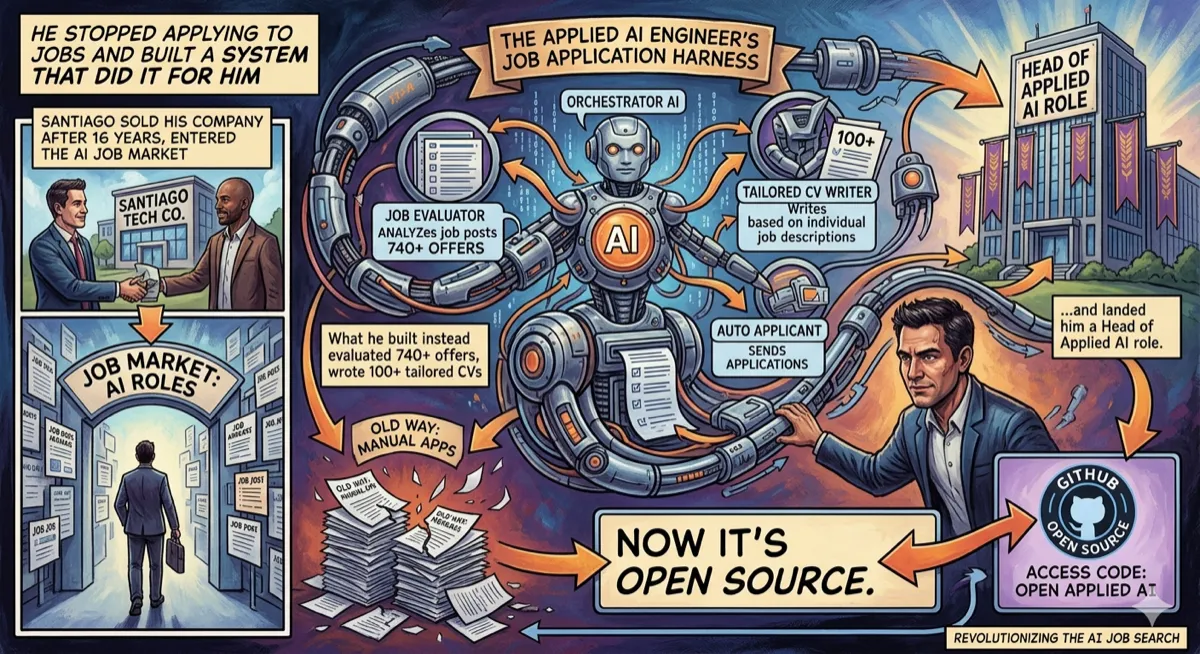

What to do if you’re a data engineer reading this

A few concrete directions worth investing time in, regardless of where the technology lands:

Learn how agents actually work — not just prompt engineering, but agent memory, tool use, multi-agent coordination, and the failure modes that come with each. The LangChain, LlamaIndex, and CrewAI ecosystems are good starting points, as is Anthropic’s documentation on building reliable agents.

Get serious about streaming — Apache Kafka, Apache Flink, or at minimum a solid understanding of event-driven architectures. This is the infrastructure that agentic platforms run on. It’s not glamorous, but it’s foundational.

Develop a governance vocabulary — understand GDPR, the EU AI Act, data contracts, and what “auditability” actually requires technically. Organizations are going to need engineers who can speak to regulators and to architects simultaneously.

Build something with an agentic loop — not a demo, but something that runs continuously, observes a real system, and takes autonomous action within defined limits. The gap between theoretical understanding and operational intuition about where agents break is only closeable through practice.

The transition is already underway. The organizations that have invested in agentic data platforms are running with meaningfully smaller data engineering teams than they had two years ago — and shipping faster. That data is going to land on every CTO’s desk eventually.

The question isn’t whether to adapt. It’s whether to get ahead of the wave or get sorted by it.

Recommended reading

If you’re thinking seriously about how data systems, distributed architectures, and automation patterns intersect, Fundamentals of Data Engineering by Joe Reis and Matt Housley is the most comprehensive foundation for understanding the full data lifecycle that agents are now automating.

Sources: The 30% automation estimate references McKinsey Global Institute research published in 2024 on generative AI and work automation. The 10-100x productivity figure is cited from Coalesce’s 2026 Data Trends report. LinkedIn’s agentic architecture details are drawn from publicly reported coverage in InfoWorld (September 2025). The EU AI Act timeline reflects the regulation’s entry into force in August 2024 and its phased application from 2025 onward. Maia/Matillion platform capabilities are referenced from their publicly available product documentation (March 2026). All code examples are original and illustrative.

No financial, career, or technical advice is implied. Readers should evaluate their own situations independently. The author has no commercial relationship with any platform or vendor mentioned.
