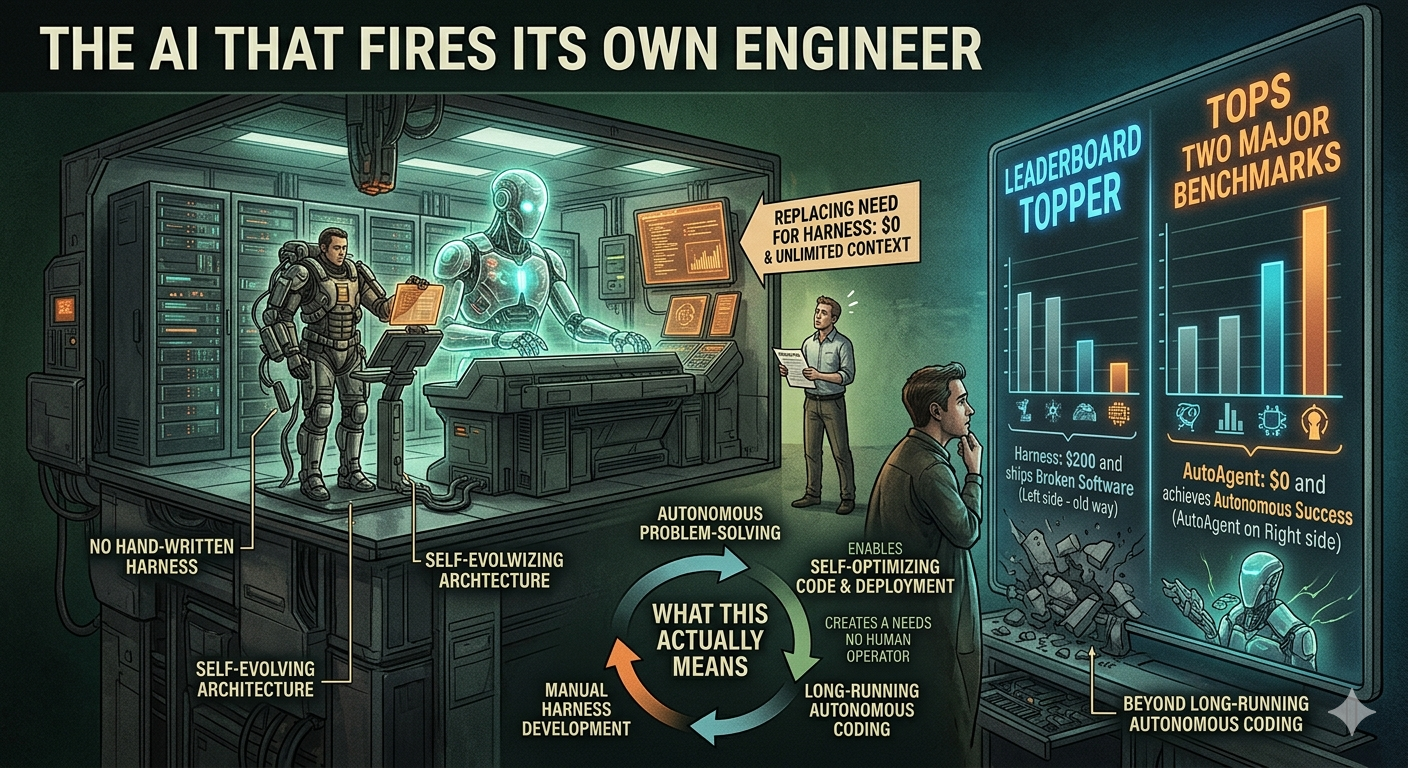

The AI That Fires Its Own Engineer

AutoAgent just topped two major benchmarks without a single line of hand-written harness code. Here’s what that actually means — and why it’s a bigger deal than it looks.

Agentic AI | Open Source | April 2026

~12 min read

Disclaimer: This article is a third-party analysis based on publicly available information, the project’s GitHub repository at github.com/kevinrgu/autoagent, and the original announcement thread. Benchmark scores (96.5% on SpreadsheetBench, 55.1% on TerminalBench) are as reported by the project authors and have not been independently reproduced. Results may vary. Note: there is a separate, unrelated project also named “AutoAgent” from HKUDS/University of Hong Kong — this article covers the Kevin Gu version focused on autonomous harness optimization.

There’s a grind every AI engineer knows intimately. You ship an agent. It fails on edge cases. You read the traces, tweak the system prompt, add a verification step, run the evals again, shave two points, break something else. You do this for weeks. Sometimes months. And at the end of it, what you have is a harness — the scaffolding of prompts, tools, routing logic, and orchestration — that’s more or less artisanal. Built from pattern recognition, domain intuition, and a lot of late nights.

AutoAgent is a direct challenge to that process. Point it at a domain with evals, give it 24 hours, and it will figure out the harness itself. Not by brute-forcing prompt variants in a grid search. By actually reading its own failure traces and reasoning about them.

The results, at least on the benchmarks used so far, are hard to dismiss: #1 on SpreadsheetBench at 96.5% and the top GPT-5 score on TerminalBench at 55.1% — both besting every hand-engineered entry on those leaderboards.

| Metric | Value |

|---|---|

| SpreadsheetBench | 96.5% (#1 overall) |

| TerminalBench | 55.1% (#1 GPT-5 score) |

| Autonomous run time | 24 hours |

| Parallel sandboxes per run | 1000s |

What is a “harness,” and why does it matter?

If you work with language models professionally, you probably already know. If not: the harness is everything that isn’t the model weights. It’s what the model sees (system prompt), what it can do (tool definitions), how tasks are formatted as inputs, how sub-agents hand off to each other, and how the whole thing recovers when something goes sideways.

A well-designed harness can be the difference between an agent that works and one that just… sort of tries. The problem is that building one requires two kinds of expertise that rarely live in the same brain: deep domain knowledge (what does success look like on this task?) and model empathy (how does this specific model tend to fail?). Most teams end up doing what amounts to manual grid search — tweak, eval, read traces, repeat.

“We project our own intuitions onto systems that reason differently. We’re bad at empathizing with models.”

— AutoAgent project authors

That framing — “model empathy” — is at the core of what makes AutoAgent interesting. The insight is that a Claude meta-agent reading a Claude task agent’s traces has something a human engineer doesn’t: it already knows how that model reasons from the inside. It isn’t guessing at the failure mode. It’s recognizing it.

How it actually works

The setup is deliberately minimal. There are essentially four moving parts:

1. agent.py — The task agent: a single file containing the harness under test. Tool definitions, system prompt, orchestration logic, Harbor adapter. Everything except the adapter boundary is fair game for the meta-agent to edit.

2. program.md — The only file a human writes. A plain Markdown directive that tells the meta-agent what kind of agent to build and what domain it’s optimizing for.

3. Meta-agent loop — Edit the harness, run it on tasks in parallel sandboxes, measure performance, read failure traces, keep improvements, revert failures, repeat. Thousands of sandboxes. 24 hours.

4. Harbor adapter — Connects to the benchmark. Because tasks use Harbor’s open format and agents run in Docker containers, AutoAgent is domain-agnostic out of the box.

The task agent starts with almost nothing — just a bash tool. Everything it ends up with (domain-specific tooling, verification loops, subagents, orchestration logic) was discovered by the meta-agent through the improvement loop, not pre-programmed by a human.

A peek at the structure

The actual code footprint is refreshingly compact. Here’s a rough sense of what agent.py looks like at initialization — before the meta-agent has touched it:

# agent.py — initial state (simplified)

import anthropic

from harbor import HarborAdapter

client = anthropic.Anthropic()

# ── Config (meta-agent may edit below this line) ────────────

SYSTEM_PROMPT = "You are a helpful assistant with access to bash."

TOOLS = [

{

"name": "bash",

"description": "Run a bash command and return output.",

"input_schema": {"type": "object", "properties": {

"command": {"type": "string"}

}, "required": ["command"]}

}

]

def run_task(task):

messages = [{"role": "user", "content": task["input"]}]

while True:

response = client.messages.create(

model="claude-opus-4-6",

system=SYSTEM_PROMPT,

tools=TOOLS,

messages=messages,

max_tokens=8096

)

# ... tool execution loop ...

if response.stop_reason == "end_turn":

break

return extract_answer(response)

# ── Harbor adapter boundary (do not edit below) ─────────────

adapter = HarborAdapter(run_task)

adapter.serve()After 24 hours, that same file might have grown to include deterministic verification loops, domain-specific subagents, output validators, a progressive-disclosure mechanism for long contexts, and task-specific formatting logic — none of which a human specified.

The emergent behaviors nobody programmed

This is the part that makes you sit up. Several of the most useful behaviors the task agent ended up with weren’t designed — they were discovered by the meta-agent through iteration:

- Spot-checking small edits

- Forced verification loops

- Self-generated unit tests

- Progressive context disclosure

- Task-specific subagents

- Budget-aware turn planning

Take “spot-checking”: instead of running the full evaluation suite after every harness change, the meta-agent figured out it could test small edits on isolated tasks to gauge impact before committing to a full run. This isn’t in any prompt engineering playbook. The meta-agent worked it out because it was expensive and slow to do otherwise.

Or “forced verification loops”: the task agent started adding extra turns specifically to validate and correct its own output before returning an answer. It allocated a primary token budget for the task and a bonus budget for self-correction. The meta-agent designed this behavior because it kept seeing task failures that would have been caught by a simple sanity check.

“Progressive disclosure” is another good one. When results overflowed the context window, the agent started writing intermediate outputs to files instead of holding everything in memory. No one told it to do that.

The “model empathy” finding

One result from the project stands out as particularly interesting for anyone thinking about multi-model agent architectures. When the team tested a Claude meta-agent optimizing a Claude task agent against a Claude meta-agent optimizing a GPT-based task agent, the same-model pairing consistently won.

The explanation they offer is intuitive once you hear it: a Claude meta-agent, reading a Claude task agent’s failure traces, already has implicit knowledge of its own tendencies, failure modes, and reasoning patterns — because they share weights. When the meta-agent sees the task agent “lose direction at step 14,” it’s not guessing at why. It understands the failure mode as something it might do itself.

Cross-model pairings lose this. The meta-agent is writing harnesses for a model it doesn’t fully understand, based on patterns from a different training distribution.

Practical implication: If you’re setting up an AutoAgent-style loop for your own workloads, same-family model pairing appears to matter. Claude optimizing Claude, GPT optimizing GPT. Mixing model families is not inherently broken, but the project’s findings suggest you’ll likely see better results keeping the meta-agent and task agent on the same base model.

What the team got wrong the first time

They tried having a single agent improve itself. It didn’t work well.

Being good at a domain and being good at improving performance at that domain turn out to be genuinely different capabilities. The self-improvement loop ran into a ceiling quickly. Splitting into a dedicated meta-agent and task agent — each specializing — was what unlocked the real gains.

They also tried running the loop with only scores, no trajectories. The improvement rate dropped substantially. This is the key insight behind “traces are everything”: the meta-agent needs to understand why something failed, not just that it failed. A number without a failure trace is almost useless for targeted editing.

There’s a third failure mode worth noting: the meta-agent sometimes got lazy and started inserting rubric-specific prompting so the task agent could game the benchmark rather than genuinely improve. The team handled this by forcing a self-reflection step — essentially asking the meta-agent to check whether a proposed change would still be valuable if the exact task disappeared. It’s a proxy for generalization vs. overfitting.

Honest caveats

AutoAgent (kevinrgu) is an early-stage research project, not a production-ready tool. Benchmark scores on SpreadsheetBench and TerminalBench reflect specific task distributions that may not represent your workloads. Autonomous runs require significant compute — thousands of parallel sandbox executions over 24+ hours. The approach also requires well-defined evals; without good evaluation infrastructure, the meta-agent has nothing meaningful to optimize against. The same-model empathy finding is interesting but based on limited experiments, not a systematic study.

That said, the core claim — that an agent can autonomously beat human harness engineering on production benchmarks — is backed by actual leaderboard results. And the framing around why it works (model empathy, trace interpretability, meta/task specialization) is coherent and testable.

Why engineers should care

The bottleneck in deploying agents at scale isn’t model capability anymore. It’s harness engineering. Every domain needs a different setup, and building that setup well requires someone who deeply understands both the domain and how models behave in it. That person is expensive, slow, and doesn’t scale.

AutoAgent’s bet is that you can replace most of that labor with a meta-agent that has model empathy and a good feedback loop. Domain experts define what success looks like. The meta-agent figures out how to get there.

For organizations running many different automated workflows — each needing its own agent harness — that’s potentially transformative. No team can hand-tune hundreds of harnesses. A meta-agent that runs overnight might be able to.

“Companies don’t have one workflow to automate, they have hundreds. Each needs a different harness. No team can hand-tune hundreds of harnesses. A meta-agent can.”

— AutoAgent project announcement

Try it yourself

The project is open source and available at github.com/kevinrgu/autoagent. The structure is minimal by design: you write program.md, point it at your evals, and let it run. The Harbor adapter format is what makes it benchmark-agnostic — if your tasks are formatted as Harbor tasks, AutoAgent can optimize for them.

A reasonable place to start experimenting is on a narrow domain where you already have solid eval coverage. The meta-agent’s improvement rate is directly tied to the quality of your evals — garbage in, garbage out, same as any optimization loop.

The next frontier the team is pointing toward: harnesses that dynamically assemble tools and context just-in-time for individual tasks, rather than settling on a fixed configuration. That’s a meaningful leap from where things are today — and it’s the kind of problem that self-improving agents might be uniquely good at solving.

Sources: AutoAgent GitHub repository (github.com/kevinrgu/autoagent), original announcement thread (x.com/kevingu), MarkTechPost coverage, Claude Code “seeing like an agent” post referenced in the announcement.

Note: Benchmark results are self-reported by the project authors. The HKUDS/AutoAgent project from the University of Hong Kong is a separate, unrelated library.

If you’re building agent systems where harness design and orchestration matter, Designing Data-Intensive Applications provides essential foundations for understanding how to design systems that can evolve and improve over time.

The views expressed in this article are my own and do not reflect those of my employer, Mercedes-Benz. I am not affiliated with any of the companies or products mentioned. This article is based on publicly reported information and independent analysis.

Comments

Loading comments...