The Claude Certified Architect Is Here — And It’s Unlike Any AI Certification Before It

Anthropic just launched its first official technical credential — a proctored, architecture-level exam that separates people who use AI from people who build production systems with it. Here’s everything you need to know and why the timing matters.

Anthropic | Certification | Career | March 2026 ~9 min read

The engineer who never certified — until now

Brij kishore Pandey has 16 years of engineering experience and no certifications. Not AWS. Not Azure. Not Google Cloud. Not PMP. Not one. He learned by building — by breaking things, shipping things, and figuring it out the hard way. He shared this publicly on LinkedIn earlier this week.

Then he said he’s going for the Claude Certified Architect. And he’s not alone.

Within 72 hours of Anthropic announcing the credential on March 12, 2026, LinkedIn threads were running hundreds of comments. Engineers who had never seriously considered a certification were reconsidering. Senior professionals from Accenture, Deloitte, and Infosys were publicly committing to it. Someone who had just passed the exam wrote: “The depth on agentic architecture, MCP tool integration, and multi-agent orchestration is no joke. This isn’t a watch-a-tutorial-and-pass certification.”

Something different is happening here. This article explains what exactly that is — what the Claude Certified Architect tests, why it matters right now, who should take it, and how to actually prepare for it.

What it is — and what it isn’t

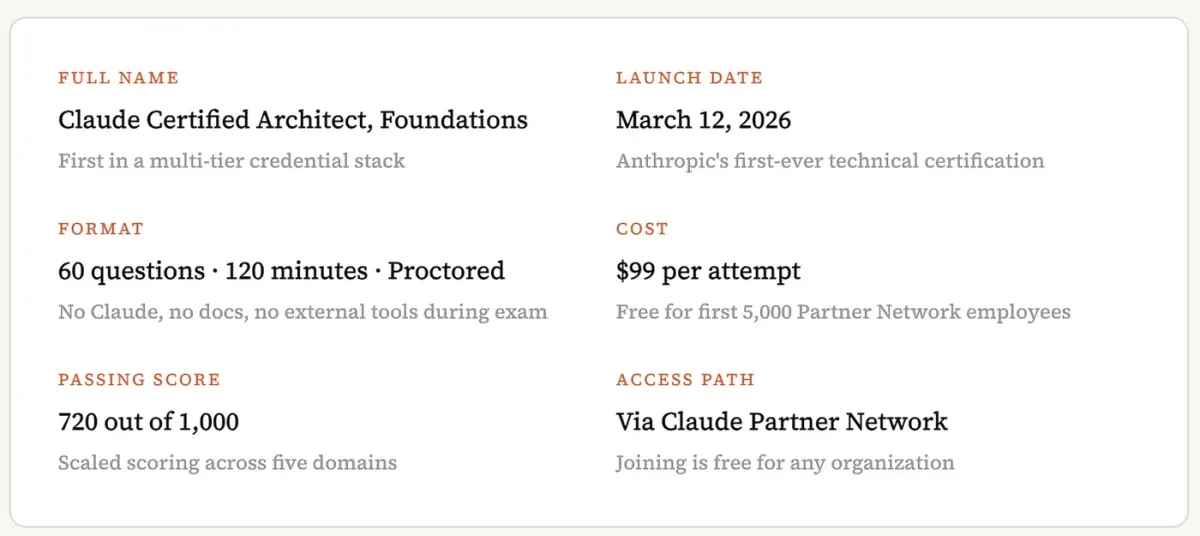

The credential is formally named the Claude Certified Architect, Foundations — the CCA. It launched March 12, 2026 as Anthropic’s first official technical certification and the entry point to a credential stack that will expand through 2026.

| Detail | Value |
|---|---|
| Full name | Claude Certified Architect, Foundations |
| Launch date | March 12, 2026 |
| Format | 60 questions, 120 minutes, proctored |
| Cost | $99 per attempt (free for first 5,000 Partner Network employees) |
| Passing score | 720 out of 1,000 (scaled across five domains) |
| Access path | Via Claude Partner Network (free for any organization to join) |

The most important thing to understand about this exam is what it is testing. It is not testing whether someone knows how to use Claude. It is not a prompting literacy badge. It does not care whether the candidate has written 10,000 prompts or zero.

It tests whether someone can architect a production-grade Claude application — design the agent loops, choose the right tool boundaries, manage context across a multi-agent system, decide when to use structured output vs. natural language, configure CI/CD pipelines for Claude Code, and handle failure gracefully when an agent misbehaves mid-task. These are systems design questions. And the exam tests them the way a technical interview at a serious engineering company would — with realistic production scenarios, tradeoffs, and wrong answers that are plausible enough to trap someone who only half understands the concept.

The exam structure

The 60 questions are anchored to 6 scenario contexts: Customer Support Resolution Agent, Code Generation with Claude Code, Multi-Agent Research System, Developer Productivity with Claude, Claude Code for CI/CD, and Structured Data Extraction. On any given exam sitting, 4 of the 6 scenarios are randomly selected. Every question is framed inside one of those production systems — candidates answer as the architect of that specific system, not in the abstract. Study all 6 scenarios. Any 4 can appear.
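To see why skipping a scenario is a gamble, here is a small sketch that enumerates every possible draw of 4 scenarios from the 6. It assumes every combination is equally likely, which the exam description implies ("randomly selected") but does not spell out.

```python
from fractions import Fraction
from itertools import combinations

SCENARIOS = [
    "Customer Support Resolution Agent",
    "Code Generation with Claude Code",
    "Multi-Agent Research System",
    "Developer Productivity with Claude",
    "Claude Code for CI/CD",
    "Structured Data Extraction",
]

# Every possible set of 4 scenarios that a sitting could draw from the 6.
draws = list(combinations(SCENARIOS, 4))


def appearance_probability(scenario: str) -> Fraction:
    """Chance that a scenario shows up in a sitting, assuming uniform draws."""
    hits = sum(1 for draw in draws if scenario in draw)
    return Fraction(hits, len(draws))


print(len(draws))                                       # 15
print(appearance_probability("Claude Code for CI/CD"))  # 2/3
```

Under that assumption, any scenario you skip still has a two-in-three chance of appearing on your exam.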

The five domains — broken down honestly

The exam covers five domains. Here’s what each one actually tests — including the traps that catch engineers who understand the concept but haven’t thought through the production implications.

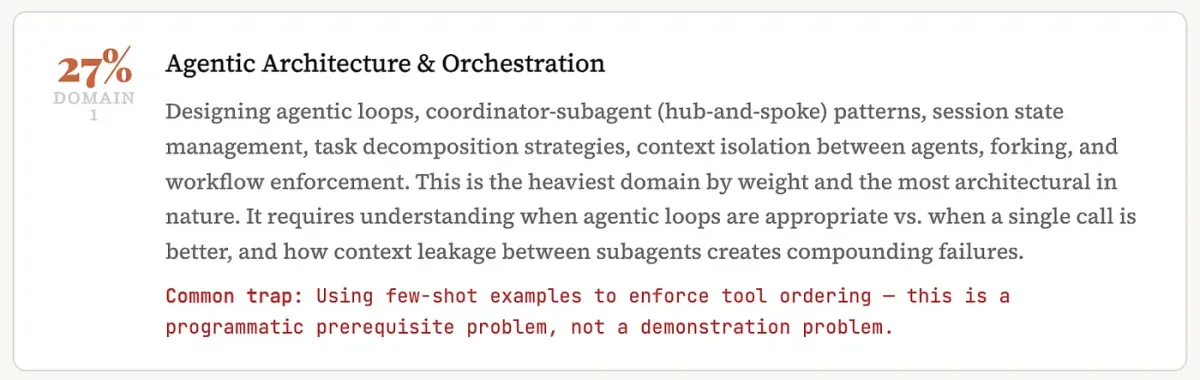

Domain 1: Agentic Architecture and Orchestration (27%)

Designing agentic loops, coordinator-subagent (hub-and-spoke) patterns, session state management, task decomposition strategies, context isolation between agents, forking, and workflow enforcement. This is the heaviest domain by weight and the most architectural in nature. It requires understanding when agentic loops are appropriate vs. when a single call is better, and how context leakage between subagents creates compounding failures.

Common trap: Using few-shot examples to enforce tool ordering — this is a programmatic prerequisite problem, not a demonstration problem.
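A minimal sketch of what a programmatic prerequisite can look like, assuming a hypothetical support agent with three tools. The tool names, the `ToolGate` class, and the ordering rules are illustrative only and are not taken from the exam or from any Anthropic SDK.

```python
class PrerequisiteError(Exception):
    """Raised when a tool is requested before its prerequisites have run."""


# Hypothetical ordering rules: each tool maps to the tools that must finish first.
PREREQUISITES = {
    "verify_identity": set(),
    "lookup_order": {"verify_identity"},
    "issue_refund": {"verify_identity", "lookup_order"},
}


class ToolGate:
    """Tracks completed tool calls and rejects out-of-order requests in code."""

    def __init__(self, prerequisites):
        self.prerequisites = prerequisites
        self.completed = set()

    def run(self, tool_name, handler, **kwargs):
        missing = self.prerequisites.get(tool_name, set()) - self.completed
        if missing:
            # The check lives here, not in the prompt, so the model cannot skip it.
            raise PrerequisiteError(
                f"{tool_name} blocked; run first: {sorted(missing)}"
            )
        result = handler(**kwargs)
        self.completed.add(tool_name)
        return result


gate = ToolGate(PREREQUISITES)
try:
    gate.run("issue_refund", lambda: "refunded")
except PrerequisiteError as exc:
    print(exc)  # issue_refund blocked; run first: ['lookup_order', 'verify_identity']
```

However the model phrases its request, the refund handler never executes until the earlier steps have completed. That is the difference between a guarantee and a demonstration.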

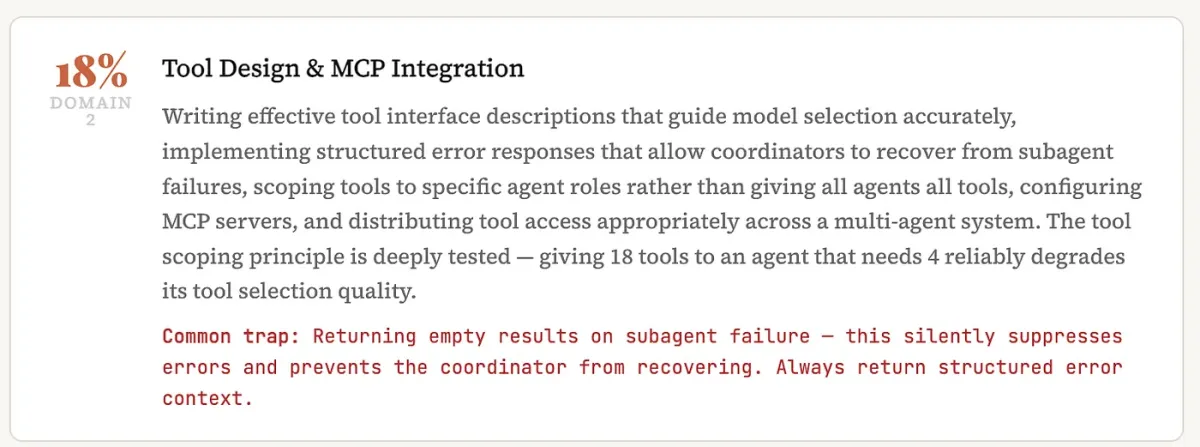

Domain 2: Tool Design and MCP Integration (18%)

Writing effective tool interface descriptions that guide model selection accurately, implementing structured error responses that allow coordinators to recover from subagent failures, scoping tools to specific agent roles rather than giving all agents all tools, configuring MCP servers, and distributing tool access appropriately across a multi-agent system. The tool scoping principle is deeply tested — giving 18 tools to an agent that needs 4 reliably degrades its tool selection quality.
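As a rough illustration of tool scoping, the sketch below keeps one registry of tools and hands each agent role only its allowlisted subset. The tool names, role names, and the `tools_for` helper are all hypothetical.

```python
from typing import Dict

# Hypothetical registry: tool name -> description shown to the model.
ALL_TOOLS = {
    "search_web": "Search the public web for sources.",
    "fetch_page": "Download a page and return its text.",
    "summarize": "Condense a document into key points.",
    "write_file": "Write content to the workspace.",
    "run_tests": "Execute the project's test suite.",
    "send_email": "Send an email to a customer.",
}

# Each role sees only the tools its job requires.
ROLE_ALLOWLIST = {
    "researcher": ["search_web", "fetch_page", "summarize"],
    "coder": ["write_file", "run_tests"],
}


def tools_for(role: str) -> Dict[str, str]:
    """Return the scoped tool set for a role; unknown roles get no tools."""
    allowed = ROLE_ALLOWLIST.get(role, [])
    return {name: ALL_TOOLS[name] for name in allowed}


print(sorted(tools_for("researcher")))  # ['fetch_page', 'search_web', 'summarize']
```

Defaulting unknown roles to an empty tool set is a deliberate choice in this sketch: a misconfigured agent fails closed rather than inheriting everything.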

Common trap: Returning empty results on subagent failure — this silently suppresses errors and prevents the coordinator from recovering. Always return structured error context.
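One way to picture structured error context, as a sketch under assumptions: every subagent returns the same envelope whether it succeeds or fails, and the coordinator decides what to do based on the fields. The `SubagentResult` shape and the retry policy are illustrative, not an official pattern.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class SubagentResult:
    """Envelope every subagent returns, whether it succeeded or failed."""
    ok: bool
    data: Any = None
    error_type: Optional[str] = None
    message: Optional[str] = None
    retryable: bool = False


def run_subagent(task: Callable[[], Any]) -> SubagentResult:
    """Run a subagent task and convert exceptions into structured errors."""
    try:
        return SubagentResult(ok=True, data=task())
    except TimeoutError as exc:
        # Transient failure: tell the coordinator that a retry may help.
        return SubagentResult(False, error_type="timeout",
                              message=str(exc), retryable=True)
    except Exception as exc:
        # Unknown failure: surface it instead of returning an empty result.
        return SubagentResult(False, error_type=type(exc).__name__,
                              message=str(exc))


def coordinate(task: Callable[[], Any], max_attempts: int = 2) -> SubagentResult:
    """Retry only when the subagent reports the failure as retryable."""
    result = run_subagent(task)
    attempts = 1
    while not result.ok and result.retryable and attempts < max_attempts:
        result = run_subagent(task)
        attempts += 1
    return result
```

An empty list would look identical to "the search found nothing." With the envelope, the coordinator can tell a timeout from a bad input and react differently to each.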

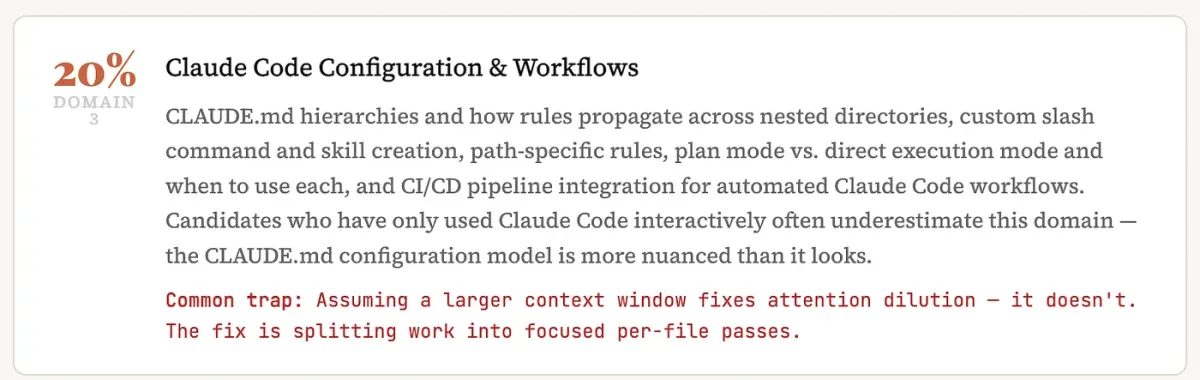

Domain 3: Claude Code Configuration and Workflows (20%)

CLAUDE.md hierarchies and how rules propagate across nested directories, custom slash command and skill creation, path-specific rules, plan mode vs. direct execution mode and when to use each, and CI/CD pipeline integration for automated Claude Code workflows. Candidates who have only used Claude Code interactively often underestimate this domain — the CLAUDE.md configuration model is more nuanced than it looks.

Common trap: Assuming a larger context window fixes attention dilution — it doesn’t. The fix is splitting work into focused per-file passes.
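The per-file idea can be sketched in a few lines. Here `review_one` stands in for whatever model call does the review; the `todo_reviewer` stub below is a placeholder so the example runs without any API access.

```python
from typing import Callable, Dict, List


def review_per_file(
    files: Dict[str, str],
    review_one: Callable[[str, str], List[str]],
) -> Dict[str, List[str]]:
    """Run one focused review pass per file instead of one pass over everything."""
    findings = {}
    for path, source in files.items():
        # Each call sees a single file, so attention is not spread across the repo.
        findings[path] = review_one(path, source)
    return findings


def todo_reviewer(path: str, source: str) -> List[str]:
    """Stand-in for a model call: flags lines that contain TODO."""
    return [
        f"{path}:{number}: unresolved TODO"
        for number, line in enumerate(source.splitlines(), start=1)
        if "TODO" in line
    ]


files = {
    "app.py": "import os\n# TODO: handle errors\n",
    "util.py": "def helper():\n    return 1\n",
}
print(review_per_file(files, todo_reviewer))
# {'app.py': ['app.py:2: unresolved TODO'], 'util.py': []}
```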

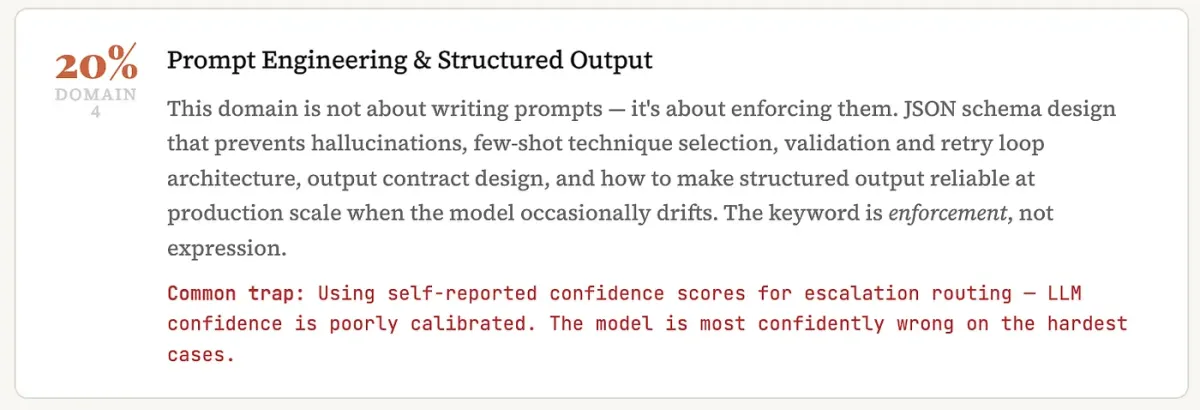

Domain 4: Prompt Engineering and Structured Output (20%)

This domain is not about writing prompts — it’s about enforcing them. JSON schema design that prevents hallucinations, few-shot technique selection, validation and retry loop architecture, output contract design, and how to make structured output reliable at production scale when the model occasionally drifts. The keyword is enforcement, not expression.
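A minimal sketch of a validation and retry loop, using only the standard library. The field names, the `generate` callable (a placeholder for a model call that accepts the previous validation error as feedback), and the attempt limit are assumptions made for illustration. A real system might use a full JSON Schema validator instead of the hand-rolled checks here.

```python
import json
from typing import Callable, Optional, Tuple

# Hypothetical output contract for an invoice extraction task.
REQUIRED_FIELDS = {"invoice_id": str, "total": (int, float), "currency": str}


def validate(raw: str) -> Tuple[Optional[dict], Optional[str]]:
    """Return (payload, None) when valid, or (None, reason) when not."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, f"invalid JSON: {exc.msg}"
    if not isinstance(payload, dict):
        return None, "top-level value must be an object"
    for name, expected in REQUIRED_FIELDS.items():
        if name not in payload:
            return None, f"missing field: {name}"
        if not isinstance(payload[name], expected):
            return None, f"wrong type for field: {name}"
    return payload, None


def extract_with_retry(
    generate: Callable[[str, Optional[str]], str],
    prompt: str,
    max_attempts: int = 3,
) -> dict:
    """Call the generator until its output passes validation or attempts run out."""
    feedback = None
    for _ in range(max_attempts):
        raw = generate(prompt, feedback)
        payload, feedback = validate(raw)
        if payload is not None:
            return payload
    # Fail loudly so the caller can escalate instead of using bad data.
    raise ValueError(f"no valid output after {max_attempts} attempts: {feedback}")
```

The prompt can still ask for valid JSON, but the contract is enforced by `validate`, and the loop has a hard stop so a drifting model cannot stall the pipeline.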

Common trap: Using self-reported confidence scores for escalation routing — LLM confidence is poorly calibrated. The model is most confidently wrong on the hardest cases.
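One alternative to self-reported confidence is to escalate on signals the system can observe directly. The sketch below is illustrative: the signal names and thresholds are invented, and a real deployment would tune them to its own policies.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CaseSignals:
    """Observable facts about a case; none of them come from the model's self-assessment."""
    refund_amount: float
    failed_tool_calls: int
    validation_retries: int
    customer_requested_human: bool


def escalation_reasons(
    signals: CaseSignals,
    max_refund: float = 200.0,         # hypothetical approval limit
    max_failed_tool_calls: int = 2,    # hypothetical tolerance
    max_validation_retries: int = 2,   # hypothetical tolerance
) -> List[str]:
    """Return every rule that fired; an empty list means no escalation."""
    reasons = []
    if signals.customer_requested_human:
        reasons.append("customer asked for a human")
    if signals.refund_amount > max_refund:
        reasons.append("refund above approval limit")
    if signals.failed_tool_calls > max_failed_tool_calls:
        reasons.append("too many failed tool calls")
    if signals.validation_retries > max_validation_retries:
        reasons.append("output failed validation repeatedly")
    return reasons


case = CaseSignals(refund_amount=450.0, failed_tool_calls=0,
                   validation_retries=0, customer_requested_human=False)
print(escalation_reasons(case))  # ['refund above approval limit']
```

Because each rule is deterministic, the same case always routes the same way, and the returned reasons give the human reviewer an audit trail.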

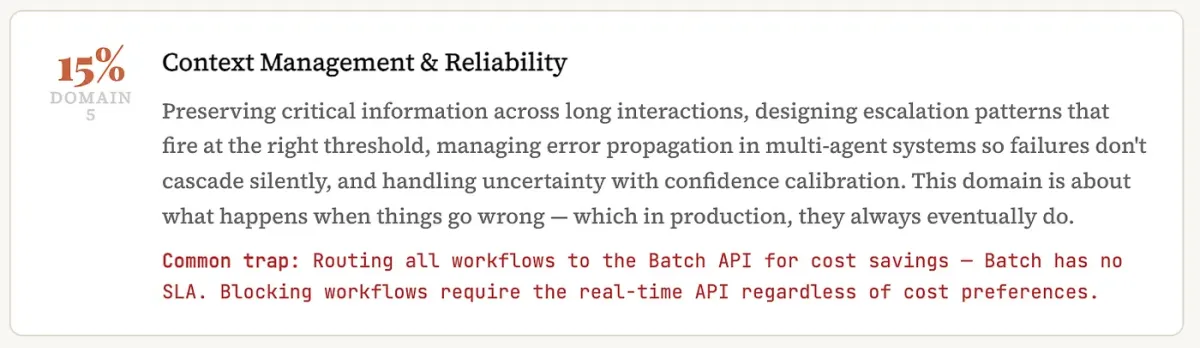

Domain 5: Context Management and Reliability (15%)

Preserving critical information across long interactions, designing escalation patterns that fire at the right threshold, managing error propagation in multi-agent systems so failures don’t cascade silently, and handling uncertainty with confidence calibration. This domain is about what happens when things go wrong — which in production, they always eventually do.

Common trap: Routing all workflows to the Batch API for cost savings — Batch has no SLA. Blocking workflows require the real-time API regardless of cost preferences.
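The routing decision can be written as a small rule, sketched here under assumptions. The `Workload` fields are hypothetical, and the 24-hour batch window is an assumed worst-case turnaround that should be checked against the current Batch API documentation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Route(Enum):
    REALTIME = "realtime"
    BATCH = "batch"


@dataclass
class Workload:
    name: str
    blocks_user: bool                  # is a person or a downstream step waiting?
    deadline_seconds: Optional[float]  # None means no deadline


# Assumed worst-case batch turnaround; verify against the current docs.
BATCH_WINDOW_SECONDS = 24 * 60 * 60


def choose_route(work: Workload) -> Route:
    """Send blocking or deadline-bound work to the real-time API, the rest to batch."""
    if work.blocks_user:
        return Route.REALTIME
    if work.deadline_seconds is not None and work.deadline_seconds < BATCH_WINDOW_SECONDS:
        return Route.REALTIME
    return Route.BATCH


print(choose_route(Workload("pre-merge review", True, None)).value)        # realtime
print(choose_route(Workload("nightly doc summaries", False, None)).value)  # batch
```

Cost only enters the decision after latency requirements are satisfied, which is the ordering the exam trap is testing.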

Nearly half the exam (47%) sits in Domains 1 and 3: agentic architecture and Claude Code configuration. The single most tested concept across the entire exam is programmatic enforcement vs. prompt-based guidance. When a system behavior needs to be guaranteed, the exam consistently rewards the programmatic solution over the “add it to the prompt” solution. Build your mental model around that distinction and a significant portion of the exam becomes clearer.
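For study planning, the domain weights can be turned into rough question counts. This sketch assumes the weights map proportionally onto the 60 questions, which may not hold exactly if the percentages describe scoring rather than question count.

```python
DOMAIN_WEIGHTS = {
    "Agentic Architecture and Orchestration": 27,
    "Tool Design and MCP Integration": 18,
    "Claude Code Configuration and Workflows": 20,
    "Prompt Engineering and Structured Output": 20,
    "Context Management and Reliability": 15,
}
TOTAL_QUESTIONS = 60
TOTAL_MINUTES = 120


def approx_questions(weight_percent: int) -> float:
    """Estimated question count for a domain, assuming proportional allocation."""
    return TOTAL_QUESTIONS * weight_percent / 100


# The five weights should account for the whole exam.
assert sum(DOMAIN_WEIGHTS.values()) == 100

for name, weight in DOMAIN_WEIGHTS.items():
    print(f"{name}: {weight}% (about {approx_questions(weight):.1f} questions)")

combined = (DOMAIN_WEIGHTS["Agentic Architecture and Orchestration"]
            + DOMAIN_WEIGHTS["Claude Code Configuration and Workflows"])
print(f"Domains 1 and 3 combined: {combined}%")                            # 47%
print(f"Minutes per question: {TOTAL_MINUTES / TOTAL_QUESTIONS:.0f}")      # 2
```

On that estimate, Domain 1 alone is roughly 16 questions, and the overall pace is two minutes per question.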

Why this certification landed differently

The AI certification space is crowded and mostly useless. There are dozens of “AI Practitioner” badges that can be earned in an afternoon by watching a recorded lecture. Hiring managers have been visibly ignoring them for years. The candidates who pad their resumes with these credentials are not fooling anyone who knows the field.

The CCA is different for three structural reasons.

It’s proctored. No Claude, no documentation, no external tools, no browser tabs. Sixty questions in 120 minutes under live supervision. There is no tutorial-grinding path to pass this exam. The knowledge has to be real and internalized. This is the same reason AWS certifications carry weight — the proctored format makes them resistant to credential inflation.

It tests architecture, not trivia. The questions are grounded in production scenarios. Each one presents a realistic system design problem and asks the candidate to make the right architectural call. Wrong answers are designed to be plausible — they represent the kinds of mistakes engineers actually make when they know the concepts but haven’t thought through the production implications. The exam is measuring judgment, not recall.

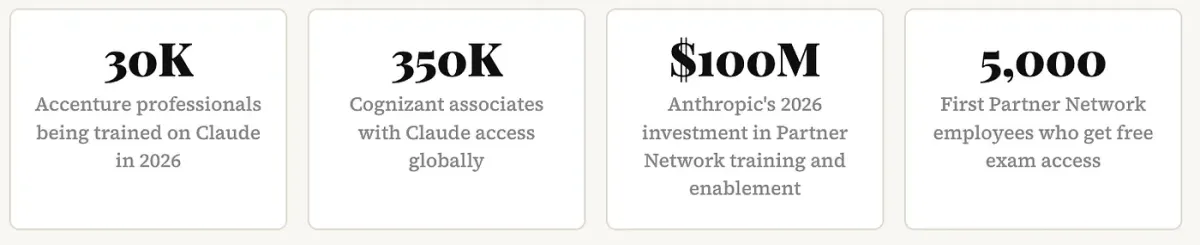

The enterprise signal is real.

| Metric | Value |
|---|---|
| Accenture professionals being trained on Claude in 2026 | 30,000 |
| Cognizant associates with Claude access globally | 350,000 |
| Anthropic’s 2026 investment in Partner Network training | $100M |
| First Partner Network employees who get free exam access | 5,000 |

At that scale of enterprise adoption, the CCA credential is on a trajectory to become the baseline expectation for Claude-focused delivery roles at major consulting firms — not a differentiator, a floor. That transition happens faster than most people expect.

“The CCA launched three days ago. Engineers who certify now establish a credibility baseline before the credential becomes table stakes. The first-mover window is real — but it closes faster than people think.”

Who should actually take this

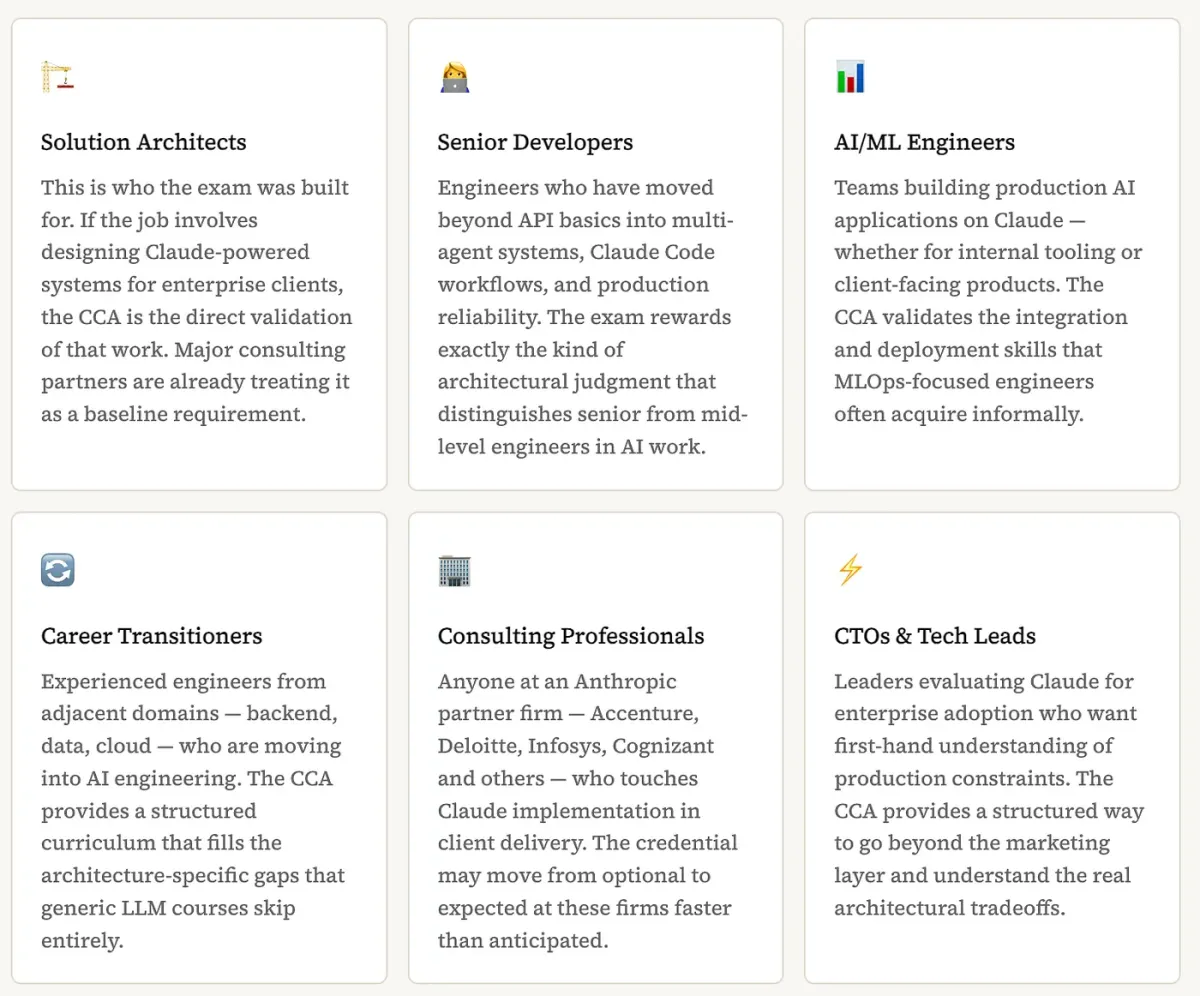

Solution Architects — This is who the exam was built for. If the job involves designing Claude-powered systems for enterprise clients, the CCA is the direct validation of that work. Major consulting partners are already treating it as a baseline requirement.

Senior Developers — Engineers who have moved beyond API basics into multi-agent systems, Claude Code workflows, and production reliability. The exam rewards exactly the kind of architectural judgment that distinguishes senior from mid-level engineers in AI work.

AI/ML Engineers — Teams building production AI applications on Claude — whether for internal tooling or client-facing products. The CCA validates the integration and deployment skills that MLOps-focused engineers often acquire informally.

Career Transitioners — Experienced engineers from adjacent domains — backend, data, cloud — who are moving into AI engineering. The CCA provides a structured curriculum that fills the architecture-specific gaps that generic LLM courses skip entirely.

Consulting Professionals — Anyone at an Anthropic partner firm — Accenture, Deloitte, Infosys, Cognizant and others — who touches Claude implementation in client delivery. The credential may move from optional to expected at these firms faster than anticipated.

CTOs and Tech Leads — Leaders evaluating Claude for enterprise adoption who want first-hand understanding of production constraints. The CCA provides a structured way to go beyond the marketing layer and understand the real architectural tradeoffs.

Who should wait

The official candidate profile is someone with at least 6 months of hands-on production experience with the Claude API, Claude Code, and MCP. If the current experience level is mostly Claude.ai chat usage and basic API calls, the free Anthropic Academy courses will be more valuable than the exam right now. Build with the platform first — the certification validates real experience, it doesn’t create it.

Myths vs. reality

| Myth | Reality |
|---|---|
| “The prep courses are enough. Watch all 13 and you’ll pass.” | The courses build foundational knowledge. The exam tests architectural judgment under production scenario pressure. Candidates who only watch courses fail. Hands-on building is the differentiator. |
| “It’s just a vendor certification. Employers won’t care.” | AWS certs were “just vendor certs” in 2013. By 2016 they were required for cloud roles. The CCA’s enterprise backing, including Anthropic’s $100M partner investment, is the same structural play. |
| “The prep is free so it must be easy.” | The learning materials are free. The exam is proctored with no external resources. A passing score of 720/1,000 with scenario-anchored production questions makes this a genuinely rigorous credential. |
| “I’ll wait until more certifications are available before I start.” | The Foundations tier is the prerequisite for advanced tiers. Partners who certify now get priority access to new credentials as they roll out. Waiting means starting behind candidates who moved in week one. |

How to prepare: the full study path

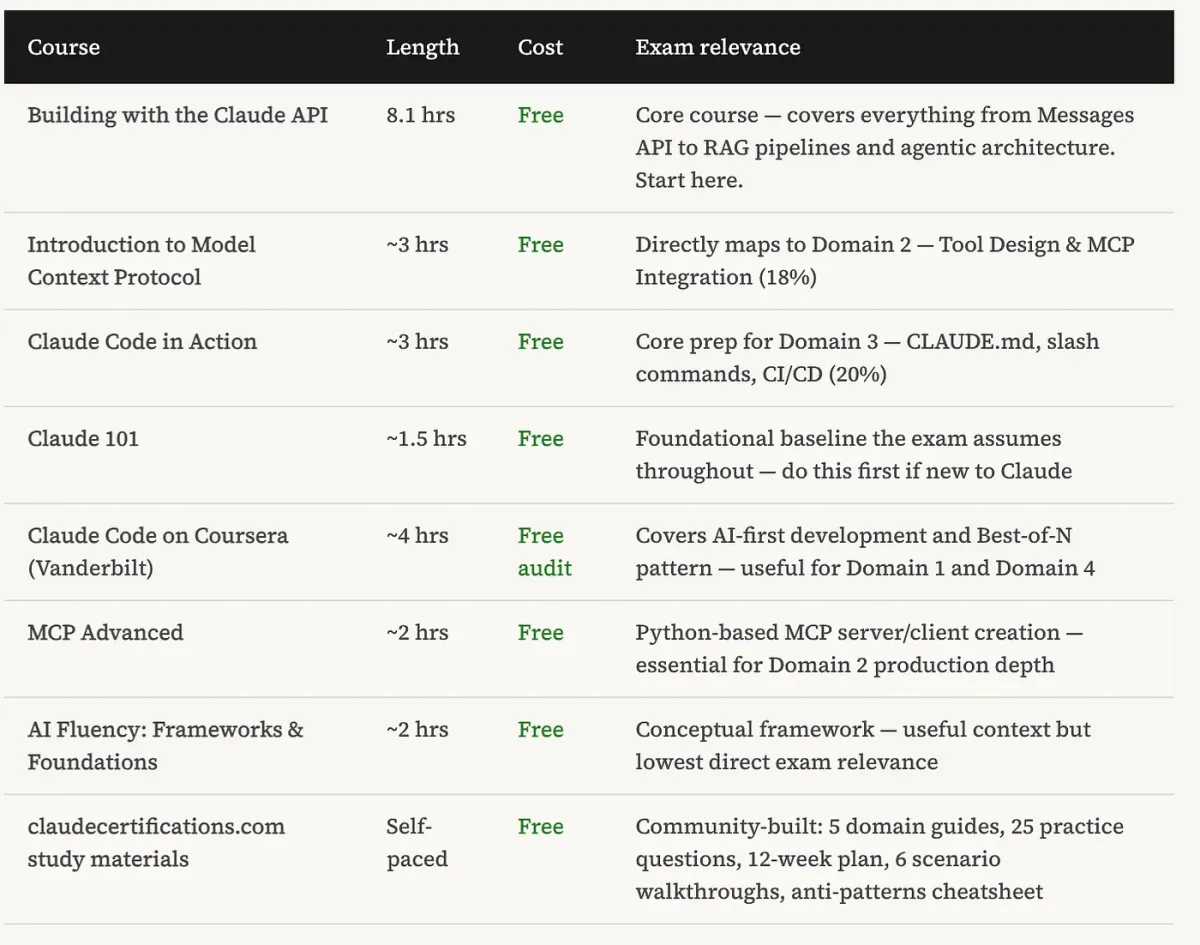

Anthropic Academy launched on March 2, 2026 — ten days before the exam — with 13 free self-paced courses hosted on Skilljar. No subscription, no paywall. These are the official preparation materials and they’re genuinely comprehensive.

| Course | Length | Cost | Exam Relevance |
|---|---|---|---|
| Building with the Claude API | 8.1 hrs | Free | Core course: covers everything from Messages API to RAG pipelines and agentic architecture. Start here. |
| Introduction to Model Context Protocol | ~3 hrs | Free | Directly maps to Domain 2: Tool Design and MCP Integration (18%) |
| Claude Code in Action | ~3 hrs | Free | Core prep for Domain 3: CLAUDE.md, slash commands, CI/CD (20%) |
| Claude 101 | ~1.5 hrs | Free | Foundational baseline the exam assumes throughout; do this first if new to Claude |
| Claude Code on Coursera (Vanderbilt) | ~4 hrs | Free audit | Covers AI-first development and Best-of-N pattern; useful for Domain 1 and Domain 4 |
| MCP Advanced | ~2 hrs | Free | Python-based MCP server/client creation; essential for Domain 2 production depth |
| AI Fluency: Frameworks and Foundations | ~2 hrs | Free | Conceptual framework; useful context but lowest direct exam relevance |
| claudecertifications.com study materials | Self-paced | Free | Community-built: 5 domain guides, 25 practice questions, 12-week plan, 6 scenario walkthroughs, anti-patterns cheatsheet |

Recommended study sequence

Week 1-2: Claude 101 + AI Fluency (baseline knowledge)

Week 3-5: Building with the Claude API in full (8.1 hours — the core material)

Week 6-7: Intro to MCP + MCP Advanced (Domain 2)

Week 8-9: Claude Code in Action + Coursera module (Domain 3)

Week 10-11: Practice questions + scenario walkthroughs on all 6 exam scenarios

Week 12: Anti-patterns review — study the common traps, not more new material

The anti-patterns cheatsheet worth memorizing

Community members who have sat the exam identified a consistent pattern: many wrong answers are not random — they represent architectural anti-patterns that engineers commonly reach for before understanding the production implications. Knowing what not to do is half the exam.

ANTI-PATTERN 1: Prompt-based ordering enforcement

Wrong: "Add few-shot examples to enforce tool call ordering"

Right: Use programmatic prerequisites -- ordering is a compliance constraint, not a style preference

ANTI-PATTERN 2: Self-reported confidence for escalation

Wrong: "Route to human when model reports low confidence"

Right: Use deterministic threshold logic -- LLM confidence is poorly calibrated and fails on the hardest cases

ANTI-PATTERN 3: Batch API for blocking workflows

Wrong: "Route all workflows to Batch API to reduce costs"

Right: Batch has no SLA -- blocking user-facing workflows require the real-time API regardless of cost

ANTI-PATTERN 4: Larger context window = better attention

Wrong: "Increase context window to reduce attention dilution"

Right: Split into focused per-file passes -- window size does not solve attention distribution problems

ANTI-PATTERN 5: Silent failure on subagent error

Wrong: "Return empty results when a subagent fails"

Right: Return structured error context -- silent failures prevent the coordinator from recovering correctly

ANTI-PATTERN 6: All tools to all agents

Wrong: "Give every agent access to the full tool set"

Right: Scope tools to each agent's role -- 18 tools degrades selection reliability vs 4-5 focused tools

ANTI-PATTERN 7: Prompt-only output enforcement

Wrong: "Instruct the model to always return valid JSON"

Right: Use JSON schema validation + retry loop -- prompts alone cannot guarantee output contract compliance

The bigger picture: what this credential stack becomes

The CCA Foundations is explicitly labeled as the first tier of a multi-level program. Anthropic has confirmed additional certifications for sellers, advanced architects, and developers are planned for later in 2026. Partners who hold the Foundations credential get priority access to each new tier as it launches.

The trajectory here parallels what AWS did with cloud certifications between 2013 and 2018. The credentials started as optional differentiators, became preferred qualifications on job postings, and then quietly became required qualifications at major firms and on government contracts. That process took about five years for AWS. Given the pace of enterprise AI adoption in 2026, and the $100M partner investment behind this program, the Claude credential stack is likely to compress that timeline significantly.

For individuals: the first-mover advantage on a credential that will become widespread is real and time-limited. The engineers who certify in March 2026 will have two years of demonstrated commitment to the platform by the time the credential is table stakes. That signal compounds in ways that certifying in 2028 simply cannot replicate.

For organizations: requiring CCA certification from architects working on Claude implementations is now a concrete way to separate practitioners from people who have read the documentation. For CTOs evaluating vendor partners or building internal Claude competency, the credential provides an objective baseline that previously required a custom technical assessment to establish.

The bottom line

The exam is live. The prep materials are free. The first-mover window is open.

Register via Anthropic Academy

It’s not that the credential guarantees competence — no exam does that. It’s that the preparation process for the exam forces architects to work through the exact problems that production Claude systems fail on: agent loop design, context isolation, structured output enforcement, tool scoping, failure recovery. The engineers who go through that preparation come out better at their actual jobs, not just better at the exam. The credential is the evidence of the learning. The learning is the thing that matters.

At $99 for the exam and zero for the preparation materials, it is the most accessible serious AI architecture credential that has ever existed. The window where taking it early means something distinct will close. It’s open right now.

If you want to build the foundational systems knowledge that production AI architecture demands — distributed systems, data pipelines, fault tolerance, and the engineering patterns that make reliable infrastructure work — Designing Data-Intensive Applications by Martin Kleppmann covers the principles that directly underpin what the CCA tests.

Disclaimer: This article is based on Anthropic’s official CCA announcement, Anthropic Academy materials, and community reports from exam takers as of March 2026. The author has no affiliation with Anthropic beyond being a user of Claude and a CCA candidate. Exam content, structure, and pricing may change. Enterprise partnership figures (Accenture 30,000, Cognizant 350,000) are from publicly reported announcements. The anti-patterns listed are from community analysis and may not represent every exam sitting. This article contains affiliate links — purchasing through them supports this blog at no extra cost to you.
