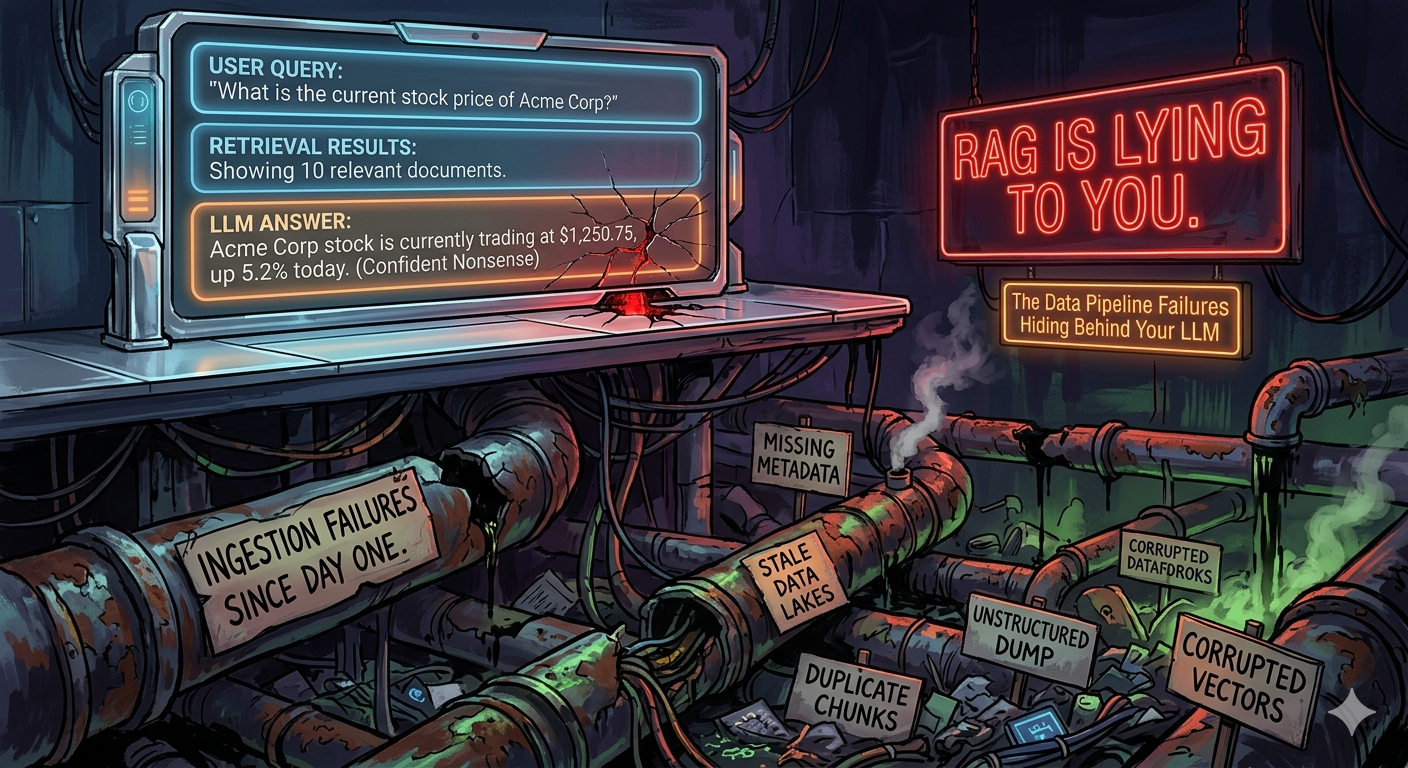

RAG Is Lying to You: The Data Pipeline Failures Hiding Behind Your LLM

Your retrieval returns results. Your LLM generates an answer. Your users get confident nonsense. This isn’t an AI problem — it’s a data engineering problem, and it’s been there since ingestion day one.

Data Engineering | RAG Production Failures | Chunking | Embedding Drift | Debugging | April 2026 ~16 min read

The failures that return results and say nothing

A RAG system that is failing in production will rarely tell you it’s failing. The vector search returns results. The similarity scores look fine. The LLM produces fluent, confident prose. And somewhere inside that prose is a policy from 18 months ago, a document chunk that lost its heading when you split it, or a vector that was computed against a slightly different preprocessing pipeline than the one running today.

The uncomfortable truth, backed by multiple independent studies, is that the majority of production RAG failures trace back to the ingestion pipeline — not the LLM, not the retrieval algorithm, not the prompt. A 2025 CDC policy RAG study found 80% of RAG failures trace back to chunking decisions alone. A peer-reviewed study from Vectara presented at NAACL 2025 found that chunking configuration had as much or more influence on retrieval quality as the choice of embedding model.

Data engineers who have built reliable pipelines for decades are in a better position to fix this than most ML practitioners. The failure modes are not exotic. They are stale data, broken pipelines, schema drift, inconsistent backfills, and missing observability. RAG is a data engineering problem wearing an AI costume.

| Metric | Value | Source |
|---|---|---|
| RAG failures traced to chunking decisions | 80% | 2025 CDC policy study |
| Recall gap between best and worst chunking on same corpus | 9% | Chroma evaluation |
| Recall after embedding drift (silent, no alerts) | 0.74 (down from 0.92) | Production observation |

This article covers five failure modes in depth, with concrete diagnostics and fixes. There’s no fluff about “prompt engineering” here. This is about the pipeline.

The failure map

| # | Layer | Failure Mode | Severity |
|---|---|---|---|
| 01 | Ingestion | Chunking destroys semantic context: answers are hallucinated because the retrieved chunk is semantically orphaned | CRITICAL |
| 02 | Embeddings | Embedding drift: mixed model versions or preprocessing changes silently degrade recall over months | CRITICAL |
| 03 | Metadata | Metadata staleness: filters return outdated or deleted documents; freshness is not enforced | CRITICAL |
| 04 | Operations | Re-indexing economics: naive full-corpus reindexing blocks the pipeline, explodes costs, misses SLAs | HIGH |
| 05 | Debugging | Ingestion vs retrieval confusion: engineers blame the LLM when the failure is 3 steps upstream | HIGH |

Failure 01: Chunking destroys the context your retriever needs

Fixed-size chunking at 512 tokens is the default in almost every RAG tutorial. It is also the single most reliable way to ensure your retriever surfaces chunks that contain the right words but lack the semantic context to answer the question.

The failure is structural. When you split at a fixed token boundary, you routinely separate a question from its answer, a claim from its supporting evidence, a table heading from its data, a procedural step from its prerequisite. The chunk retrieves cleanly — high cosine similarity — but the LLM gets half the context it needs. It fills the gap by hallucinating.

The benchmark evidence here is sharper than most practitioners realise. Chroma’s evaluation found semantic chunking produced 91.9% recall. Sounds great. FloTorch’s test on the same technique found 54% end-to-end accuracy — 15 points behind recursive splitting. The difference: FloTorch’s semantic chunks averaged 43 tokens, which retrieved well in isolation but gave the LLM too little context to actually answer questions. High retrieval recall, wrong answer. A chunk that retrieves cleanly but answers badly is still a broken chunk.

Semantic chunking scored 91.9% recall but 54% end-to-end accuracy. The chunks retrieved cleanly and answered nothing. Retrieval-only benchmarks lie to you.

The Vectara NAACL 2025 peer-reviewed study (arXiv:2410.13070), which tested 25 chunking configurations with 48 embedding models, found that on realistic document sets, fixed-size chunking consistently outperformed semantic chunking. The most common mistake is defaulting to semantic chunking because it sounds more principled.

What actually works

Start with recursive character splitting at 512 tokens with 10–20% overlap, then measure. The overlap is non-negotiable — even 50–100 tokens of overlap recovers context that would otherwise be lost at boundaries. Microsoft Azure’s guidance recommends 25% overlap (128 tokens) as a conservative production starting point.
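
To make the boundary effect concrete, here is a minimal sketch of fixed-size windows with and without overlap. `window_chunks` is a hypothetical helper, not a library function, and whitespace splitting stands in for a real tokenizer; the sizes are tiny so the result is easy to read.

```python
def window_chunks(tokens: list[str], size: int, overlap: int) -> list[list[str]]:
    """Split tokens into fixed-size windows that share `overlap` tokens."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reaches the end of the text
    return chunks


# Whitespace "tokens" are an assumption made for readability only
tokens = "Refunds are available within 30 days if the item is unused".split()

no_overlap = window_chunks(tokens, size=6, overlap=0)
with_overlap = window_chunks(tokens, size=6, overlap=2)

for chunk in with_overlap:
    print(" ".join(chunk))
```

Without overlap, the time limit ("within 30 days") and its condition ("if the item is unused") land in different chunks. With two tokens of overlap, the middle chunk carries "30 days if the item is", so the limit and the start of its condition are retrievable together.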

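For documents with meaningful structure (legal contracts, technical manuals, API documentation), hierarchical chunking that splits along headings and sections preserves semantic units better than any fixed-size approach. Whichever strategy you pick, attach provenance to every chunk. The recursive baseline below does that: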

    # Requires the langchain-text-splitters and tiktoken packages
    from langchain_text_splitters import RecursiveCharacterTextSplitter
    import hashlib

    def chunk_document(
        text: str,
        doc_id: str,
        schema_version: str = "v3",
        chunk_size: int = 512,
        overlap: int = 102,  # ~20% of 512
        embedding_model: str = "text-embedding-3-large",
    ) -> list[dict]:
        """
        Chunk with provenance. Every chunk carries the metadata
        needed to detect drift and perform selective reindexing later.
        """
        # Measure chunk_size and overlap in tokens. Passing length_function=len
        # to the plain constructor would count characters instead.
        splitter = RecursiveCharacterTextSplitter.from_tiktoken_encoder(
            encoding_name="cl100k_base",
            chunk_size=chunk_size,
            chunk_overlap=overlap,
            # Prefer splitting at semantically meaningful boundaries
            separators=["\n\n", "\n", ". ", " ", ""],
        )
        raw_chunks = splitter.split_text(text)

        chunks = []
        for i, chunk_text in enumerate(raw_chunks):
            # Hash the chunk text + config: detects preprocessing drift
            pipeline_hash = hashlib.sha256(
                f"{chunk_text}|{chunk_size}|{overlap}|{schema_version}".encode()
            ).hexdigest()[:16]

            chunks.append({
                "text": chunk_text,
                "doc_id": doc_id,
                "chunk_index": i,
                "total_chunks": len(raw_chunks),
                "schema_version": schema_version,
                "embedding_model": embedding_model,
                "chunk_size_cfg": chunk_size,
                "overlap_cfg": overlap,
                "pipeline_hash": pipeline_hash,
                "char_count": len(chunk_text),
                "token_estimate": len(chunk_text) // 4,  # rough heuristic
            })
        return chunks

Two patterns are worth adding once you have the basics working. Late chunking: embed the full document before splitting, so each chunk's vector carries context from the surrounding document. A related technique, contextual enrichment, adds surrounding context to each chunk before embedding; Anthropic reported 49% fewer retrieval failures when combining contextual embeddings with contextual BM25. Parent document retrieval: index small child chunks for precise matching, but return the larger parent document to the LLM. Small chunks retrieve well; large chunks answer well. Use both.

Notice the `schema_version` field in the chunk above. This is how you manage chunking strategy changes without nuking your entire vector store. When you change chunk size, overlap, or separators, bump the schema version. This lets you query across versions, run A/B retrieval tests, and roll back, the same way a feature store manages feature versions. Without it, you discover schema drift the hard way.
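
Parent document retrieval, mentioned above, can be sketched in a few lines. The `ChildChunk` type and `expand_to_parents` helper are hypothetical names for illustration; the sketch assumes your retriever returns ranked child hits and that parent texts live in a simple key-value store.

```python
from dataclasses import dataclass


@dataclass
class ChildChunk:
    chunk_id: str
    parent_id: str  # ID of the larger section this chunk was cut from
    text: str


def expand_to_parents(
    hits: list[ChildChunk],
    parents: dict[str, str],
    max_parents: int = 3,
) -> list[str]:
    """Map ranked child hits to unique parent texts, preserving rank order."""
    seen: set[str] = set()
    context: list[str] = []
    for hit in hits:
        if hit.parent_id in seen or hit.parent_id not in parents:
            continue  # skip duplicates and orphaned children
        seen.add(hit.parent_id)
        context.append(parents[hit.parent_id])
        if len(context) == max_parents:
            break
    return context


# Illustrative data: two child hits share one parent section
parents = {
    "p1": "Section 4: Refund policy (full text)",
    "p2": "Section 7: Shipping (full text)",
}
hits = [
    ChildChunk("c3", "p1", "refunds within 30 days"),
    ChildChunk("c4", "p1", "if the item is unused"),
    ChildChunk("c9", "p2", "ships in 2 days"),
]
context = expand_to_parents(hits, parents)
```

Deduplicating by parent matters: several child hits from the same section should cost the LLM context window once, not once per hit.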

Failure 02: Embedding drift — the failure that returns results and says nothing

Embedding drift is the cruelest RAG failure. The system keeps returning results. Similarity scores still look reasonable. Recall drops from 0.92 to 0.74 over six months and there is nothing in your logs that explains why. Users start complaining that the answers “feel off.” You look at the LLM. The LLM is fine. The problem is that the geometry of your vector space has been quietly corrupted.

There are four distinct causes, and they’re worth naming precisely because each requires a different fix:

| Drift Cause | How It Happens | Symptom | Fix |
|---|---|---|---|
| Partial reindex | 20% of corpus re-embedded after a doc update. Mixed vectors in the same index. | Previously top-2 document now ranks #8 with no relevance change (silent). | Never partially reindex across a pipeline or model change. Reindex the entire corpus atomically. |
| Preprocessing delta | Bug fix in HTML stripper. Unicode normalisation added. Chunk window changed 512->480. | Same source doc, same model, structurally different vectors. | Track preprocessing as code. Pin versions. Any change = full reindex. |
| Model version change | New docs use text-embedding-3-small; old docs on ada-002. Spaces are not comparable. | Mixed-model store. v3 queries cannot reliably find v2 documents. | Never mix model versions. One version per namespace/collection. |
| Query distribution shift | Corpus indexed in Q1. Q3 brings new products, regulations, domain language. | Average similarity scores of top-1 result drift downward over weeks. | Monitor avg top-1 similarity weekly. Re-embed cold data quarterly. |

The partial reindex case is the most common in practice. A team updates 20% of their document corpus — maybe a new data source backfill or updated policy documents. Even using the same model version, small differences in preprocessing or floating-point non-determinism during batching put vectors in slightly different regions of the embedding space. A document that ranked second last week might now rank eighth, not because it became less relevant, but because the geometry around it shifted.
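
A cheap first check for this situation is to count how many distinct pipeline versions live in one index. The sketch below assumes you can export chunk metadata as a list of dicts carrying the `embedding_model` and `schema_version` fields from the chunking code earlier; `audit_index_versions` is a hypothetical helper name.

```python
from collections import Counter


def audit_index_versions(chunk_metadata: list[dict]) -> dict:
    """Count chunks per (embedding_model, schema_version) and flag mixtures."""
    counts = Counter(
        (m.get("embedding_model", "unknown"), m.get("schema_version", "unknown"))
        for m in chunk_metadata
    )
    return {"versions": dict(counts), "mixed": len(counts) > 1}


# Illustrative export: 20% of chunks were re-embedded under a new schema
sample = (
    [{"embedding_model": "text-embedding-3-large", "schema_version": "v3"}] * 8
    + [{"embedding_model": "text-embedding-3-large", "schema_version": "v4"}] * 2
)
report = audit_index_versions(sample)
print(report["mixed"], report["versions"])
```

This only catches drift that was recorded in metadata. Unversioned preprocessing changes still need the re-embedding comparison described in the next section.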

Detecting drift before it destroys recall

Detection requires baseline metrics you need to establish before drift begins. Once you’re trying to detect drift retroactively, you’ve already lost the comparison point.

    import numpy as np
    from datetime import datetime, timezone

    class EmbeddingDriftMonitor:
        """
        Detect embedding drift by comparing stored vectors against
        freshly computed vectors on a sample of benchmark documents.
        Run weekly as a scheduled job. Alert if cosine similarity
        drops below the threshold you establish at index creation time.
        """

        def __init__(self, embed_fn, vector_store, alert_threshold=0.97):
            self.embed_fn = embed_fn
            self.vector_store = vector_store
            self.threshold = alert_threshold  # tune per model; ~0.97 for ada-002

        def sample_and_check(self, doc_ids: list[str], n_sample: int = 100) -> dict:
            # Pull stored vectors for a sample of known documents.
            # Cap the sample size so small corpora don't raise an error.
            n = min(n_sample, len(doc_ids))
            sample_ids = np.random.choice(doc_ids, n, replace=False)
            results = []
            for doc_id in sample_ids:
                doc_id = str(doc_id)  # np.random.choice returns NumPy strings
                stored = self.vector_store.get_vector(doc_id)
                if stored is None:
                    continue
                # Re-embed with the current pipeline
                fresh_text = self.vector_store.get_source_text(doc_id)
                fresh_vec = np.asarray(self.embed_fn(fresh_text))
                stored_vec = np.asarray(stored["vector"])
                # Cosine similarity between stored and fresh embedding
                cos_sim = float(np.dot(stored_vec, fresh_vec) / (
                    np.linalg.norm(stored_vec) * np.linalg.norm(fresh_vec)
                ))
                results.append({
                    "doc_id": doc_id,
                    "cosine_sim": round(cos_sim, 4),
                    "model_stored": stored["embedding_model"],
                    "pipeline_hash_stored": stored["pipeline_hash"],
                    "drifted": cos_sim < self.threshold,
                })
            if not results:
                raise ValueError("no stored vectors found for the sampled doc_ids")
            mean_sim = float(np.mean([r["cosine_sim"] for r in results]))
            drift_pct = sum(1 for r in results if r["drifted"]) / len(results) * 100
            return {
                "mean_cosine_similarity": mean_sim,
                "pct_drifted": drift_pct,
                "alert": drift_pct > 5,  # >5% drifted = investigate
                "sample_results": results,
                "checked_at": datetime.now(timezone.utc).isoformat(),
            }

Vectors from `text-embedding-ada-002` and `text-embedding-3-small` are not in the same vector space. You cannot compare them with cosine similarity. This is obvious in theory and surprisingly common in practice: one team upgrades the embedding model for new documents while old documents stay on the previous version. The result is a mixed-model vector store where a v3 query systematically fails to surface v2 documents that are directly relevant. The fix is an index namespace per model version and a migration strategy, not a “we’ll fix it later” comment in the PR.
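
One way to make the namespace-per-model rule hard to violate is to derive the collection name from the model and schema version, so a writer or query using a different model cannot land in the same collection by accident. This is a sketch under assumptions: the `collection_name` helper and the naming scheme are hypothetical, and real vector stores have their own naming constraints.

```python
import re


def collection_name(base: str, embedding_model: str, schema_version: str) -> str:
    """Build a collection name that is unique per model and chunking schema."""
    raw = f"{base}__{embedding_model}__{schema_version}".lower()
    # Replace anything outside a conservative character set with a hyphen
    return re.sub(r"[^a-z0-9_]+", "-", raw)


old = collection_name("policies", "text-embedding-ada-002", "v2")
new = collection_name("policies", "text-embedding-3-small", "v3")
print(old)
print(new)
```

Queries then resolve their target collection through the same function, using the model that encodes the query. A query embedded with one model has no way to search vectors produced by another.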

Failure 03: Metadata staleness — your filter trusts a lie

The metadata problem is what happens when data engineers build a RAG pipeline as if documents were static. They aren’t. Policies get updated. Products get discontinued. Legal terms change. Records get deleted for compliance reasons. In a traditional data pipeline, you’d handle this with change data capture (CDC), partition pruning, or surrogate key management. In most RAG pipelines, nobody handles it at all.

The failure surface is wide. A retrieval filter that says doc_type == "current_policy" returns stale documents because the pipeline that updates doc_type metadata on policy revisions failed silently three months ago. A freshness filter based on updated_at returns documents that appear fresh in metadata but haven’t had their embeddings regenerated after a content change. A deletion event in the source system never propagated to the vector store, so deleted documents keep showing up in retrieval results.

The framing that makes this tractable is: treat embeddings as derived data, not artifacts. Derived data in any mature pipeline has a data contract, a freshness SLA, a versioning scheme, and a lineage trail. RAG systems should be no different.

The metadata schema every chunk needs

At ingestion time, every chunk in your vector store needs enough provenance metadata to answer three questions at query time: Is this document still valid? Was it processed with the current pipeline? And if I retrieve it, can I trace it back to the source record for debugging?

    from dataclasses import dataclass
    from datetime import datetime
    from typing import Optional

    @dataclass
    class ChunkMetadata:
        # Source provenance
        doc_id: str                      # stable ID in source system
        doc_version: str                 # source document version/hash
        source_updated_at: datetime      # when the source record last changed
        source_url: str                  # for audit trail

        # Content classification
        doc_type: str                    # 'policy', 'faq', 'product', 'legal', etc.
        doc_status: str                  # 'current', 'deprecated', 'draft'
        valid_from: datetime             # when this version became authoritative
        valid_until: Optional[datetime]  # None = no expiry

        # Pipeline provenance
        embedding_model: str             # 'text-embedding-3-large-v1'
        schema_version: str              # chunking config version
        pipeline_hash: str               # hash of preprocessing config
        indexed_at: datetime             # when this embedding was written

        # Chunk coordinates
        chunk_index: int                 # position within document
        total_chunks: int                # total chunks for this doc version

        # Access control
        tenant_id: Optional[str]         # for multi-tenant filtering
        classification: str = "internal" # 'public', 'internal', 'confidential'

With this metadata in place, your retrieval layer can enforce freshness as a first-class constraint rather than hoping the pipeline kept things up to date.
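
A minimal sketch of that constraint, written as a post-filter over retrieved hits. It assumes each hit is a dict with a `metadata` key holding the fields above and that all datetimes are timezone-aware; `is_servable` and `enforce_freshness` are hypothetical names. Where your vector store supports metadata filters in the query itself, pushing the same predicate down is usually preferable.

```python
from datetime import datetime, timezone
from typing import Optional


def is_servable(meta: dict, now: Optional[datetime] = None) -> bool:
    """Return True only if the chunk's source document is authoritative at `now`."""
    now = now or datetime.now(timezone.utc)
    if meta.get("doc_status") != "current":
        return False
    if meta["valid_from"] > now:
        return False  # this version is not yet in force
    valid_until = meta.get("valid_until")
    return valid_until is None or valid_until > now


def enforce_freshness(hits: list[dict], now: Optional[datetime] = None) -> list[dict]:
    """Drop retrieved chunks whose metadata says they should not be served."""
    return [h for h in hits if is_servable(h["metadata"], now)]


# Illustrative hits: one valid, one deprecated, one expired
now = datetime(2026, 4, 1, tzinfo=timezone.utc)
start = datetime(2025, 1, 1, tzinfo=timezone.utc)
hits = [
    {"id": "a", "metadata": {"doc_status": "current", "valid_from": start, "valid_until": None}},
    {"id": "b", "metadata": {"doc_status": "deprecated", "valid_from": start, "valid_until": None}},
    {"id": "c", "metadata": {"doc_status": "current", "valid_from": start,
                             "valid_until": datetime(2026, 1, 1, tzinfo=timezone.utc)}},
]
fresh_ids = [h["id"] for h in enforce_freshness(hits, now)]
```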

Once your source data is dynamic, treat the embedding pipeline as a CDC consumer. Changes in your source system (policy update, document deletion, status change) should emit events that trigger selective re-embedding, not be discovered weeks later when a user reports wrong answers. AWS DMS, Debezium, or logical replication from your primary database are all valid CDC sources. The embedding pipeline then processes only the changed rows — same pattern as any incremental derived table in your data warehouse.
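
The consumer side of that pattern can be sketched as a small router. The event shape here (`doc_id`, `op`, `content_changed`, `metadata`) is an assumption for illustration, not the envelope format of Debezium or AWS DMS, and the three callbacks stand in for whatever your pipeline exposes.

```python
from typing import Callable


def handle_change_event(
    event: dict,
    reembed: Callable[[str], None],
    delete_chunks: Callable[[str], None],
    update_metadata: Callable[[str, dict], None],
) -> str:
    """Route one change event to the cheapest action that keeps the index correct."""
    doc_id = event["doc_id"]
    op = event["op"]
    if op == "delete":
        delete_chunks(doc_id)
        return "deleted"
    if op in ("insert", "update"):
        # Metadata-only updates (e.g. a status flip) need no new embeddings
        if op == "update" and not event.get("content_changed", True):
            update_metadata(doc_id, event.get("metadata", {}))
            return "metadata_updated"
        reembed(doc_id)
        return "reembedded"
    raise ValueError(f"unknown op: {op!r}")


# Record calls instead of touching a real vector store
log: list[tuple] = []
actions = dict(
    reembed=lambda doc_id: log.append(("reembed", doc_id)),
    delete_chunks=lambda doc_id: log.append(("delete", doc_id)),
    update_metadata=lambda doc_id, meta: log.append(("metadata", doc_id, meta)),
)
outcomes = [
    handle_change_event({"doc_id": "policy-7", "op": "update"}, **actions),
    handle_change_event(
        {"doc_id": "policy-8", "op": "update", "content_changed": False,
         "metadata": {"doc_status": "deprecated"}},
        **actions,
    ),
    handle_change_event({"doc_id": "policy-9", "op": "delete"}, **actions),
]
```

Separating metadata-only updates from content updates keeps status changes cheap: flipping a document to deprecated should not cost an embedding call.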

Failure 04: Re-indexing economics — why full reindexes are killing your pipeline

At some point every RAG system crosses the threshold where the obvious fix — delete everything and reindex from scratch — becomes unaffordable. The naive approach has three failure modes: it’s expensive (embedding costs multiply with corpus size), it’s slow (pipeline SLAs break during the rebuild), and it’s all-or-nothing (a partial failure mid-reindex leaves you in an inconsistent state worse than the one you started with).

The embedding cost math is concrete. At OpenAI’s current pricing of $0.13 per million tokens, a corpus of 10 million documents at 500 tokens each costs $650 to fully reindex. Every full reindex. Voyage AI’s pricing is $0.06 per million tokens — still $300 per full reindex at that scale. If you’re doing this weekly because your document corpus is volatile, you’ve designed yourself into a recurring cost problem.
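
The arithmetic behind those figures, as a small sketch you can rerun with your own corpus size and pricing. The function name is hypothetical and the prices are the ones quoted above.

```python
def full_reindex_cost(n_docs: int, tokens_per_doc: int, usd_per_million_tokens: float) -> float:
    """Embedding cost in USD for re-embedding the whole corpus once."""
    total_tokens = n_docs * tokens_per_doc
    return total_tokens / 1_000_000 * usd_per_million_tokens


# 10 million documents at 500 tokens each = 5 billion tokens
cost_at_013 = full_reindex_cost(10_000_000, 500, 0.13)
cost_at_006 = full_reindex_cost(10_000_000, 500, 0.06)
weekly_for_a_year = cost_at_013 * 52

print(f"${cost_at_013:,.0f} per full reindex at $0.13/M tokens")
print(f"${cost_at_006:,.0f} per full reindex at $0.06/M tokens")
print(f"${weekly_for_a_year:,.0f} per year if reindexed weekly at $0.13/M tokens")
```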

The right architecture treats embeddings as incrementally updated derived data — the same pattern you’d use for any derived table that needs to stay current without full recomputation.

The incremental indexing contract

    import hashlib
    from dataclasses import dataclass
    from datetime import datetime, timezone
    from typing import Literal, Optional

    ChangeType = Literal["insert", "update", "delete", "schema_change"]

    @dataclass
    class IndexLedgerEntry:
        """
        One row per document in the index ledger.
        This is the source of truth for what's been indexed and when.
        Store in a relational DB (Postgres, SQLite) alongside your vector store.
        """
        doc_id: str
        content_hash: str     # SHA-256 of source content
        pipeline_hash: str    # SHA-256 of chunking + preprocessing config
        embedding_model: str
        schema_version: str
        chunk_ids: list[str]
        indexed_at: datetime
        status: str           # 'current', 'stale', 'deleted'

    def compute_content_hash(text: str) -> str:
        return hashlib.sha256(text.encode()).hexdigest()

    def sync_document(
        doc_id: str,
        source_text: str,
        pipeline_hash: str,
        embedding_model: str,
        schema_version: str,
        ledger,               # IndexLedger instance
        vector_store,
        embed_fn,
        chunk_fn,
    ) -> Optional[ChangeType]:
        """
        Sync one document. Only touches the vector store if something changed.
        Returns the type of change made (or None if no-op).
        """
        content_hash = compute_content_hash(source_text)
        existing = ledger.get(doc_id)

        # No-op: same content, same pipeline
        if (existing
                and existing.content_hash == content_hash
                and existing.pipeline_hash == pipeline_hash):
            return None  # nothing to do

        # Re-chunk and re-embed first, so a failure here leaves the old chunks in place
        chunks = chunk_fn(source_text, doc_id)
        embeddings = embed_fn([c["text"] for c in chunks])

        # Delete stale chunks from the vector store, then upsert the new ones
        if existing:
            vector_store.delete(ids=existing.chunk_ids)
        chunk_ids = vector_store.upsert(chunks, embeddings)

        # Update ledger (every dataclass field must be supplied)
        ledger.upsert(IndexLedgerEntry(
            doc_id=doc_id,
            content_hash=content_hash,
            pipeline_hash=pipeline_hash,
            embedding_model=embedding_model,
            schema_version=schema_version,
            chunk_ids=chunk_ids,
            indexed_at=datetime.now(timezone.utc),
            status="current",
        ))
        return "update" if existing else "insert"

The ledger pattern means every sync run only processes documents where either content or pipeline configuration actually changed. For a 1 million document corpus where 0.5% of documents change daily, this reduces your daily embedding cost by roughly 99.5% compared with re-embedding everything each day. The ledger itself is a small relational table, trivially cheap to store and query.

When full reindexing is unavoidable

Two events require full reindexing and there is no shortcut: changing the embedding model (all vector spaces are incompatible across model versions), and changing chunking configuration in a way that changes the semantic content of chunks (chunk size change, different separators, adding or removing overlap). The right approach for full reindexes is a blue/green pattern — build the new index in a separate namespace while the current index serves traffic, validate quality metrics against your benchmark queries, then atomically cut over.
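
The cutover step can be sketched as a guarded alias switch. This is an illustration under assumptions: the `aliases` dict stands in for whatever alias or routing mechanism your vector store provides, and `evaluate_recall` is a hypothetical callback that runs your benchmark queries against a named index.

```python
from typing import Callable


def blue_green_cutover(
    aliases: dict[str, str],
    alias: str,
    candidate: str,
    evaluate_recall: Callable[[str], float],
    min_recall: float,
) -> bool:
    """Point `alias` at `candidate` only if it passes the benchmark."""
    recall = evaluate_recall(candidate)
    if recall < min_recall:
        return False  # keep serving the current index
    aliases[alias] = candidate  # one assignment is the whole cutover
    return True


# Illustrative run: the first candidate fails validation, the second passes
aliases = {"policies": "policies_v3"}
rejected = blue_green_cutover(aliases, "policies", "policies_v4_bad", lambda name: 0.80, 0.90)
after_reject = aliases["policies"]
accepted = blue_green_cutover(aliases, "policies", "policies_v4", lambda name: 0.93, 0.90)
```

Because readers only ever resolve the alias, rolling back is the same operation in reverse, provided the old index has not been dropped yet.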

Your vector index type affects how well it handles incremental updates. HNSW graphs support dynamic node insertion reasonably well — good for frequent small updates. IVF indexes require periodic retraining to adapt to shifting data distributions, which adds latency overhead as your corpus grows. For most production RAG systems with volatile corpora, HNSW is the safer default. For read-heavy, write-rare corpora at massive scale, IVF with periodic retraining is more efficient.

Failure 05: Ingestion layer vs retrieval layer — finding where the pipeline actually broke

When a RAG system gives a wrong answer, the instinct is to blame the LLM. Sometimes that’s right. More often, the failure is three steps upstream and the LLM is just faithfully working with bad retrieved context. Debugging RAG requires a systematic layer-isolation approach — you cannot determine the root cause without testing each layer independently.

The diagnostic queries

Run these in order. Each narrows the failure to a specific layer.

1. Does the source document exist and is it current? Query your source system. Verify the document exists, has the expected content, and was last modified when you think it was. If it was modified after your last index run and the vector store doesn’t reflect it, metadata staleness is confirmed.

2. Is the document in the vector store at all? Query by `doc_id` metadata filter, not by vector similarity: `vector_store.get(where={"doc_id": target_id})`. If it’s missing entirely, the ingestion job failed silently. If it’s present but `indexed_at` is stale, the update pipeline isn’t running.

3. Do the chunks contain the answer text? For each chunk returned by step 2, manually inspect whether the relevant content is actually inside the chunk. If the answer spans a chunk boundary, this is a chunking failure. Adjust `chunk_size`, `overlap`, or separator strategy.

4. Does vector similarity actually surface the right chunks? Embed the query and manually compute cosine similarity against the chunks you found in step 3. If similarity is low (<0.6) on chunks that clearly contain the answer, you have an embedding model mismatch or the `pipeline_hash` differs between the query encoder and the stored vectors.

5. Is the metadata filter eliminating the relevant chunks? Re-run retrieval with no metadata filter. If the relevant chunks now appear, your `doc_status`, `valid_until`, or `doc_type` filter is wrong: the document is marked stale or deprecated when it shouldn’t be, or the filter is misconfigured.

6. Does the LLM produce the right answer with the right context? Only once steps 1–5 confirm that retrieval is surfacing good chunks, test the LLM in isolation by manually injecting those chunks as context. If the answer is still wrong now, this is a generation failure, which is a different debugging track entirely.
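
The ordering logic of these steps can be sketched as a short-circuiting runner: stop at the first layer whose check fails, and suspect generation only when every upstream check passes. The layer names and the lambda checks below are placeholders; in practice each check would wrap the corresponding query from the list above.

```python
from typing import Callable

Check = tuple[str, Callable[[], bool]]


def first_failing_layer(checks: list[Check]) -> str:
    """Run checks in order and return the first layer that fails."""
    for layer, check in checks:
        if not check():
            return layer
    # Everything upstream passed, so the remaining suspect is generation
    return "generation"


# Placeholder results standing in for real diagnostics on one bad answer
checks: list[Check] = [
    ("source", lambda: True),      # step 1: source doc exists and is current
    ("ingestion", lambda: True),   # step 2: doc_id present in the vector store
    ("chunking", lambda: False),   # step 3: answer text split across chunks
    ("embedding", lambda: True),   # step 4: similarity surfaces the chunks
    ("filter", lambda: True),      # step 5: filter keeps the relevant chunks
]
culprit = first_failing_layer(checks)
print(culprit)
```

Stopping at the first failure matters because downstream checks are meaningless once an upstream layer is broken: similarity scores on chunks that never contained the answer tell you nothing.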

Logging every layer, every query

The diagnostics above only work if you have the data to run them. That means logging the entire pipeline for every query in production, not just errors:

    from dataclasses import dataclass, field
    from datetime import datetime, timezone
    from typing import Optional
    import uuid

    @dataclass
    class RAGQueryTrace:
        """
        One row per user query in your rag_query_traces table.
        Partition by date. Query this to debug failures and track drift.
        This is your observability table. Treat it like production infrastructure.
        """
        trace_id: str = field(default_factory=lambda: str(uuid.uuid4()))
        query_text: str = ""
        query_embedding_model: str = ""

        # Retrieval outcome
        retrieved_chunk_ids: list[str] = field(default_factory=list)
        retrieved_doc_ids: list[str] = field(default_factory=list)
        top1_similarity: float = 0.0
        top1_doc_version: str = ""
        top1_indexed_at: str = ""
        filter_applied: dict = field(default_factory=dict)
        chunks_before_filter: int = 0
        chunks_after_filter: int = 0

        # LLM generation outcome
        answer_text: str = ""
        llm_model: str = ""
        input_tokens: int = 0
        output_tokens: int = 0
        latency_ms: int = 0

        # Quality signals (populated async by eval job)
        eval_faithfulness: Optional[float] = None  # did answer stay grounded in context?
        eval_relevance: Optional[float] = None     # did context match the question?
        user_thumbs_down: bool = False             # explicit negative signal

        recorded_at: str = field(
            default_factory=lambda: datetime.now(timezone.utc).isoformat()
        )

With this table populated, your debugging process becomes a SQL query. “Show me all queries where `top1_similarity` < 0.65 in the last 30 days” isolates retrieval failures. “Show me queries where `top1_indexed_at` is more than 60 days old” flags metadata staleness. “Show me queries where `chunks_before_filter` != `chunks_after_filter` and `chunks_after_filter` == 0” finds cases where your metadata filter eliminated everything and the LLM had no context at all.
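
Those three questions translate almost directly into SQL. The sketch below uses an in-memory SQLite table with a subset of the trace columns and three made-up rows, purely so it runs anywhere. Staleness is measured as the age of `top1_indexed_at` at query time, and the "last 30 days" window is omitted to keep the example deterministic.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE rag_query_traces (
        trace_id TEXT PRIMARY KEY,
        top1_similarity REAL,
        top1_indexed_at TEXT,
        chunks_before_filter INTEGER,
        chunks_after_filter INTEGER,
        recorded_at TEXT
    )
""")
# Illustrative rows, not real production data
rows = [
    ("t1", 0.91, "2026-03-30T00:00:00", 12, 5, "2026-04-01T10:00:00"),
    ("t2", 0.52, "2026-03-30T00:00:00", 10, 4, "2026-04-01T11:00:00"),
    ("t3", 0.88, "2025-11-01T00:00:00", 8, 0, "2026-04-02T09:00:00"),
]
conn.executemany("INSERT INTO rag_query_traces VALUES (?, ?, ?, ?, ?, ?)", rows)


def ids(sql: str, params: tuple = ()) -> list[str]:
    """Return the trace_id column of a query as a list."""
    return [r[0] for r in conn.execute(sql, params)]


# Retrieval failures: weak best match
low_similarity = ids(
    "SELECT trace_id FROM rag_query_traces "
    "WHERE top1_similarity < ? ORDER BY trace_id", (0.65,))

# Staleness: top result was indexed more than 60 days before the query
stale_index = ids(
    "SELECT trace_id FROM rag_query_traces "
    "WHERE julianday(recorded_at) - julianday(top1_indexed_at) > ? "
    "ORDER BY trace_id", (60,))

# Filter wiped out every candidate, so the LLM had no context
filter_wiped = ids(
    "SELECT trace_id FROM rag_query_traces "
    "WHERE chunks_before_filter > 0 AND chunks_after_filter = 0 "
    "ORDER BY trace_id")
```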

The pipeline contract that makes this all work

Every failure mode in this article shares a common root: the RAG ingestion pipeline was built without contracts between producers and consumers. Source systems change documents without notifying the embedding pipeline. Preprocessing logic evolves without triggering reindexing. Metadata filters get configured once and never validated. Chunks get written to the vector store with no provenance, so debugging requires guesswork.

The contract that fixes this has four components that data engineers already know how to build:

- Provenance on every chunk. `doc_id`, `doc_version`, `embedding_model`, `pipeline_hash`, `schema_version`, `indexed_at`. Non-negotiable. Without these, debugging is archaeology.

- A ledger for incremental indexing. A relational table that tracks `content_hash` and `pipeline_hash` per document. Only re-embed what changed. Treat the embedding as derived data, not a snapshot artifact.

- Freshness enforcement at retrieval time. Filters on `valid_until`, `doc_status`, and `source_updated_at` built into every query. The retriever should refuse to surface stale content, not leave that to the LLM to sort out.

- A query traces table. One row per query, every query. `top1_similarity`, `retrieved_doc_ids`, `chunks_before_filter`, answer text, latency, optional eval scores. This is how you know when the pipeline is degrading before users tell you.
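
The first component is the easiest to enforce mechanically. A minimal sketch, assuming chunk metadata arrives as a dict at write time; `missing_provenance` is a hypothetical gate you would call before any upsert and fail the batch on a non-empty result.

```python
REQUIRED_PROVENANCE = (
    "doc_id", "doc_version", "embedding_model",
    "pipeline_hash", "schema_version", "indexed_at",
)


def missing_provenance(chunk_meta: dict) -> list[str]:
    """Return the required provenance fields that are absent or empty."""
    return [k for k in REQUIRED_PROVENANCE if chunk_meta.get(k) in (None, "")]


# Illustrative chunk written without version information
incomplete = {
    "doc_id": "policy-7",
    "embedding_model": "text-embedding-3-large",
    "pipeline_hash": "",
    "indexed_at": "2026-04-01T00:00:00+00:00",
}
gaps = missing_provenance(incomplete)
print(gaps)
```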

None of this is glamorous. It’s the same work that makes data warehouses reliable: lineage, freshness contracts, observability, and incremental updates. The LLM layer of a RAG system gets the most attention because it’s the most visible. The pipeline that feeds it gets the least attention because it’s invisible until it breaks.

The teams that build reliable RAG systems in production aren’t the ones who chose the best embedding model. They’re the ones who treated the ingestion pipeline with the same engineering rigour they’d apply to any other production data system.

Further reading

- Vectara NAACL 2025 chunking study (arXiv:2410.13070)

- PremAI RAG Chunking 2026 Benchmark

- Decompressed: Detecting Embedding Drift

- RAG Is a Data Engineering Problem (DEV.to)

If you want the foundational patterns for building reliable data pipelines — the kind of engineering rigour that makes RAG systems actually work in production — this is the book:

Fundamentals of Data Engineering by Joe Reis & Matt Housley — the comprehensive guide to the data lifecycle, pipeline design, lineage, and observability patterns that every data engineer building production RAG should understand.

Disclaimer: This article synthesises findings from published research including the Vectara NAACL 2025 study (arXiv:2410.13070), Chroma’s July 2025 retrieval evaluation, FloTorch’s 2026 benchmarks, and practitioner documentation. All cited statistics are attributed to their source. Code examples are illustrative and should be adapted to your stack. The author has no affiliation with any referenced tool or vendor. This article contains affiliate links — purchasing through them supports this blog at no extra cost to you.
