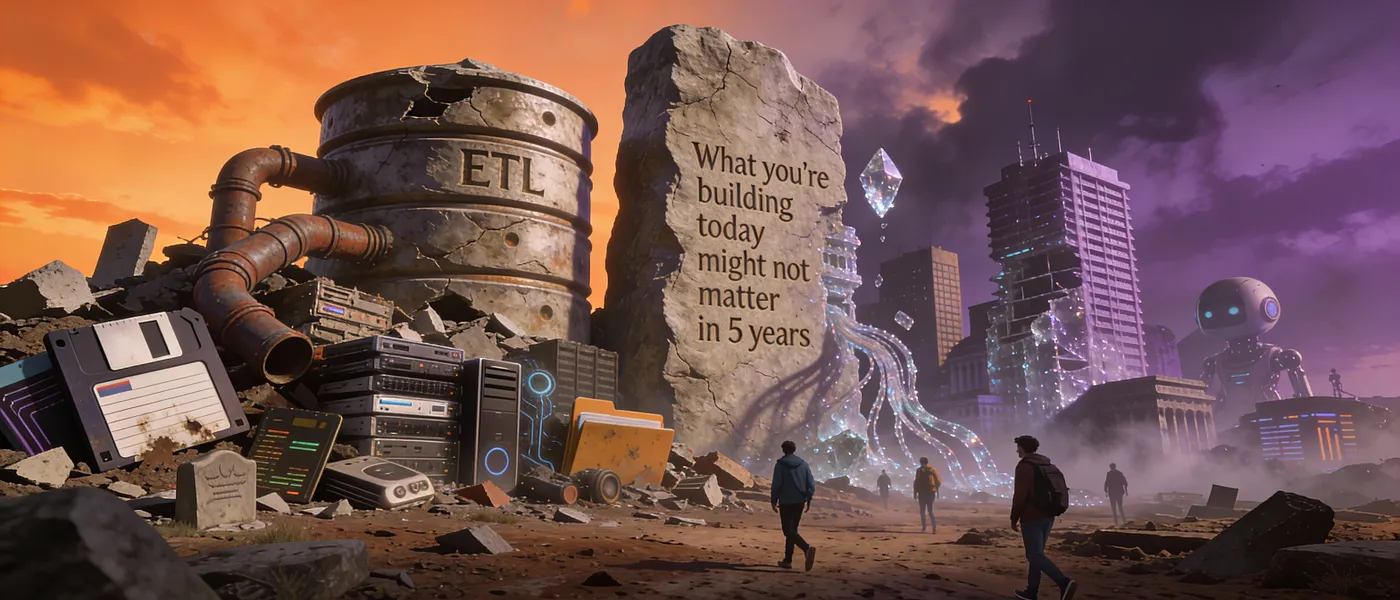

A controversial thesis on why ETL, data warehouses, and the entire modern data stack are about to become as obsolete as floppy disks — and what’s coming to replace them

I’m about to make a career-limiting prediction: By 2030, the job “data engineer” as we know it today will no longer exist.

Not because we won’t need data infrastructure. But because the entire conceptual framework we’ve built over the last 20 years — ETL, data warehouses, data lakes, the modern data stack — will have collapsed under the weight of its own complexity.

And something radically simpler will have taken its place.

This isn’t doom and gloom. It’s evolution. And if you’re reading this, you have a five-year window to position yourself on the right side of history. Let me show you why.

The Uncomfortable Truth About Our Current Architecture

Why we built a Rube Goldberg machine when we thought we were building the future

Here’s the data stack you’re probably running right now:

```
Production DB (Postgres)
    ↓
Fivetran/Airbyte (ETL)
    ↓
Snowflake/BigQuery (Warehouse)
    ↓
dbt (Transformation)
    ↓
Cube/Looker (Semantic Layer)
    ↓
Tableau/Metabase (BI)
    ↓
Reverse ETL (Back to production)
```

Stop and really look at this. We’re copying data across six different systems just to answer the question “how many users signed up today?”

This is insane.

We didn’t design this deliberately. It emerged organically because:

- Operational databases weren’t fast enough for analytics (2000s problem)

- Analytical databases weren’t good for transactions (still true, but…)

- Raw data needed transformation (true, but why in a separate system?)

- Business users needed a semantic layer (why not just… better databases?)

- ML models needed features back in production (completing the circle of madness)

Each layer was added to solve a real problem. But the cumulative complexity has become the bigger problem.

My bet: By 2028, this entire stack collapses into 2–3 systems. Maybe just one.

Prediction #1: The Transactional-Analytical Database Merge

Why the OLTP/OLAP divide will disappear in our lifetime

The fundamental assumption of modern data architecture is that you need separate systems for:

- OLTP (transactional): Fast writes, row-based, normalized

- OLAP (analytical): Fast reads, column-based, denormalized

This made sense when hardware was expensive and specialized. But that world is dying.

Look at what’s happening:

SingleStore: Already running transactions and analytics on the same database. Sub-second queries on billions of rows while handling 100K+ writes per second.

DuckDB: Embedded analytical database that’s making OLAP as easy as SQLite made OLTP.

ClickHouse: Originally analytical, now adding transactional features.

TiDB: Transactional database that handles analytical queries without separate systems.

The pattern is clear: databases are becoming omni-operational. One system that handles everything.

Here’s what I think happens:

```python
# 2025: Current architecture
class UserService:
    def create_user(self, email):
        # Write to production DB
        prod_db.execute("INSERT INTO users...")
        # Wait 4 hours for Fivetran to sync
        # Wait 2 hours for dbt to transform
        # Wait 1 hour for cache to refresh
        # Finally: Analytics is 7 hours stale
```

```python
# 2028: Future architecture
class UserService:
    def create_user(self, email):
        # Write to unified database
        db.execute("INSERT INTO users...")
        # Analytics is instantly available
        # No ETL. No warehouse. No delay.
        # One transaction, visible everywhere immediately.
```

What this means:

- ETL tools (Fivetran, Airbyte) lose 60% of their use cases

- Data warehouses become optional, not required

- The entire “modern data stack” becomes a legacy pattern

- Real-time becomes the default, not the exception
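To make the “one write, visible everywhere” idea concrete, here is a toy sketch that uses Python’s built-in `sqlite3` module as a stand-in. SQLite is not an HTAP engine and does not scale like SingleStore or TiDB; it only illustrates the shape described above: the analytical question (“how many users signed up today?”) runs against the same store the transaction just wrote to, so there is no sync step in between. The table and column names are hypothetical.

```python
import sqlite3
from datetime import date

# In-memory database standing in for a hypothetical unified (HTAP) store.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT UNIQUE, signup_date TEXT)"
)


def create_user(email: str, signup_date: str) -> None:
    """Transactional write: the `with` block commits on success, rolls back on error."""
    with conn:
        conn.execute(
            "INSERT INTO users (email, signup_date) VALUES (?, ?)",
            (email, signup_date),
        )


def signups_on(day: str) -> int:
    """Analytical read against the same store: no ETL, no warehouse, no lag."""
    (count,) = conn.execute(
        "SELECT COUNT(*) FROM users WHERE signup_date = ?", (day,)
    ).fetchone()
    return count


today = date.today().isoformat()
create_user("ada@example.com", today)
create_user("grace@example.com", today)
print(signups_on(today))  # prints 2: the new rows are counted immediately
```

At real scale, the hard part a unified database has to solve is keeping that analytical read fast while writes keep arriving, which is where columnar replicas or hybrid storage come in.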

My timeline: First Fortune 500 company publicly abandons Snowflake for a unified database by 2027.

Further reading:

- The End of OLAP vs OLTP by SingleStore

- DuckDB: The Swiss Army Knife of Data Tools

Prediction #2: AI Will Write Your Data Pipelines (But Not How You Think)

The real disruption isn’t GitHub Copilot; it’s something far more fundamental

Everyone’s worried about AI replacing data engineers. That’s the wrong fear. AI isn’t going to replace data engineers by writing better Python code. It’s going to replace data engineers by eliminating the need for data pipelines entirely.

Let me explain.

Today, when you want to add a new data source, you:

- Study the source API/schema (2 hours)

- Write extraction code (4 hours)

- Handle pagination, rate limiting, errors (3 hours)

- Write transformation logic (6 hours)

- Add data quality checks (4 hours)

- Write tests (3 hours)

- Set up monitoring (2 hours)

- Deploy and maintain (ongoing)

Total: 24+ hours for a single data source.
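The pagination, rate-limiting, and error-handling step in that list is representative of where the hours go. Below is a minimal sketch of the usual pattern. It assumes a hypothetical `fetch_page(cursor)` function that returns a page of records plus the next cursor (or `None` when done) and raises a hypothetical `RateLimited` exception when throttled. The extractor follows cursors until they run out and retries throttled calls with exponential backoff. Real APIs differ in how they signal both.

```python
import time
from typing import Callable, Iterator, Optional


class RateLimited(Exception):
    """Hypothetical error raised by the source API when it throttles us."""


# A page is (records, next_cursor); next_cursor is None on the last page.
Page = tuple[list[dict], Optional[str]]


def extract_all(
    fetch_page: Callable[[Optional[str]], Page],
    max_retries: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Iterator[dict]:
    """Yield every record, following cursors and backing off on rate limits."""
    cursor: Optional[str] = None
    while True:
        for attempt in range(max_retries + 1):
            try:
                records, next_cursor = fetch_page(cursor)
                break
            except RateLimited:
                if attempt == max_retries:
                    raise  # give up after the last allowed retry
                # Exponential backoff: 1s, 2s, 4s, ... between retries.
                sleep(base_delay * 2 ** attempt)
        yield from records
        if next_cursor is None:
            return
        cursor = next_cursor
```

Injecting `sleep` keeps the function testable without real waits. Production code would usually add jitter and honor a `Retry-After` header if the API sends one.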

Now imagine this:

```python
# 2025: Traditional approach
from airflow import DAG
from custom_extractors import SalesforceExtractor
from dbt_runner import run_dbt

# 300 lines of code, 10 failure modes, 5 edge cases...
```

```python
# 2027: AI-native approach
from autonomous_data_platform import DataSource

salesforce = DataSource.connect(
    "Salesforce",
    credentials=env.SALESFORCE_API_KEY,
    intent="sync all opportunity data for revenue analytics",
)

# That's it. The AI:
# - Discovers the schema automatically
# - Infers relationships between objects
# - Suggests transformations based on similar pipelines
# - Generates quality checks from data patterns
# - Handles errors autonomously
# - Evolves as the source changes
```

But here’s where it gets wild: The AI doesn’t just write pipelines. It eliminates them.

Instead of:

```
Source → ETL → Warehouse → Transform → BI
```

We get:

```
Source → AI Agent → Query Result
```

The AI agent:

- Queries sources directly when needed

- Caches intelligently based on access patterns

- Joins across systems in real-time

- Learns from each query to optimize the next

No pipelines. No schedules. No maintenance. Just queries.
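“Caches intelligently based on access patterns” can mean many things. The simplest version is a time-to-live (TTL) cache in front of on-demand source queries: a question repeated within the TTL is answered from memory, and a stale entry triggers a fresh query against the source. This is a hedged sketch, not how any particular agent product works. `run_query` is a hypothetical callable that hits the source system, and the clock is injectable so the expiry logic can be tested.

```python
import time
from typing import Any, Callable


class TTLQueryCache:
    """Serve repeated queries from memory until their entries expire."""

    def __init__(self, run_query: Callable[[str], Any], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.run_query = run_query
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries: dict[str, tuple[float, Any]] = {}  # sql -> (fetched_at, result)
        self.hits = 0
        self.misses = 0

    def query(self, sql: str) -> Any:
        now = self.clock()
        entry = self._entries.get(sql)
        if entry is not None and now - entry[0] < self.ttl:
            self.hits += 1
            return entry[1]
        # Miss or stale entry: go to the source and remember when we did.
        self.misses += 1
        result = self.run_query(sql)
        self._entries[sql] = (now, result)
        return result
```

A smarter agent would tune the TTL per query from how often it is asked and how quickly the underlying data changes; the hit and miss counters are the raw material for that.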

Case study that makes me believe this:

I watched a startup demo an AI data agent last month. You could ask: “What’s our customer churn rate by cohort?” and it would:

- Identify which tables contained customer data (across 3 systems)

- Determine what “churn” means from past queries and documentation

- Generate SQL to calculate churn by cohort

- Execute it

- Return results with confidence intervals

Time to insight: 12 seconds. No pipeline needed.
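For a sense of what such an agent has to generate, here is one plausible churn-by-cohort query, run through Python’s `sqlite3`. The churn definition is an assumption (cohort = signup month; churned = never active, or no activity in the 30 days before an as-of date), and choosing that definition is exactly the step the demo claimed to infer from past queries and documentation. The `users` and `events` tables are hypothetical, and no confidence intervals are computed.

```python
import sqlite3

CHURN_BY_COHORT = """
WITH last_seen AS (
    SELECT u.id,
           substr(u.signup_date, 1, 7) AS cohort,   -- 'YYYY-MM'
           MAX(e.event_date)           AS last_event
    FROM users u
    LEFT JOIN events e ON e.user_id = u.id
    GROUP BY u.id, u.signup_date
)
SELECT cohort,
       COUNT(*) AS user_count,
       -- churned: never active, or inactive for the 30 days before the as-of date
       AVG(CASE WHEN last_event IS NULL
                  OR last_event < date(:as_of, '-30 days')
                THEN 1.0 ELSE 0.0 END) AS churn_rate
FROM last_seen
GROUP BY cohort
ORDER BY cohort
"""


def churn_by_cohort(conn: sqlite3.Connection, as_of: str) -> list[tuple]:
    """Return (cohort, user_count, churn_rate) rows under the assumed definition."""
    return conn.execute(CHURN_BY_COHORT, {"as_of": as_of}).fetchall()
```

Swapping in a different definition of churn means changing one `CASE` expression, which is also why two teams (or two agents) can disagree on the number without either query being buggy.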

My bet: By 2029, 50% of data pipelines at tech companies are replaced by AI agents that query on-demand.

Further reading:

- The AI Agent Approach to Data Integration

- Foundation Models for Data Management (Research paper)

Prediction #3: The Semantic Layer Eats Everything

Why metrics layers will become more valuable than the data they query

Here’s a question that keeps me up at night: What’s more valuable — the raw data or the definitions of what that data means?

We’ve spent 20 years optimizing data storage and processing. But we’ve barely scratched the surface of data semantics — the actual meaning of the data.

Consider “revenue.” Simple concept, right? But in a real company:

```yaml
# Marketing's definition
revenue_marketing:
  sql: SELECT SUM(amount) FROM transactions WHERE status = 'complete'

# Finance's definition
revenue_finance:
  sql: |
    SELECT SUM(amount) FROM transactions
    WHERE status IN ('complete', 'processing')
      AND refunded = false
      AND type != 'internal'

# Sales's definition
revenue_sales:
  sql: SELECT SUM(commission_amount) FROM deals WHERE closed = true

# Accounting's definition
revenue_accounting:
  sql: "[300 lines of GAAP-compliant SQL with accrual logic]"
```

Same word, four different numbers. This is the real problem in data.

The semantic layer revolution:

Companies are realizing the definitions are more valuable than the data itself. Because:

- Data without definitions is noise

- Definitions are reusable across tools

- Definitions encode business logic

- Definitions enable collaboration
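“Define once, reuse everywhere” can be sketched as a small registry: each metric is a named object that compiles to SQL, and every consumer (dashboard, API, ML job) asks the registry for the query instead of writing its own. This is a hypothetical toy, not the API of Cube, dbt, or any other metrics layer. It reuses the finance definition of revenue from the example above.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Metric:
    """One named business definition: an aggregate over a table plus filters."""
    name: str
    expression: str                 # e.g. "SUM(amount)"
    table: str
    filters: tuple[str, ...] = ()

    def to_sql(self, group_by: Optional[str] = None) -> str:
        select = f"{group_by}, " if group_by else ""
        sql = f"SELECT {select}{self.expression} AS {self.name} FROM {self.table}"
        if self.filters:
            sql += " WHERE " + " AND ".join(f"({f})" for f in self.filters)
        if group_by:
            sql += f" GROUP BY {group_by}"
        return sql


@dataclass
class MetricRegistry:
    metrics: dict[str, Metric] = field(default_factory=dict)

    def define(self, metric: Metric) -> None:
        # One name, one definition: refuse silent redefinitions.
        if metric.name in self.metrics:
            raise ValueError(f"metric {metric.name!r} is already defined")
        self.metrics[metric.name] = metric

    def sql(self, name: str, group_by: Optional[str] = None) -> str:
        return self.metrics[name].to_sql(group_by)


registry = MetricRegistry()
registry.define(Metric(
    name="revenue",
    expression="SUM(amount)",
    table="transactions",
    filters=("status IN ('complete', 'processing')",
             "refunded = false",
             "type != 'internal'"),
))
```

A dashboard calling `registry.sql("revenue", group_by="region")` and an ML job calling `registry.sql("revenue")` now share one definition; changing the filters changes both.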

Here’s where I think this goes:

```
# 2025: Data warehouse-centric world
Data Warehouse → BI Tool

# 2028: Semantic layer-centric world
         ┌─→ BI Tool
Metrics  │
Layer    ├─→ Reverse ETL
         ├─→ ML Features
         ├─→ Internal APIs
         └─→ AI Agents

# The semantic layer becomes the source of truth
# The warehouse becomes just another data source
```

Why this matters:

Metrics layers like Cube and the dbt Semantic Layer (powered by MetricFlow, which came to dbt Labs with its acquisition of Transform) are positioning themselves as the new center of the data stack. Not the warehouse. The definitions.

Imagine a world where:

- You define “active user” once, use it everywhere

- ML models pull features from the same semantic layer as dashboards

- AI agents query metrics, not raw tables

- Reverse ETL syncs semantic objects, not database rows

Bold prediction: By 2030, startups will launch with a semantic layer before they have a data warehouse. The definitions come first; storage is just an implementation detail.

Further reading:

- The Semantic Layer: Metrics Layer Manifesto

- Why We Built Transform

Prediction #4: Edge Computing Will Decentralize Data (Again)

Why centralization was a temporary detour, not the destination

The history of computing is a pendulum:

- 1960s-70s: Mainframes (centralized)

- 1980s-90s: PCs (decentralized)

- 2000s-10s: Cloud (centralized)

- 2020s-30s: Edge (decentralized again)

We’re about to swing back to decentralization, and it will reshape everything about data architecture.

Why edge computing changes the game:

Today, data flows like this:

```
IoT Device → Cloud → Processing → Storage → Analytics
    ↓
~500ms latency
~$$$$ bandwidth costs
~privacy concerns
```

Tomorrow:

```
IoT Device → Edge Processing → Local Analytics → Selective Cloud Sync
    ↓
~5ms latency
~$ bandwidth costs
~privacy by default
```

Real example: Tesla’s self-driving doesn’t send raw video to the cloud for processing. It would be:

- Too slow (you’d be dead before the cloud responded)

- Too expensive (exabytes of video data)

- Too privacy-invasive

Instead, they process at the edge and send only insights to the cloud.

This pattern will spread everywhere:

- Retail: Analytics at the store, not the data center

- Manufacturing: Process monitoring at the factory edge

- Healthcare: Patient data processed locally, only aggregates in cloud

- Smart cities: Traffic optimization at intersection, not HQ
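The common thread in these examples is that raw readings stay local and only a compact summary crosses the network. Here is a minimal sketch of that reduction, assuming readings arrive as `(timestamp_seconds, value)` pairs and that a per-minute summary is what the cloud needs. The window size and the summary fields are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class WindowSummary:
    window_start: int   # start of the window, in seconds
    count: int
    mean: float
    minimum: float
    maximum: float


def summarize(readings: Iterable[tuple[int, float]],
              window_seconds: int = 60) -> list[WindowSummary]:
    """Collapse raw (timestamp, value) readings into one summary per time window."""
    buckets: dict[int, list[float]] = {}
    for ts, value in readings:
        start = ts - ts % window_seconds  # align to the window boundary
        buckets.setdefault(start, []).append(value)
    return [
        WindowSummary(start, len(vals), sum(vals) / len(vals), min(vals), max(vals))
        for start, vals in sorted(buckets.items())
    ]
```

Only the summaries would be synced upstream. For a sensor sampling once per second, that turns 60 readings per minute into a single record.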

What this means for data engineers:

The entire cloud-first architecture inverts. Instead of:

```python
# Current: Pull everything to the center
def analyze_sensor_data():
    raw_data = fetch_from_all_sensors()  # Pull terabytes
    processed = process_in_cloud(raw_data)
    insights = generate_insights(processed)
    return insights
```

We get:

```python
# Future: Process at the edge, sync intelligently
class EdgeAnalytics:
    def __init__(self):
        self.local_model = load_model()
        self.local_cache = EdgeCache()

    def process_sensor_data(self, data):
        # Process locally
        insights = self.local_model.predict(data)
        # Escalate to the cloud only when the local model is unsure,
        # sending the insight plus a small raw sample, never the full stream
        if insights.confidence < 0.95:
            cloud_sync(insights, data_sample=data[:1000])
        return insights

# The edge becomes the primary compute layer
# Cloud becomes backup and coordination
```

My bet: By 2028, more data will be processed at the edge than in the cloud.

Timeline:

- 2025: Edge-first architectures become standard for IoT

- 2027: Major enterprises start repatriating data to edge

- 2030: “Cloud-native” sounds as outdated as “mainframe-based”

Further reading:

- The Edge Computing Opportunity

- Cloudflare’s Bet on Edge Computing

Prediction #5: SQL Will Outlive Every Fancy Alternative

The most controversial take: Stop trying to replace SQL. You won’t win.

Every few years, someone declares “SQL is dead” and launches a replacement:

- 2010: “NoSQL will replace SQL!”

- 2015: “Pandas DataFrames are the new SQL!”

- 2020: “GraphQL is killing SQL!”

- 2025: “AI will make SQL obsolete!”

Here’s the thing: They’re all wrong.

SQL is 50 years old. It has survived:

- Object-oriented databases

- XML databases

- NoSQL

- NewSQL

- Streaming processors

- Graph databases

- Time-series databases

- Every startup that promised to “democratize data without SQL”

Why? Because SQL has three superpowers:

- It’s declarative: You say what you want, not how to get it.

```sql
-- What you want
SELECT users.name, COUNT(orders.id)
FROM users
JOIN orders ON users.id = orders.user_id
WHERE orders.created_at > '2024-01-01'
GROUP BY users.name;
```

```python
# vs. how to get it (imperative), here with pandas
import pandas as pd

users = pd.read_csv("users.csv")    # hypothetical exports of the two tables
orders = pd.read_csv("orders.csv")
filtered_orders = orders[orders.created_at > "2024-01-01"]
# Both tables have an "id" column, so name the suffixes explicitly
joined = pd.merge(users, filtered_orders, left_on="id", right_on="user_id",
                  suffixes=("_user", "_order"))
grouped = joined.groupby("name").agg(order_count=("id_order", "count"))
```

- It’s universal: Every database speaks SQL (or a dialect). Python code? Only Python tools understand it.

- It’s optimizable: Databases can optimize SQL automatically. Code? You optimize it manually.
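The “optimizable” point is easy to see in any SQL engine’s query planner. In this sketch, using Python’s built-in `sqlite3` with a hypothetical `orders` table, the query text never changes; once an index exists, SQLite’s planner switches from a full table scan to an index search on its own. The exact wording of the plan output varies between SQLite versions, so the code only looks for the index name rather than matching a full string.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, amount REAL)")
conn.executemany(
    "INSERT INTO orders (user_id, amount) VALUES (?, ?)",
    [(i % 100, i * 1.5) for i in range(1000)],
)

QUERY = "SELECT COUNT(*) FROM orders WHERE user_id = 42"


def plan(sql: str) -> str:
    """Return SQLite's chosen plan as text (the last, 'detail' column of EXPLAIN QUERY PLAN)."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return " | ".join(row[-1] for row in rows)


before = plan(QUERY)   # typically a full scan of orders
conn.execute("CREATE INDEX idx_orders_user ON orders (user_id)")
after = plan(QUERY)    # typically a search using idx_orders_user

print(before)
print(after)
```

The answer is the same either way; only the execution strategy changed, and nobody had to rewrite the query to get it.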

The future I see:

SQL doesn’t die. It evolves:

```sql
-- 2025: Traditional SQL
SELECT user_id, AVG(purchase_amount)
FROM purchases
WHERE purchase_date > '2024-01-01'
GROUP BY user_id;
```

```sql
-- 2028: AI-enhanced SQL
SELECT user_id,
       AVG(purchase_amount),
       PREDICT_CHURN(user_id) as churn_probability,      -- ML built-in
       EXPLAIN_ANOMALY(purchase_amount) as why_unusual  -- AI explains outliers
FROM purchases
WHERE purchase_date > '2024-01-01'
GROUP BY user_id;

-- 2030: Natural language → SQL → Results
-- User: "Show me users likely to churn"
-- AI: [Generates optimized SQL with ML functions]
-- Database: [Returns results with explanations]
```

SQL becomes the compilation target for AI-generated queries. The human-readable query language evolves, but SQL remains the execution layer.
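If SQL is the compilation target, the database is also a natural place to check what a model generated before running it. Here is a minimal sketch with Python’s `sqlite3`, assuming the generated SQL arrives as a plain string from some hypothetical LLM call. It rejects anything but a single `SELECT` statement, then asks SQLite to compile the query with `EXPLAIN`, which catches syntax errors and unknown tables or columns without executing it. The checks are deliberately conservative (they also reject CTEs and any `;` inside string literals). A production guard would add read-only credentials, row limits, and timeouts.

```python
import sqlite3


class RejectedQuery(Exception):
    """Raised when generated SQL fails validation."""


def validate_generated_sql(conn: sqlite3.Connection, sql: str) -> str:
    """Return the cleaned statement if it is a single SELECT that compiles."""
    statement = sql.strip().rstrip(";").strip()
    if ";" in statement:  # conservative: also rejects ';' inside string literals
        raise RejectedQuery("multiple statements are not allowed")
    first_word = statement.split(None, 1)[0].upper() if statement else ""
    if first_word != "SELECT":
        raise RejectedQuery(f"only SELECT queries are allowed, got {first_word or 'nothing'}")
    try:
        # EXPLAIN compiles the statement into a program but does not run the query.
        conn.execute("EXPLAIN " + statement).fetchall()
    except sqlite3.Error as exc:
        raise RejectedQuery(f"does not compile: {exc}") from exc
    return statement
```

Rejected queries, together with the error message, are also useful feedback to send back to the model for a second attempt.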

My controversial take: Startups building “SQL replacements” are dead wrong. Build tools that make SQL better, not tools that avoid it.

Further reading:

- Why SQL is Beating NoSQL

- Modern SQL in PostgreSQL

Prediction #6: The Great Re-Centralization

Why data mesh was a detour and we’re going back to centralization (but smarter this time)

Remember when everyone was excited about data mesh? Decentralize everything! Domain ownership! Federated architectures!

I think we’re about to realize it was a mistake.

Not because the ideas were wrong. But because distributed systems are really, really hard, and asking every domain team to run their own data infrastructure is like asking every department to run their own IT.

What actually happens with data mesh:

```
Theory:
Domain A → Clean, well-governed data product
Domain B → Clean, well-governed data product
Domain C → Clean, well-governed data product

Reality:
Domain A → Outdated documentation, breaking changes, no monitoring
Domain B → Different naming conventions, inconsistent quality
Domain C → "The person who built this left, nobody knows how it works"
```

The problem: You’ve multiplied your data engineering complexity by the number of domains.

The correction:

We’re going back to centralization, but with lessons learned:

```
# Not this (old centralized):
Central data team owns everything
└─ Bottleneck, slow, can't scale

# Not this (data mesh):
Every domain team owns their data
└─ Chaos, inconsistency, duplicated effort

# This (smart centralization):
Central platform team provides:
- Self-service tools with guardrails
- Automated governance
- Standardized patterns
- 24/7 monitoring

Domain teams use platform to:
- Publish data products easily
- With automatic quality checks
- With consistent semantics
- With zero infrastructure management
```

Think: Platform as a Product, not Data as a Product.
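The “automatic quality checks” a platform team hands to domain teams often start as a data contract: a declared schema that every published record is checked against. Here is a minimal, hypothetical sketch in plain Python. Real platforms typically express contracts with JSON Schema, Protobuf, or dbt tests, none of which are used here. The contract maps column names to expected types and nullability, and `validate` returns human-readable violations instead of silently publishing bad rows.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Column:
    dtype: type
    nullable: bool = False


def validate(rows: list[dict[str, Any]], contract: dict[str, Column]) -> list[str]:
    """Return contract violations; an empty list means the rows may be published."""
    problems = []
    for i, row in enumerate(rows):
        for name in sorted(contract.keys() - row.keys()):
            problems.append(f"row {i}: missing column {name!r}")
        for name in sorted(row.keys() - contract.keys()):
            problems.append(f"row {i}: unexpected column {name!r}")
        for name, col in contract.items():
            if name not in row:
                continue  # already reported as missing
            value = row[name]
            if value is None:
                if not col.nullable:
                    problems.append(f"row {i}: {name!r} must not be null")
            elif not isinstance(value, col.dtype):
                problems.append(
                    f"row {i}: {name!r} should be {col.dtype.__name__}, "
                    f"got {type(value).__name__}"
                )
    return problems


# Hypothetical contract for an "orders" data product.
orders_contract = {
    "order_id": Column(int),
    "amount": Column(float),
    "coupon_code": Column(str, nullable=True),
}
```

Because the platform owns `validate` and each domain only owns its contract, every team gets the same checks without running its own infrastructure.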

My prediction: By 2027, companies that went all-in on data mesh will quietly re-centralize. They won’t call it that — they’ll call it “platform engineering” or “data products platform” — but it’s centralization 2.0.

Further reading:

- Data Mesh: A Skeptical View

- The Return to Centralized Data Platforms

The Endgame: What We’re Actually Building Toward

One database, one semantic layer, AI agents, and nothing else

Let me paint you a picture of 2030:

The architecture that replaces everything:

```
┌─────────────────────────────────────────┐
│           AI Data Agent Layer           │
│ (Understands intent, generates queries) │
└────────────────────┬────────────────────┘
                     │
┌────────────────────▼────────────────────┐
│             Semantic Layer              │
│  (Single source of truth for metrics)   │
└────────────────────┬────────────────────┘
                     │
┌────────────────────▼────────────────────┐
│          Unified HTAP Database          │
│   (Handles transactions + analytics)    │
└─────────────────────────────────────────┘
```

That's it. Three layers:

1. AI agents that understand questions
2. Semantic definitions that encode meaning
3. A database that does everything

What disappears:

- ETL tools (data never leaves the source)

- Data warehouses (unified database handles both)

- dbt (transformations happen in the semantic layer)

- Reverse ETL (no copying needed)

- Data catalogs (semantics are built-in)

- Most data engineering (automated by AI)

What emerges:

- Semantic engineers (defining meaning, not moving data)

- AI orchestrators (teaching agents about your business)

- Data product managers (treating data as products)

- Real-time by default (no batch processing)

So What Do You Do Now?

How to position yourself for the next decade

If you’re a data engineer reading this, you have two choices:

Option 1: Fight the future

- Keep building ETL pipelines

- Defend the modern data stack

- Insist batch processing is fine

- Age out of relevance by 2030

Option 2: Ride the wave

- Learn semantic modeling (metrics layers, data contracts)

- Experiment with unified databases (DuckDB, SingleStore, ClickHouse)

- Understand AI agents (how to teach them about data)

- Position yourself as a semantic engineer, not a pipeline builder

Concrete actions for the next 12 months:

Q1 2026: Pick a unified database and rebuild one pipeline without ETL

```
# Instead of: Postgres → Fivetran → Snowflake → dbt
# Try: Postgres → Materialize (or SingleStore, or ClickHouse)
# Learn: Can you eliminate the middle steps?
```

Q2 2026: Implement a semantic layer for your most critical metrics

```
# Define revenue, churn, activation once
# Use everywhere: dashboards, APIs, ML
# Practice: Semantic-first thinking
```

Q3 2026: Experiment with AI-generated data queries

```
# Use: GPT-4 + your schema to generate SQL
# Learn: What works, what breaks
# Understand: How to give AI better context
```

Q4 2026: Write about what you learned

- Blog posts comparing old vs new approaches

- Case studies of simplified architectures

- Predictions (like this article!)

- Build your reputation as a forward thinker

The meta-skill: Rapid prototyping of data architectures.

The data stack is about to fragment, experiment, and reconsolidate. The winners will be those who can quickly test new approaches, learn what works, and influence which patterns win.

My Personal Bet

Where I’m putting my money (and career)

I’m going all-in on:

- Semantic layers: The definitions become more valuable than the data.

- Unified databases: OLTP/OLAP convergence is inevitable.

- AI orchestration: Teaching AI to understand business context is the new data engineering.

I’m betting against:

- Complex multi-hop data pipelines

- Massive cloud data warehouses

- Batch-first architectures

- “Data engineer” as a long-term job title

If I’m right: In five years, “data engineer” sounds as dated as “webmaster” does today. We become semantic engineers, AI orchestrators, and data product managers.

If I’m wrong: I’ve wasted time learning tools that don’t matter, and the modern data stack continues for another decade.

But here’s the thing: Even if I’m only 30% right, the changes are significant enough to matter.

The Real Question

This article is full of predictions. Some will be right, some will be spectacularly wrong. That’s fine. The goal isn’t to be correct about every detail, but to think deeply about where we’re heading.

So here’s my question for you:

What are you building today that will still matter in five years?

Are you building pipelines that will be automated away? Or are you building the semantics, the context, the business understanding that AI can’t easily replicate?

The next five years will separate data engineers who can evolve from those who can’t. The technical skills matter less than the ability to see around corners.

Choose wisely.

Further Reading & Resources

Books to read now:

- The Future of Data — O’Reilly’s take on where data is heading

- Staff Engineer — How to stay relevant as tech evolves

Companies to watch:

- Materialize — Streaming SQL database

- SingleStore — Unified transactional + analytical

- DuckDB — Embedded analytics

- Cube — Semantic layer platform

- Malloy — Google’s semantic modeling language

Podcasts:

- The Data Engineering Show

- Analytics Engineering Podcast

Communities:

- Data Engineer Club on Discord

- dbt Slack

Disagree with everything I wrote? Good. That means you’re thinking critically. Drop a comment with your counter-predictions — I want to know where YOU think this is all heading.

And if you found this valuable, share it with someone who needs to think about the future of their career in data.

To understand where databases are going, you need to understand where they came from. Designing Data-Intensive Applications is the definitive guide to how storage engines, replication, and distributed systems actually work. Essential reading for anyone building data infrastructure.
