#local-inference

2 articles

-

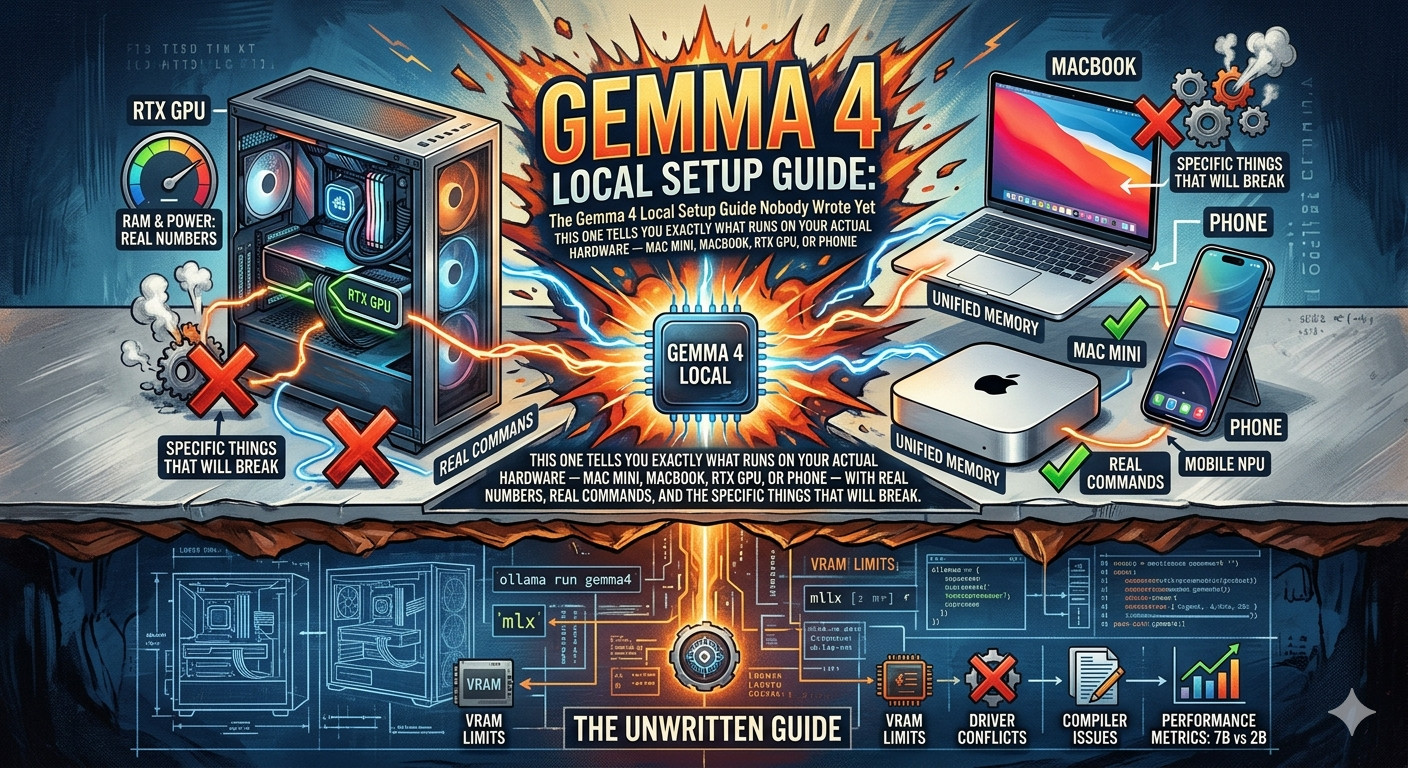

The Gemma 4 Local Setup Guide Nobody Wrote Yet

Hardware-specific guide to running Gemma 4 locally: which model fits your Mac/GPU, Ollama vs MLX, Apple Silicon memory tuning, real tok/s numbers, and troubleshooting the things that actually break.

-

Gemma 4: The Pocket Rocket That Wants to Kill Your API Bill

Google DeepMind's Gemma 4 brings frontier-level reasoning to local hardware under the Apache 2.0 license: 89.2% on AIME, 80% on LiveCodeBench, native function calling, a 256K context window, and builds that run on everything from phones to Mac minis.