The Harness Is Everything

What Cursor, Claude Code, and Perplexity actually built — and why the model was never the real product.

AI Engineering | Architecture | Developer Tools | April 2026

~14 min read

Disclaimer: This article is an independently written analysis of harness engineering in AI systems. All statistics and benchmarks cited are sourced from publicly available research (SWE-agent / NeurIPS 2024, Latent Space, Medium, and Coalesce 2026). Code examples are illustrative and not production-ready. No vendor endorsement is implied or intended.

The conversation nobody’s having correctly

There is a conversation happening right now across every engineering Slack, every AI-adjacent team standup, and every conference hallway that goes something like this: somebody shows off a tool that feels almost magical, a coding agent that ships whole features in minutes, and then somebody else says, “yeah, but with a different model it was useless.” Both people are right. And neither one quite understands why.

The answer isn’t hiding in a model card or a benchmark. It isn’t a temperature trick or a system-prompt secret. The answer — one that a growing number of serious AI engineers are converging on with surprising unanimity — is the harness. The environment the model lives inside. Not the brain, but the body.

It’s the same argument that keeps surfacing when you look carefully at what Anthropic built to make Claude Code work, what OpenAI built to ship one million lines of code with Codex, and what Princeton’s NLP group discovered when they ran the same model through two different interfaces on the same benchmark. Same weights. Radically different results.

“You are not using AI wrong because you haven’t found the right model. You are using AI wrong because you haven’t built the right environment.”

That framing cuts straight to the problem. The AI industry has spent two years arguing about which model is smarter, which lab is winning, which benchmark matters. Meanwhile, the teams actually shipping products at scale have quietly moved the interesting work somewhere else entirely.

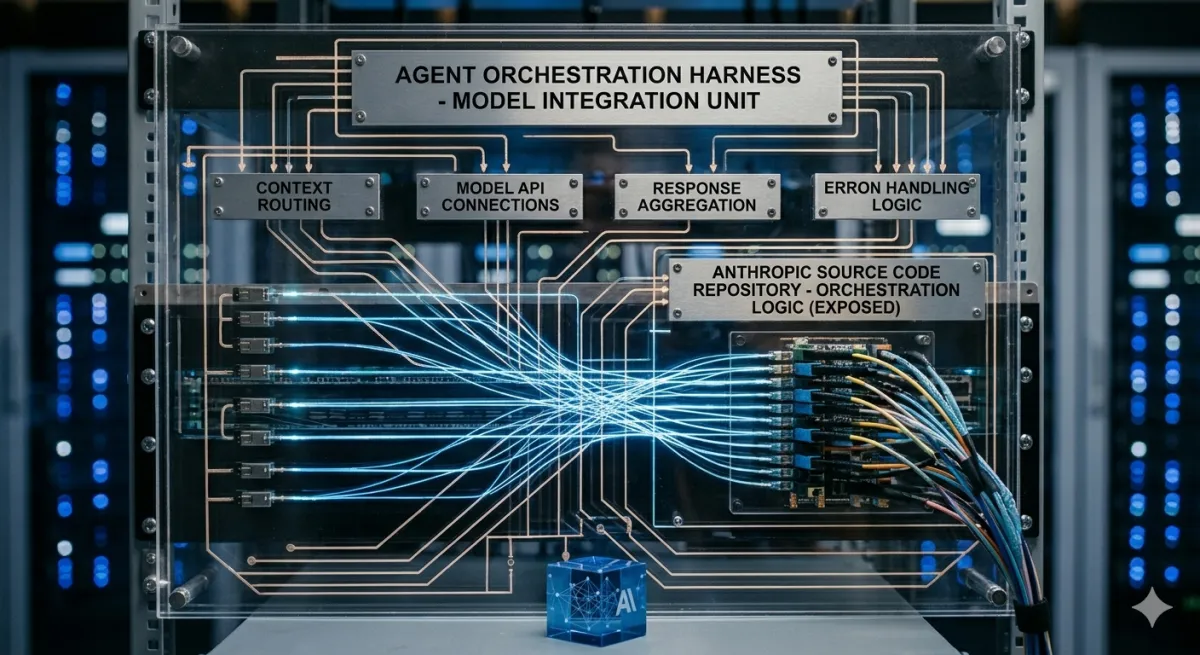

What a harness actually is

The word gets used carelessly enough that it’s worth being precise. A harness is not a system prompt. It’s not a wrapper around an API call. It’s not a framework or a library or a “smart chatbot with memory.”

A harness is the complete designed environment inside which a language model operates — the tools it can invoke, the format of information it receives, how its conversation history is compressed and managed, the guardrails that catch its mistakes before they cascade, the feedback loops that let it observe the consequences of its actions, and the scaffolding that allows it to hand off work to its future self without losing coherence across long tasks.

The metaphor is deliberately borrowed from engineering: in electrical testing, a harness connects components while isolating them from each other. In climbing, the harness transfers load from the body’s weakest points to its strongest. In both cases, the harness doesn’t do the work — it governs how the work gets done. Martin Fowler’s team made the same point about coding agents: reins, saddle, bit — the complete equipment for channeling a powerful but unpredictable animal in a useful direction.

| Metric | Value | Context |
|---|---|---|
| GPT-4 Turbo on SWE-bench with standard bash shell | 3.97% | Baseline interface |
| Same GPT-4 Turbo with Princeton’s purpose-built ACI harness | 12.47% | Custom Agent-Computer Interface |
| Performance improvement | 3x | Same model, different environment |

Those three numbers come from the SWE-agent paper out of Princeton NLP, published at NeurIPS 2024. The task was real software engineering work: given a GitHub issue description, produce a code patch that resolves it. The model didn’t change. The researchers gave it a purpose-built Agent-Computer Interface instead of a generic shell, with tailored file navigation commands, linting feedback baked into every edit step, and search tools designed to prevent context flooding. The interface — the harness — tripled performance without touching the model at all.
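To make "linting feedback baked into every edit step" concrete, here is a minimal sketch of an edit command that refuses to apply a change if the resulting file no longer parses. It is not SWE-agent's actual implementation: the function name `lint_gated_edit`, the `EditResult` type, and the use of Python's `ast.parse` as a stand-in for a real linter are all assumptions made for illustration.

```python
import ast
from dataclasses import dataclass


@dataclass
class EditResult:
    applied: bool
    message: str
    source: str  # file contents after the call (unchanged if the edit was rejected)


def lint_gated_edit(source: str, start: int, end: int, replacement: str) -> EditResult:
    """Replace lines start..end (1-indexed, inclusive) only if the result still parses."""
    lines = source.splitlines()
    if not (1 <= start <= end <= len(lines)):
        return EditResult(False, f"invalid line range {start}-{end}", source)

    candidate_lines = lines[: start - 1] + replacement.splitlines() + lines[end:]
    candidate = "\n".join(candidate_lines) + "\n"
    try:
        ast.parse(candidate)  # hypothetical stand-in for a real linter
    except SyntaxError as err:
        # Reject the edit and tell the agent what went wrong, instead of
        # letting a broken file sit on disk until a later step trips over it.
        return EditResult(False, f"edit rejected: line {err.lineno}: {err.msg}", source)
    return EditResult(True, "edit applied", candidate)


original = "def add(a, b):\n    return a + b\n"
bad = lint_gated_edit(original, 2, 2, "    return a +")
good = lint_gated_edit(original, 2, 2, "    return a + b + 0")
print(bad.applied, bad.message)
print(good.applied, good.message)
```

The design point is that the rejection message goes straight back to the model as the tool result, so the mistake is caught at the step that caused it.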

The core loop nobody talks about

Here’s the thing that becomes obvious once you look at Claude Code, Cursor’s agent mode, and Manus side by side: they are all, at their architectural core, the same two dozen lines of logic.

```python
# Every production coding agent -- Cursor, Claude Code, Manus -- runs this loop.
# The harness is everything that happens INSIDE each step.
# Illustrative only: `model`, `Harness`, and `Result` are assumed to be defined
# elsewhere; they are not a real library.
async def agent_loop(task: str, harness: "Harness") -> "Result":
    context = await harness.build_context(task)        # scoped, not dumped
    history = []

    while True:
        response = await model.call(
            messages=history + [context],
            tools=harness.available_tools(),            # filtered, not full universe
        )

        if response.is_done():
            return harness.validate_and_deliver(response)

        for call in response.tool_calls:
            result = await harness.execute(call)        # sandboxed execution
            harness.lint_if_code(result)                # deterministic gates
            history.append((call, result))              # compressed, not raw

        if harness.retry_limit_exceeded(history):
            return harness.escalate_to_human(history)   # Stripe caps at 2
```

That’s it. The entire architecture of Claude Code, Cursor’s agent, and Manus fits inside that loop: while the model returns tool calls, execute the tool, capture the result, append to context, call the model again. The model is a single call inside the loop. The harness is everything else, and “everything else” is where the real engineering lives.
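To see that control flow run end to end, here is a toy synchronous version. Everything in it is an assumption made for illustration: `ScriptedModel` replays canned responses instead of calling a real model, the `word_count` tool is invented, and a simple step limit stands in for the harness's retry and escalation logic.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Response:
    text: str = ""
    # Each tool call is (tool name, argument); an empty list means "done".
    tool_calls: list[tuple[str, str]] = field(default_factory=list)


class ScriptedModel:
    """Hypothetical stand-in for a real model: replays canned responses in order."""

    def __init__(self, script: list[Response]):
        self._script = iter(script)

    def call(self, messages: list[str], tools: list[str]) -> Response:
        return next(self._script)


def run_agent(task: str, model: ScriptedModel,
              tools: dict[str, Callable[[str], object]],
              max_steps: int = 10) -> tuple[str, list[str]]:
    history = [f"task: {task}"]
    for _ in range(max_steps):
        response = model.call(history, list(tools))
        if not response.tool_calls:           # no tool calls: the model is finished
            return response.text, history
        for name, arg in response.tool_calls:
            result = tools[name](arg)         # execute the tool
            history.append(f"{name}({arg!r}) -> {result!r}")  # feed the result back
    raise RuntimeError("step limit reached without a final answer")


tools = {"word_count": lambda text: len(text.split())}
model = ScriptedModel([
    Response(tool_calls=[("word_count", "the harness is everything")]),
    Response(text="4 words"),
])
answer, trace = run_agent("count the words", model, tools)
print(answer)
print(trace)
```

Swapping `ScriptedModel` for a real client changes one class. Everything else in the function is harness.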

What Anthropic built (and what they admitted)

Claude Code’s architects — Boris Cherny and Cat Wu — have been publicly candid in a way that cuts against the model-hype narrative. In repeated interviews, they’ve described Claude Code as deliberately the thinnest possible wrapper over the model, rewritten from scratch roughly every three to four weeks. The “secret sauce,” in their words, is entirely in the model. Their job is to build the minimal environment that lets the model express what it already knows how to do.

That sounds like the anti-harness argument. It isn’t. A minimal harness is still a harness — it just means Anthropic has decided that the model is capable enough that heavy scaffolding gets in the way. But even the minimal version encodes critical design decisions: what startup context the agent receives at the beginning of every session, how conversation history gets compressed to prevent drift, which tools are available and in what format, and where human review is required versus optional.
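As one illustration of the history-compression decision, the sketch below keeps the most recent turns verbatim and collapses everything older into a single digest turn. This is not how Claude Code does it; the `Turn` type, the `compact_history` name, and the truncation-based "summary" (a crude stand-in for a model-written one) are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    role: str      # "user", "assistant", or "tool"
    content: str


def compact_history(history: list[Turn], keep_recent: int = 4,
                    max_chars: int = 80) -> list[Turn]:
    """Keep the last `keep_recent` turns verbatim; fold older turns into one digest."""
    if len(history) <= keep_recent:
        return list(history)

    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    # Truncating each old turn is a placeholder for a real summarization step.
    digest_lines = [f"- {turn.role}: {turn.content[:max_chars]}" for turn in older]
    digest = Turn("user", "Summary of earlier steps:\n" + "\n".join(digest_lines))
    return [digest] + recent


history = [Turn("user" if i % 2 == 0 else "assistant", f"step {i}") for i in range(10)]
compacted = compact_history(history, keep_recent=4)
print(len(history), "->", len(compacted))
print(compacted[0].content)
```

The trade-off is explicit: recent turns stay exact because the model is most likely to act on them, while older turns are allowed to lose detail.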

Claude Code went from zero to the most-used AI coding tool in eight months, overtaking both GitHub Copilot and Cursor in usage, with 46% developer satisfaction versus 19% and 9% respectively. That didn’t happen because Anthropic’s models are uniquely better. It happened because the model and the harness were built by the same team with the same understanding of each other’s constraints.

“The model is almost irrelevant. The harness is everything. Every failure is a signal about what the environment needs — not what the model needs.”

The MCP problem: when tools become a firehose

One of the sharper observations in this space concerns the MCP (Model Context Protocol) ecosystem that Anthropic introduced and the industry quickly embraced — and then ran into a wall with at scale.

The problem is cognitive overload. MCP works cleanly in demos. Load a GitHub MCP server with 90-plus tools and you’re pushing 50,000 tokens of JSON schemas into the model’s context window before it has read a single line of the user’s actual request. The model isn’t reasoning about the task at that point — it’s trying to memorize a manual. Accuracy drops as tool count rises, and it drops fast when those tools get chained.
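A quick back-of-the-envelope check on those figures, treating "90-plus tools" as exactly 90 and assuming schema size is spread evenly across tools (both simplifications):

```python
TOOL_COUNT = 90          # "90-plus tools" in the text; treated as exactly 90 here
SCHEMA_TOKENS = 50_000   # total schema tokens quoted in the text

tokens_per_tool = SCHEMA_TOKENS / TOOL_COUNT


def schema_cost(tools_loaded: int) -> int:
    """Estimated schema tokens when only `tools_loaded` tools are placed in context."""
    return round(tools_loaded * tokens_per_tool)


print(round(tokens_per_tool))  # average tokens per tool schema
print(schema_cost(5))          # cost of loading only five tools
```

That works out to roughly 556 tokens per tool, so loading five tools instead of ninety costs about 2,800 tokens, which is in line with the 3k-token figure quoted in this section.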

The fix Anthropic shipped wasn’t a patch to MCP. It was a different relationship between model and tools altogether — an approach the community has started calling “Skills,” which function like RAG applied to tooling rather than documents:

```python
# Old approach: dump everything into context
#   tools_payload = json.dumps(ALL_90_MCP_TOOLS)   <- 50k tokens
#   model.call(system=tools_payload + task)        <- firehose

# New approach: Skills = lazy-loaded tool retrieval.
# Illustrative only: `Tool`, `semantic_search`, and `full_schema` are
# placeholders assumed to be defined elsewhere.
class SkillsLayer:
    def __init__(self, tool_registry: dict[str, "Tool"]):
        # Agent sees only lightweight metadata initially
        self.metadata_index = {
            name: {"description": tool.description, "tags": tool.tags}
            for name, tool in tool_registry.items()
        }

    async def resolve(self, task_intent: str) -> list["Tool"]:
        # Context is PULLED, not pushed:
        # the agent reads the full schema only for relevant tools
        relevant = await semantic_search(
            query=task_intent,
            index=self.metadata_index,
            top_k=5,  # 5 tools vs 90 -- massive difference
        )
        return [full_schema(t) for t in relevant]

# Skills don't replace MCP. They domesticate it.
# A 50k-token dump becomes a targeted 3k-token retrieval.
```

The architecture lesson generalizes: the harness must manage what the model sees, not just what the model does. Feeding a capable model bad or overwhelming input is as damaging as giving it no tools at all. Attention is the scarce resource, and the harness is the attention budget manager.
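For readers who want something they can run, here is a self-contained variant of the same idea. It swaps semantic search for plain keyword overlap, which is a much cruder technique, and every name in it (`Tool`, `KeywordSkillsLayer`, the four example tools) is hypothetical rather than part of MCP or any Anthropic API.

```python
from dataclasses import dataclass, field


@dataclass
class Tool:
    name: str
    description: str
    tags: list[str] = field(default_factory=list)
    schema: dict = field(default_factory=dict)  # full JSON schema, only read on demand


def _words(text: str) -> set[str]:
    """Lowercased words with surrounding punctuation stripped."""
    stripped = (w.strip(".,:;()").lower() for w in text.split())
    return {w for w in stripped if w}


class KeywordSkillsLayer:
    def __init__(self, registry: dict[str, Tool]):
        self.registry = registry
        # Only descriptions and tags are indexed up front, never full schemas.
        self.index = {
            name: _words(tool.description) | {t.lower() for t in tool.tags}
            for name, tool in registry.items()
        }

    def resolve(self, task_intent: str, top_k: int = 5) -> list[Tool]:
        query = _words(task_intent)
        scored = [(len(query & words), name) for name, words in self.index.items()]
        # Highest overlap first, ties broken by name; tools with no overlap are dropped.
        ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
        return [self.registry[name] for _, name in ranked[:top_k]]


registry = {
    "create_issue": Tool("create_issue", "Create a new GitHub issue", ["issues"]),
    "list_pull_requests": Tool("list_pull_requests", "List open pull requests", ["pulls"]),
    "merge_pull_request": Tool("merge_pull_request", "Merge a pull request", ["pulls"]),
    "get_file": Tool("get_file", "Read a file from a repository", ["contents"]),
}
skills = KeywordSkillsLayer(registry)
selected = skills.resolve("merge the open pull request for the login fix", top_k=2)
print([tool.name for tool in selected])
```

Keyword overlap will miss synonyms that an embedding-based search would catch. The structure is the point: a cheap index up front, and full schemas only for the handful of tools that survive retrieval.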

How the big teams actually do it

Across a two-week window in February 2026, several of the most serious engineering organizations in the industry showed their cards simultaneously.

Stripe revealed its Minions system, merging 1,300-plus AI-written pull requests per week. Perplexity launched Computer, a multi-model orchestration engine delegating across 19 specialized AI models. Anthropic shipped Cowork, a desktop agent that triggered a reported $285 billion selloff in enterprise software stocks.

What’s striking is how convergent the underlying patterns are, despite these being very different products from very different organizations:

| Layer | What It Does | The Harness Decision |
|---|---|---|
| Task routing | Decide what gets automated vs. escalated | The whole game: get this right and unlock agent-pace development for the majority of tickets |
| Context scoping | What the agent can see | Stripe uses directory-scoped rule files; the agent cannot access what it cannot see in-context |
| Deterministic gates | Quality enforcement between steps | Run the linter automatically. Run the tests. Cap retries at 2. If the agent can’t fix it in 2 attempts, hand off to a human |
| Lifecycle management | Preventing drift across long tasks | Context compression, standardized startup sequences, history summarization |
| Feedback loops | What the agent observes after acting | Puppeteer for end-to-end browser verification; without it, bugs invisible from code pass undetected |
| Human gates | Where review sits in the workflow | Plan approval before, output review after; nowhere in between |

Perplexity’s own data shows no single model commanding more than 25% of enterprise usage by December 2025. The model is interchangeable. The harness is the product.
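The context-scoping row in the table above can be sketched as a lookup that walks from the file being edited up to the repository root and collects any rule files along the way. This is a guess at the general shape, not Stripe's implementation; the file name `AGENT_RULES.md` and the function `collect_rules` are hypothetical.

```python
import tempfile
from pathlib import Path


def collect_rules(target: Path, repo_root: Path,
                  rule_name: str = "AGENT_RULES.md") -> list[Path]:
    """Return rule files that apply to `target`, ordered from repo root downwards."""
    target, repo_root = target.resolve(), repo_root.resolve()
    directory = target if target.is_dir() else target.parent
    if directory != repo_root and repo_root not in directory.parents:
        raise ValueError(f"{target} is outside {repo_root}")

    chain = [directory]
    while chain[-1] != repo_root:
        chain.append(chain[-1].parent)   # walk upwards until the repo root
    return [d / rule_name for d in reversed(chain) if (d / rule_name).is_file()]


# Demo on a throwaway directory tree.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp).resolve()
    (root / "payments" / "api").mkdir(parents=True)
    (root / "search").mkdir()
    for d in (root, root / "payments", root / "search"):
        (d / "AGENT_RULES.md").write_text("rules\n")
    handler = root / "payments" / "api" / "handler.py"
    handler.write_text("pass\n")

    rules = collect_rules(handler, root)
    applied = [p.relative_to(root).as_posix() for p in rules]

print(applied)
```

An agent editing `payments/api/handler.py` gets the root rules and the payments rules, and never sees the rules for `search/`.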

The trust problem at scale

There’s a failure mode that takes a while to surface but is consistently the most painful one to debug: confidence compounding.

When Agent A writes code with high confidence and Agent B writes tests with high confidence and the tests pass — who verified that the tests actually test the right thing? The pipeline looks green. The output reaches its audience. A subtly wrong fact, a slightly off business logic, a test that checks the wrong invariant — all of these travel through the system wearing the costume of validated output.

The harness has to solve this too, and most early implementations simply don’t. Scepticism doesn’t propagate automatically through a multi-agent system. It has to be designed in at every handoff point.

Harness design for trust — practical checklist:

- Linting at every edit. Not as an optional step — as a hard gate. The SWE-agent ablation studies showed linting integration was consistently among the highest-leverage components

- Retry caps. Stripe caps at two attempts. A third attempt on the same failure almost never produces a different result — it just burns tokens and creates a false sense of progress

- Scope isolation. Each agent task runs in an isolated sandbox, pre-warmed, with no production access by default

- End-to-end feedback. Give agents access to the full domain they’re affecting, not just the code. Browser automation that lets the agent see what a user would see catches entire classes of bugs that code review misses

- Human review at the boundary. Approve the plan before the agent starts. Review the output after it finishes. No human dependency anywhere in between — that just makes agents slower than a developer

- Audit trails on everything. What was generated, by which model, with what context, at what cost. This isn’t optional when agents are contributing to production systems
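The first two checklist items, hard gates and retry caps, combine naturally into one small function. The sketch below is an assumption about the general shape, not any vendor's code: `run_with_gates`, `Outcome`, and the scripted generators are hypothetical, and Python's built-in `compile` stands in for a real linter or test run.

```python
from dataclasses import dataclass
from typing import Callable, Optional

Gate = Callable[[str], Optional[str]]   # returns an error message, or None on pass


@dataclass
class Outcome:
    status: str            # "accepted" or "escalated"
    attempts: int
    patch: str
    failures: list[str]


def run_with_gates(generate: Callable[[list[str]], str], gates: list[Gate],
                   max_attempts: int = 2) -> Outcome:
    failures: list[str] = []
    patch = ""
    for attempt in range(1, max_attempts + 1):
        patch = generate(failures)   # the agent sees earlier gate failures
        errors = [msg for gate in gates if (msg := gate(patch)) is not None]
        if not errors:
            return Outcome("accepted", attempt, patch, failures)
        failures.extend(errors)
    # Cap reached: stop burning tokens and hand the full failure log to a human.
    return Outcome("escalated", max_attempts, patch, failures)


def syntax_gate(patch: str) -> Optional[str]:
    try:
        compile(patch, "<patch>", "exec")
    except SyntaxError as err:
        return f"syntax error: {err.msg}"
    return None


def fixes_on_retry(failures: list[str]) -> str:
    return "x = (1" if not failures else "x = 1"


def never_fixes(failures: list[str]) -> str:
    return "x = (1"


fixed = run_with_gates(fixes_on_retry, [syntax_gate])
stuck = run_with_gates(never_fixes, [syntax_gate])
print(fixed.status, fixed.attempts)
print(stuck.status, stuck.attempts, len(stuck.failures))
```

The gates are ordinary deterministic functions. The only judgment call is the value of `max_attempts`, which the text above puts at two.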

Harness engineering at scale — by codebase size

One of the most practically useful frameworks to emerge from the 2026 wave of harness thinking is a tiered model based on codebase size. The harness you need for a 50,000-line project is genuinely different from the one you need for a 10-million-line one — not in philosophy, but in complexity and investment.

Under 100K lines: use the agent

If your codebase fits in a context window, you don’t need a custom harness. Use Claude Code, set up a CLAUDE.md that encodes your architecture decisions, naming conventions, and testing expectations clearly, and invest in test coverage so the agent can self-correct. The real work at this scale is writing good context, not building infrastructure.

```markdown
# CLAUDE.md -- your harness at small scale

This file IS the harness at sub-100k line projects

## Architecture
- Services follow hexagonal architecture (domain/ports/adapters)
- No direct DB calls from controllers -- always go through repositories
- Async-first: all IO must be awaitable

## Testing
- Unit tests for all domain logic (no mocks for external)
- Integration tests hit real DB (testcontainers)
- New feature = test first. No exceptions.

## Conventions
- Error types live in errors/ -- never raise raw exceptions
- Feature flags via flags.py -- no hardcoded conditionals
- All env vars documented in .env.example

## Before submitting any code
1. Run: pytest tests/ -x --tb=short
2. Run: ruff check . && mypy src/
3. Verify no TODO comments remain in changed files
```

100K-1M lines: the thin custom harness

This is where most of the industry’s actual opportunity sits. The codebase now exceeds a single context window. Conventions are too complex for a flat file. Generic agents get things subtly wrong with domain logic they can’t fully see.

The fix is a thin harness you write yourself — handling three things and only three things: task routing (which tickets are safe for autonomous execution), context scoping (selecting the relevant modules and rules rather than the whole codebase), and constraint enforcement (linting, testing, and retry caps that run without human involvement).
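Of those three, task routing is the easiest to sketch. The version below is a deliberately simple rule-based router; the label names, protected path prefixes, and the `Ticket` type are all hypothetical, and a real team would encode its own risk tolerance here.

```python
from dataclasses import dataclass, field


@dataclass
class Ticket:
    title: str
    labels: set[str] = field(default_factory=set)
    touched_paths: list[str] = field(default_factory=list)


# Hypothetical policy: these values are placeholders for team-specific rules.
SAFE_LABELS = {"lint", "dependency-bump", "flaky-test", "docs"}
PROTECTED_PREFIXES = ("billing/", "auth/", "migrations/")


def route(ticket: Ticket) -> str:
    """Return "agent" for autonomous execution or "human" for escalation."""
    if any(path.startswith(PROTECTED_PREFIXES) for path in ticket.touched_paths):
        return "human"   # risky areas always get a person
    if ticket.labels & SAFE_LABELS:
        return "agent"   # known-safe categories run autonomously
    return "human"       # when unsure, escalate


tickets = [
    Ticket("Bump requests to latest", {"dependency-bump"}, ["requirements.txt"]),
    Ticket("Fix lint warning in invoices", {"lint"}, ["billing/invoice.py"]),
    Ticket("Redesign onboarding flow"),
]
decisions = [route(t) for t in tickets]
print(decisions)
```

Note the default: anything the rules do not explicitly recognize goes to a human, so the autonomous set only grows when someone deliberately widens it.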

MIT’s Missing Semester course now teaches that the core concepts of a tool-calling agent harness can be implemented in 200 lines of code. Those 200 lines encode team domain knowledge, risk tolerance, and quality standards. Nobody else can write them — which is exactly what makes them defensible.

1M+ lines: platform engineering

The problem at this scale changes from “how does one team use agents” to “how do twenty teams use agents with different codebases, conventions, and risk profiles.” The investment here is fundamentally different: environment provisioning, multi-agent coordination, governance, and — before any of it — test infrastructure.

Three million tests sound excessive until you realize they are the foundation Stripe needed before 1,300 autonomous pull requests per week became possible. The tests come before the agents. Always.

The big model vs. big harness debate

There’s an honest tension worth naming here. Anthropic’s own team argues that Claude Code’s power comes from the model, not the scaffolding — and they’ve kept the harness deliberately minimal as evidence. OpenAI’s Noam Brown has argued publicly that reasoning models will eventually make scaffolding obsolete, the same way reasoning models replaced the complex agentic chains people built before they existed.

Both positions have merit. Latent Space’s swyx, who has long respected the Bitter Lesson, has increasingly acknowledged that “Harness Engineering” has real value, particularly as the Agent Labs thesis played out with Cursor reaching a $50B valuation.

The honest synthesis is probably this: model capability and harness design are complements, not substitutes. A better model makes a good harness better. But a weak harness caps what even a great model can do. And for teams shipping in production right now, harness improvements are faster, cheaper, and more controllable than waiting for the next model release.

LangChain’s coding agent jumped from the top 30 to the top 5 on Terminal Bench by changing nothing about the model — only the harness. Same weights. Different environment. That’s not a fluke; it’s a pattern that recurs across every serious deployment analysis of 2025 and 2026.

“Every AI company has access to the same model weights. Some agents feel magical and others feel broken. The model was never the moat. The harness and the context are.”

What this means if you’re building

The shift in where valuable engineering work lives is already underway, and it has concrete implications for what skills to develop and where to invest.

Context engineering is the new prompt engineering. The question isn’t “how do I phrase this?” It’s “what does this agent need to know, in what format, at what point in the task?” Writing a CLAUDE.md or an equivalent context file for your codebase is now as important as writing documentation — and arguably more immediately impactful.

Deterministic gates are your safety net. The linter, the test suite, the type checker — these are not obstacles to agent development. They’re the guardrails that make aggressive agent autonomy safe. Every team running agents at speed has invested heavily in automated quality enforcement, not to slow agents down, but to let them go faster with confidence.

Feedback loop design is the highest-leverage work. The quality of an agent’s work is bounded by the quality of its feedback loops. If an agent cannot observe the consequences of its actions in the domain that matters, it will optimize for proxy metrics that may not correlate with actual correctness. Giving agents access to end-to-end verification — running the actual application, not just the tests — consistently unlocks the largest performance jumps.

The human’s job is changing shape, not disappearing. When something fails in a well-designed harness, the diagnostic question shifts from “how do I fix this bug?” to “what structural piece of the environment is missing that is causing this bug to appear?” That’s a different kind of thinking — more systems design, less code writing. The engineers adapting fastest are the ones who’ve already started thinking that way.

Build for the 15%, not the 85%. A harness that handles the confident 85% autonomously and escalates the ambiguous 15% to humans is a compounding advantage. The 85% runs at agent speed. The 15% gets human quality. The whole system improves as the escalation boundary gets clearer over time.

There’s a version of this conversation that turns into another wave of hype — harness engineering as the new buzzword, another concept to add to the pile. That would be a waste. The core idea is actually simple and old: tools work better when the environment they’re used in is designed for them. Surgeons have operating rooms. Pilots have cockpits. Language models need their own purpose-built interfaces.

The companies that figured this out early — and the engineers who are figuring it out now — aren’t winning because they found the magic model. They’re winning because they built the environment that lets the model do what it was already capable of doing.

The model is almost irrelevant. The harness is everything.

Recommended reading

If you’re designing agent harnesses and want to understand the distributed systems patterns underneath — context management, state isolation, feedback loops, and fault tolerance — Designing Data-Intensive Applications by Martin Kleppmann covers every architectural principle that matters.

Sources:

- SWE-agent benchmarks (3.97% to 12.47% with ACI): Yang et al., “SWE-agent: Agent-Computer Interfaces Enable Automated Software Engineering,” NeurIPS 2024.
- Claude Code satisfaction stats (46% vs 19% vs 9%), Stripe 1,300 PRs/week, Perplexity model distribution data: cited from Deb Acharjee, “THE CI/CD OF CODE ITSELF,” Medium, March 2026.
- Harness Engineering debate, Cursor $50B valuation: Latent Space, “[AINews] Is Harness Engineering real?”, March 2026.
- MCP cognitive overload / Skills architecture: consistent with publicly documented Anthropic architecture.
- MIT Missing Semester / 200-line harness: cited from secondary source, not independently verified from primary.

All code examples are original and illustrative.

No financial, career, or technical advice implied. The author has no commercial relationship with any platform or vendor mentioned.
